Here’s a post that’s suitable for the Thanksgiving season.

I no longer believe in the Shangri-La diet. Here’s the story.

Background

I met Seth Roberts back in the early 1990s when we were both professors at the University of California. He sometimes came to the statistics department seminar and we got to talking about various things; in particular we shared an interest in statistical graphics. Much of my work in this direction eventually went toward the use of graphical displays to understand fitted models. Seth went in another direction and got interested in the role of exploratory data analysis in science, the idea that we could use graphs not just to test or even understand a model but also as the source of new hypotheses. We continued to discuss these issues over the years.

At some point when we were at Berkeley the administration was encouraging the faculty to teach freshman seminars, and I had the idea of teaching a course on left-handedness. I’d just read the book by Stanley Coren and thought it would be fun to go through it with a class, chapter by chapter. But my knowledge of psychology was minimal so I contacted the one person I knew in the psychology department and asked him if he had any suggestions of someone who’d like to teach the course with me. Seth responded that he’d be interested in doing it himself, and we did it.

Seth was an unusual guy—not always in a good way, but some of his positive traits were friendliness, inquisitiveness, and an openness to consider new ideas. He also struggled with mood swings, social awkwardness, and difficulties with sleep, and he attempted to address these problems with self-experimentation.

After we taught the class together we got together regularly for lunch and Seth told me about his efforts in self-experimentation involving sleeping hours and mood. Most interesting to me was his discovery that seeing life-sized faces in the morning helped with his mood. I can’t remember how he came up with this idea, but perhaps he started by following the recommendation that is often given to people with insomnia to turn off TV and other sources of artificial light in the evening. Seth got in the habit of taping late-night talk-show monologues and then watching them in the morning while he ate breakfast. He found himself happier, did some experimentation, and concluded that we had evolved to talk with people in the morning, and that life-sized faces were necessary. Seth lived alone, so the more natural approach of talking over breakfast with a partner was not available.

Seth’s self-experimentation went slowly, with lots of dead-ends and restarts, which makes sense given the difficulty of his projects. I was always impressed by Seth’s dedication in this, putting in the effort day after day for years. Or maybe it did not represent a huge amount of labor for him; perhaps it was something like a diary or blog, which is pleasurable to create even if it seems from the outside to be a lot of work. In any case, from my perspective, the sustained focus was impressive. He had worked for years to solve his sleep problems and only then turned to the experiments on mood.

Seth’s academic career was unusual. He shot through college and graduate school to a tenure-track job at a top university, then continued to do publication-quality research for several years until receiving tenure. At that point he was not a superstar but I think he was still considered a respected member of the mainstream academic community. But during the years that followed, Seth lost interest in that thread of research. He told me once that his shift was motivated by teaching introductory undergraduate psychology: the students, he said, were interested in things that would affect their lives, and, compared to that, the kind of research that leads to a productive academic career did not seem so appealing.

I suppose that Seth could’ve tried to do research in clinical psychology (Berkeley’s department actually has a strong clinical program) but instead he moved in a different direction and tried different things to improve his sleep and then, later, his skin, his mood, and his diet. In this work, Seth applied what he later called his “insider/outsider perspective”: he was an insider in that he applied what he’d learned from years of research on animal behavior, an outsider in that he was not working within the existing paradigm of research in physiology and nutrition.

At the same time he was working on a book project, which I believe started as a new introductory psychology course focused on science and self-improvement but ultimately morphed into a trade book on ways in which our adaptations to Stone Age life were not serving us well in the modern era. I liked the book but I don’t think he found a publisher. In the years since, this general concept has been widely advanced and many books have been published on the topic.

When Seth came up with the connection between morning faces and depression, this seemed potentially hugely important. Were the faces really doing anything? I have no idea. On one hand, Seth was measuring his own happiness and doing his own treatments on his own hypothesis, so the potential for expectation effects is huge. On the other hand, he said the effect he discovered was a surprise to him and he also reported that the treatment worked with others. Neither he nor, as far as I know, anyone else, has attempted a controlled trial of this idea.

In his self-experimentation, Seth lived the contradiction between the two tenets of evidence-based medicine: (1) Try everything, measure everything, record everything; and (2) Make general recommendations based on statistical evidence rather than anecdotes.

Seth’s ideas were extremely evidence-based in that they were based on data that he gathered himself or that people personally sent in to him, and he did use the statistical evidence of his self-measurements, but he did not put in much effort to reduce, control, or adjust for biases in his measurements, nor did he systematically gather data on multiple people.

The Shangri-La diet

Seth’s next success after curing his depression was losing 40 pounds on an unusual diet that he came up with, in which you can eat whatever you want as long as each day you drink a cup of unflavored sugar water, at least an hour before or after a meal. The way he theorized that his diet worked was that the carefully-timed sugar water had the effect of reducing the association between calories and flavor, thus lowering your weight set-point and making you uninterested in eating lots of food.

I asked Seth once if he thought I’d lose weight if I were to try his diet in a passive way, drinking the sugar water at the recommended time but not actively trying to reduce my caloric intake. He said he supposed not, that the diet would make it easier to lose weight but I’d probably still have to consciously eat less.

I described Seth’s diet to one of my psychologist colleagues at Columbia and asked what he thought of it. My colleague said he thought it was ridiculous. And, as with the depression treatment, Seth never had an interest in running a controlled trial, even for the purpose of convincing the skeptics.

I had a conversation with Seth about this. He said he’d tried lots of diets and none had worked for him. I suggested that maybe he was just ready at last to eat less and lose weight, and he said he’d been ready for a while but this was the first diet that allowed him to eat less without difficulty. I suggested that maybe the theory underlying Seth’s diet was compelling enough to act as a sort of placebo, motivating him to follow the protocol. Seth responded that other people had tried his diet and lost weight with it. He also reminded me that it’s generally accepted that “diets don’t work” and that people who lose weight while dieting will usually gain it all back. He felt that his diet was different in that it didn’t tell you what foods to eat or how much; rather, it changed your set point so that you didn’t want to eat so much. I found Seth’s arguments persuasive. I didn’t feel that his diet had been proved effective, but I thought it might really work, I told people about it, and I was happy about its success. Unlike my Columbia colleague, I didn’t think the idea was ridiculous.

Media exposure and success

Seth’s breakout success happened gradually, starting with a 2005 article on self-experimentation in Behavioral and Brain Sciences, a journal that publishes long articles followed by short discussions from many experts. Some of the findings from the ten experiments discussed in the article:

Seeing faces in the morning on television decreased mood in the evening and improved mood the next day . . . Standing 8 hours per day reduced early awakening and made sleep more restorative . . . Drinking unflavored fructose water caused a large weight loss that has lasted more than 1 year . . .

As Seth described it, self-experimentation generates new hypotheses and is also an inexpensive way to test and modify them. The article does not seem to have had a huge effect within research psychology (Google Scholar gives it 93 cites) but two of its contributions—the idea of systematic self-experimentation and the weight-loss method—have spread throughout the popular culture in various ways. Seth’s work was featured in a series of increasingly prominent blogs, which led to a newspaper article by the authors of Freakonomics and ultimately a successful diet book (not enough to make Seth rich, I think, but Seth had simple tastes and no desire to be rich, as far as I know). Meanwhile, Seth started a blog of his own which led to a message board for his diet that he told me had thousands of participants.

Seth achieved some measure of internet fame, with fans including Nassim Taleb, Steven Levitt, Dennis Prager, Tucker Max, Tyler Cowen, . . . and me! In retrospect, I don’t think having all this appreciation was good for him. On his blog and elsewhere Seth reported success with various self-experiments, the last of which was a claim of improved brain function after eating half a stick of butter a day. Even while maintaining interest in Seth’s ideas on mood and diet, I was entirely skeptical of his new claims, partly because of his increasing rate of claimed successes. It took Seth close to 10 years of sustained experimentation to fix his sleep problems, but in later years it seemed that all sorts of different things he tried were effective. His apparent success rate was implausibly high. What was going on? One problem is that sleep hours and weight can be measured fairly objectively, whereas if you measure brain function by giving yourself little quizzes, it doesn’t seem hard at all for a bit of unconscious bias to drive all your results. I also wonder if Seth’s blog audience was a problem: if you have people cheering on your every move, it can be that much easier to fool yourself.

Seth also started to go down some internet rabbit holes. On one hand, he was a left-wing Berkeley professor who supported universal health care, Amnesty International, and other liberal causes. On the other hand, his paleo-diet enthusiasm brought him close to various internet right-wingers, and he was into global warming denial and kinda sympathetic to Holocaust denial, not because he was a Nazi or anything but just because he had a distrust-of-authority thing going on. I guess that if he’d been an adult back in the 1950s and 1960s he would’ve been on the extreme left, but more recently it’s been the far right where the rebels are hanging out. Seth also had sympathy for some absolutely ridiculous and innumerate research on sex ratios and absolutely loved the since-discredited work of food behavior researcher Brian Wansink; see here and here. The point here is not that Seth believed things that turned out to be false (that happens to all of us) but rather that he had a soft spot for extreme claims that were wrapped in the language of science.

Back to Shangri-La

A few years ago, Seth passed away, and I didn’t think of him too often, but then a couple of years ago my doctor told me that my cholesterol level was too high. He prescribed a pill, which I’m still taking every day, and he told me to switch to a mostly-plant diet and lose a bunch of weight.

My first thought was to try the Shangri-La diet: that cup of unflavored sugar water, at least an hour before or after a meal. Or maybe I did the spoonful of unflavored olive oil, I can’t remember which. Anyway, I tried it for a few days, also following the advice to eat less. And then after a few days, I thought: if the point is to eat less, why not just do that? So that’s what I did. No sugar water or olive oil needed.

What’s the point of this story? Not that losing the weight was easy for me. For a few years before that fateful conversation, my doctor had been bugging me to lose weight, and I’d vaguely wanted that to happen, but it hadn’t. What worked was me having this clear goal and motivation. And it’s not like I’m starving all the time. I’m fine; I just changed my eating patterns, and I take in a lot less energy every day.

But here’s a funny thing. Suppose I’d stuck with the sugar water and everything else had been the same. Then I’d have lost all this weight, exactly when I’d switched to the new diet. I’d be another enthusiastic Shangri-La believer, and I’d be telling you, truthfully, that only since switching to that diet had I been able to comfortably eat less. But I didn’t stick with Shangri-La and I lost the weight anyway, so I won’t make that attribution.

OK, so after that experience I had a lot less belief in Seth’s diet. The flip side of being convinced by his earlier self-experiment was becoming unconvinced after my own self-experiment.

And that’s where I stood until I saw this post at the blog Slime Mold Time Mold about informal experimentation:

For the potato diet, we started with case studies like Andrew Taylor and Penn Jillette; we recruited some friends to try nothing but potatoes for several days; and one of the SMTM authors tried the all-potato diet for a couple weeks.

For the potassium trial, two SMTM hive mind members tried the low-dose potassium protocol for a couple of weeks and lost weight without any negative side effects. Then we got a couple of friends to try it for just a couple of days to make sure that there weren’t any side effects for them either.

For the half-tato diet, we didn’t explicitly organize things this way, but we looked at three very similar case studies that, taken together, are essentially an N = 3 pilot of the half-tato diet protocol. No idea if the half-tato effect will generalize beyond Nicky Case and M, but the fact that it generalizes between them is pretty interesting. We also happened to know about a couple of other friends who had also tried versions of the half-tato diet with good results.

My point here is not to delve into the details of these new diets, but rather to point out that they are like the Shangri-La diet in being different from other diets, associated with some theory, evaluated through before-after studies on some people who wanted to lose weight, and reported as successes.

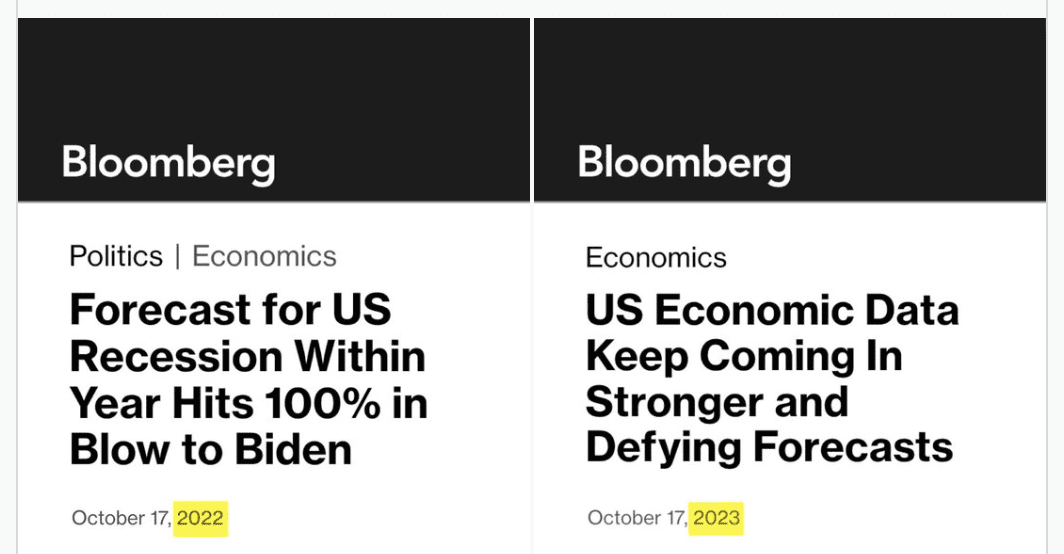

At this point, though, my conclusion is not that unflavored sugar water is effective in making it easy to lose weight, or that unflavored oil works, or that potatoes work, or that potassium works. Rather, the hypothesis that’s most plausible to me is that, if you’re at the right stage of motivation, anything can work.

Or, to put it another way, I now believe that the observed effect of the Shangri-La diet, the potato diet, etc., comes from a mixture of placebo and selection. The placebo is that just about any gimmick can help you lose weight, and keep the weight off, if it somehow motivates you to eat less. The selection is that, once you’re ready to try something like this diet, you might be ready to eat less.

But what about “diets don’t work”? I guess that diets don’t work for most people at most times. But the people trying these diets are not “most people at most times.” They’re people with a high motivation to eat less and lose weight.
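To make the selection part of this argument concrete, here is a toy simulation. It is only a sketch under made-up assumptions: motivation is a standard normal score, weight change depends on motivation and noise but not on which diet is followed, and only people above an arbitrary motivation threshold try a diet at all. The function name, the diet labels, and every number are hypothetical choices for illustration, not estimates from any data.

```python
import random
import statistics


def simulate(n_people=10_000, threshold=1.5, seed=0):
    """Toy model of selection in before-after diet reports.

    Assumptions (all invented for illustration):
    - each person has a motivation score drawn from a standard normal;
    - weight change in pounds is -5 * motivation plus noise, so the
      diet itself has NO effect in this model;
    - only people whose motivation exceeds `threshold` try a diet,
      and they pick one of the diets at random.
    """
    rng = random.Random(seed)
    diets = ["sugar water", "potato", "potassium"]
    all_changes = []
    changes_by_diet = {d: [] for d in diets}

    for _ in range(n_people):
        motivation = rng.gauss(0, 1)
        # Weight change depends on motivation only, never on the diet label.
        change = -5.0 * motivation + rng.gauss(0, 5)
        all_changes.append(change)
        if motivation > threshold:
            changes_by_diet[rng.choice(diets)].append(change)

    population_mean = statistics.mean(all_changes)
    diet_means = {d: statistics.mean(v) for d, v in changes_by_diet.items()}
    return population_mean, diet_means


if __name__ == "__main__":
    population_mean, diet_means = simulate()
    print(f"whole population: {population_mean:+.1f} lb")
    for diet, mean_change in diet_means.items():
        print(f"tried {diet}: {mean_change:+.1f} lb")
```

In this model the average person's weight does not change, yet every diet shows a sizable average loss among the people who tried it, because trying a diet is itself a marker of high motivation. The sketch leaves out the placebo part of the story, in which the gimmick itself raises motivation.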

I’m not saying I have an ironclad case here. I’m pretty much now in the position of my Columbia colleague who felt that there’s no good reason to believe that Seth’s diet is more effective than any other arbitrary series of rules that somewhere includes the suggestion to eat less. And, yes, I have the same impression of the potato diet and the other ideas mentioned above. It’s just funny that it took so long for me to reach this position.

Back to Seth

I wouldn’t say the internet killed Seth Roberts, but ultimately I don’t think it did him any favors for him to become an internet hero, in the same way that it’s not always good for an ungrounded person to become an academic hero, or an athletic hero, or a musical hero, or a literary hero, or a military hero, or any other kind of hero. The stuff that got you to heroism can be a great service to the world, but what comes next can be a challenge.

Seth ended up believing in his own hype. In this case, the hype was not that he was an amazing genius; rather, the hype was about his method, the idea that he had discovered modern self-experimentation (to the extent that this rediscovery can be attributed to anybody, it should be to Seth’s undergraduate adviser, Allen Neuringer, in this article from 1981). Maybe even without his internet fame Seth would’ve gone off the deep end and started to believe he was regularly making major discoveries; I don’t know.

From a scientific standpoint, Seth’s writings are an example of the principle that honesty and transparency are not enough. He clearly described what he did, but his experiments got to be so flawed as to be essentially useless.

After I posted my obituary of Seth (from which I took much of the beginning of this post), there were many moving tributes in the comments, and I concluded by writing, “It is good that he found an online community of people who valued him.” That’s how I felt at the time, but in retrospect, maybe not. If I could’ve done it all over again, I never would’ve promoted his diet, a promotion that led to all the rest.

I’d guess that the wide dissemination of Seth’s ideas was a net benefit to the world. Even if his diet idea is bogus, it seems to have made a difference to a lot of people. And even if the discoveries he reported from his self-experimentation (eating half a stick of butter a day improving brain functioning and all the rest) were nothing but artifacts of his hopeful measurement protocols, the idea of self-experimentation was empowering to people, and I’m assuming that even his true believers (other than himself) weren’t actually doing the butter thing.

Setting aside the effects on others, though, I don’t think that this online community was good for Seth in his own work or for his personal life. In some ways he was ahead of his time, as nowadays we’re hearing a lot about people getting sucked into cult-like vortexes of misinformation.

P.S. Lots of discussion in comments, including this from the Slime Mold Time Mold bloggers.