A few months ago I sent the following message to some people:

Dear philosophically-inclined colleagues:

I’d like to organize an online discussion of Deborah Mayo’s new book.

The table of contents and some of the book are here at Google books, also in the attached pdf and in this post by Mayo.

I think that many, if not all, of Mayo’s points in her Excursion 4 are answered by my article with Hennig here.

What I was thinking for this discussion is that if you’re interested you can write something, either a review of Mayo’s book (if you happen to have a copy of it) or a review of the posted material, or just your general thoughts on the topic of statistical inference as severe testing.

I’m hoping to get this all done this month, because it’s all informal and what’s the point of dragging it out, right? So if you’d be interested in writing something on this that you’d be willing to share with the world, please let me know. It should be fun, I hope!

I did this in consultation with Deborah Mayo, and I just sent this email to a few people (so if you were not included, please don’t feel left out! You have a chance to participate right now!), because our goal here was to get the discussion going. The idea was to get some reviews, and this could spark a longer discussion here in the comments section.

And, indeed, we received several responses. And I’ll also point you to my paper with Shalizi on the philosophy of Bayesian statistics, with discussions by Mark Andrews and Thom Baguley, Denny Borsboom and Brian Haig, John Kruschke, Deborah Mayo, Stephen Senn, and Richard D. Morey, Jan-Willem Romeijn and Jeffrey N. Rouder.

Also relevant is this summary by Mayo of some examples from her book.

And now on to the reviews.

Brian Haig

I’ll start with psychology researcher Brian Haig, because he’s a strong supporter of Mayo’s message and his review also serves as an introduction and summary of her ideas. The review itself is a few pages long, so I will quote from it, interspersing some of my own reaction:

Deborah Mayo’s ground-breaking book, Error and the growth of statistical knowledge (1996) . . . presented the first extensive formulation of her error-statistical perspective on statistical inference. Its novelty lay in the fact that it employed ideas in statistical science to shed light on philosophical problems to do with evidence and inference.

By contrast, Mayo’s just-published book, Statistical inference as severe testing (SIST) (2018), focuses on problems arising from statistical practice (“the statistics wars”), but endeavors to solve them by probing their foundations from the vantage points of philosophy of science, and philosophy of statistics. The “statistics wars” to which Mayo refers concern fundamental debates about the nature and foundations of statistical inference. These wars are longstanding and recurring. Today, they fuel the ongoing concern many sciences have with replication failures, questionable research practices, and the demand for an improvement of research integrity. . . .

For decades, numerous calls have been made for replacing tests of statistical significance with alternative statistical methods. The new statistics, a package deal comprising effect sizes, confidence intervals, and meta-analysis, is one reform movement that has been heavily promoted in psychological circles (Cumming, 2012; 2014) as a much needed successor to null hypothesis significance testing (NHST) . . .

The new statisticians recommend replacing NHST with their favored statistical methods by asserting that it has several major flaws. Prominent among them are the familiar claims that NHST encourages dichotomous thinking, and that it comprises an indefensible amalgam of the Fisherian and Neyman-Pearson schools of thought. However, neither of these features applies to the error-statistical understanding of NHST. . . .

There is a double irony in the fact that the new statisticians criticize NHST for encouraging simplistic dichotomous thinking: As already noted, such thinking is straightforwardly avoided by employing tests of statistical significance properly, whether or not one adopts the error-statistical perspective. For another, the adoption of standard frequentist confidence intervals in place of NHST forces the new statisticians to engage in dichotomous thinking of another kind: A parameter estimate is either inside, or outside, its confidence interval.

At this point I’d like to interrupt and say that a confidence or interval (or simply an estimate with standard error) can be used to give a sense of inferential uncertainty. There is no reason for dichotomous thinking when confidence intervals, or uncertainty intervals, or standard errors, are used in practice.

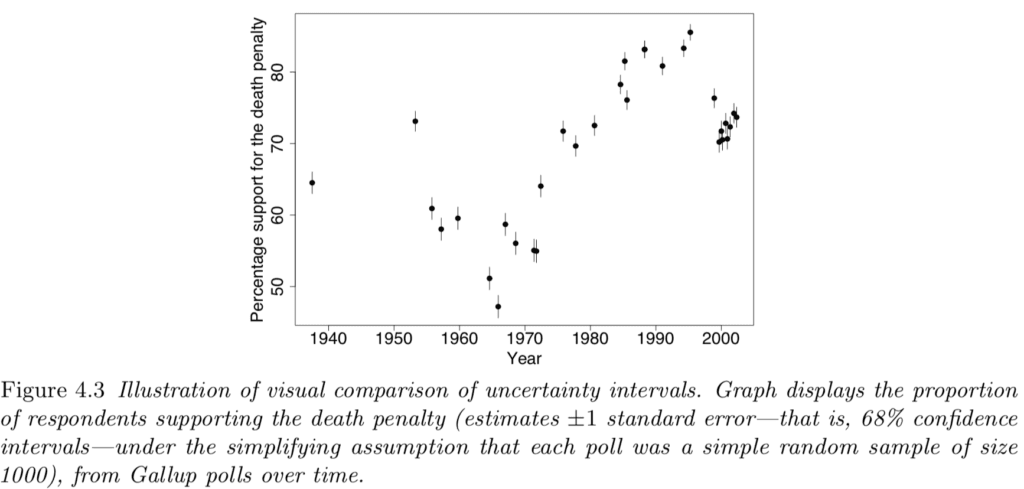

Here’s a very simple example from my book with Jennifer:

This graph has a bunch of estimates +/- standard errors, that is, 68% confidence intervals, with no dichotomous thinking in sight. In contrast, testing some hypothesis of no change over time, or no change during some period of time, would make no substantive sense and would just be an invitation to add noise to our interpretation of these data.

OK, to continue with Haig’s review:

Error-statisticians have good reason for claiming that their reinterpretation of frequentist confidence intervals is superior to the standard view. The standard account of confidence intervals adopted by the new statisticians prespecifies a single confidence interval (a strong preference for 0.95 in their case). . . . By contrast, the error-statistician draws inferences about each of the obtained values according to whether they are warranted, or not, at different severity levels, thus leading to a series of confidence intervals. Crucially, the different values will not have the same probative force. . . . Details on the error-statistical conception of confidence intervals can be found in SIST (pp. 189-201), as well as Mayo and Spanos (2011) and Spanos (2014). . . .

SIST makes clear that, with its error-statistical perspective, statistical inference can be employed to deal with both estimation and hypothesis testing problems. It also endorses the view that providing explanations of things is an important part of science.

Another interruption from me . . . I just want to plug my paper with Guido Imbens, Why ask why? Forward causal inference and reverse causal questions, in which we argue that Why questions can be interpreted as model checks, or, one might say, hypothesis tests—but tests of hypotheses of interest, not of straw-man null hypotheses. Perhaps there’s some connection between Mayo’s ideas and those of Guido and me on this point.

Haig continues with a discussion of Bayesian methods, including those of my collaborators and myself:

One particularly important modern variant of Bayesian thinking, which receives attention in SIST, is the falsificationist Bayesianism of . . . Gelman and Shalizi (2013). Interestingly, Gelman regards his Bayesian philosophy as essentially error-statistical in nature – an intriguing claim, given the anti-Bayesian preferences of both Mayo and Gelman’s co-author, Cosma Shalizi. . . . Gelman acknowledges that his falsificationist Bayesian philosophy is underdeveloped, so it will be interesting to see how its further development relates to Mayo’s error-statistical perspective. It will also be interesting to see if Bayesian thinkers in psychology engage with Gelman’s brand of Bayesian thinking. Despite the appearance of his work in a prominent psychology journal, they have yet to do so. . . .

Hey, not quite! I’ve done a lot of collaboration with psychologists; see here and search on “Iven Van Mechelen” and “Francis Tuerlinckx”—but, sure, I recognize that our Bayesian methods, while mainstream in various fields including ecology and political science, are not yet widely used in psychology.

Haig concludes:

From a sympathetic, but critical, reading of Popper, Mayo endorses his strategy of developing scientific knowledge by identifying and correcting errors through strong tests of scientific claims. . . . A heartening attitude that comes through in SIST is the firm belief that a philosophy of statistics is an important part of statistical thinking. This contrasts markedly with much of statistical theory, and most of statistical practice. Given that statisticians operate with an implicit philosophy, whether they know it or not, it is better that they avail themselves of an explicitly thought-out philosophy that serves practice in useful ways.

I agree, very much.

To paraphrase Bill James, the alternative to good philosophy is not “no philosophy,” it’s “bad philosophy.” I’ve spent too much time seeing Bayesians avoid checking their models out of a philosophical conviction that subjective priors cannot be empirically questioned, and too much time seeing non-Bayesians produce ridiculous estimates that could have been avoided by using available outside information. There’s nothing so practical as good practice, but good philosophy can facilitate both the development and acceptance of better methods.

E. J. Wagenmakers

I’ll follow up with a very short review, or, should I say, reaction-in-place-of-a-review, from psychometrician E. J. Wagenmakers:

I cannot comment on the contents of this book, because doing so would require me to read it, and extensive prior knowledge suggests that I will violently disagree with almost every claim that is being made. In my opinion, the only long-term hope for vague concepts such as the “severity” of a test is to embed them within a rational (i.e., Bayesian) framework, but I suspect that this is not the route that the author wishes to pursue. Perhaps this book is comforting to those who have neither the time nor the desire to learn Bayesian inference, in a similar way that homeopathy provides comfort to patients with a serious medical condition.

You don’t have to agree with E. J. to appreciate his honesty!

Art Owen

Coming from a different perspective is theoretical statistician Art Owen, whose review has some mathematical formulas—nothing too complicated, but not so easy to display in html, so I’ll just link to the pdf and share some excerpts:

There is an emphasis throughout on the importance of severe testing. It has long been known that a test that fails to reject H0 is not very conclusive if it had low power to reject H0. So I wondered whether there was anything more to the severity idea than that. After some searching I found on page 343 a description of how the severity idea differs from the power notion. . . .

I think that it might be useful in explaining a failure to reject H0 as the sample size being too small. . . . it is extremely hard to measure power post hoc because there is too much uncertainty about the effect size. Then, even if you want it, you probably cannot reliably get it. I think severity is likely to be in the same boat. . . .

I believe that the statistical problem from incentives is more severe than choice between Bayesian and frequentist methods or problems with people not learning how to use either kind of method properly. . . . We usually teach and do research assuming a scientific loss function that rewards being right. . . . In practice many people using statistics are advocates. . . . The loss function strongly informs their analysis, be it Bayesian or frequentist. The scientist and advocate both want to minimize their expected loss. They are led to different methods. . . .

I appreciate Owen’s efforts to link Mayo’s words to the equations that we would ultimately need to implement, or evaluate, her ideas in statistics.

Robert Cousins

Physicist Robert Cousins did not have the time to write a comment on Mayo’s book, but he did point us to this monograph he wrote on the foundations of statistics, which has lots of interesting stuff but is unfortunately a bit out of date when it comes to the philosophy of Bayesian statistics, which he ties in with subjective probability. (For a corrective, see my aforementioned article with Hennig.)

In his email to me, Cousins also addressed issues of statistical and practical significance:

Our [particle physicists’] problems and the way we approach them are quite different from some other fields of science, especially social science. As one example, I think I recall reading that you do not mind adding a parameter to your model, whereas adding (certain) parameters to our models means adding a new force of nature (!) and a Nobel Prize if true. As another example, a number of statistics papers talk about how silly it is to claim a 10^{⁻4} departure from 0.5 for a binomial parameter (ESP examples, etc), using it as a classic example of the difference between nominal (probably mismeasured) statistical significance and practical significance. In contrast, when I was a grad student, a famous experiment in our field measured a 10^{⁻4} departure from 0.5 with an uncertainty of 10% of itself, i.e., with an uncertainty of 10^{⁻5}. (Yes, the order or 10^10 Bernoulli trials—counting electrons being scattered left or right.) This led quickly to a Nobel Prize for Steven Weinberg et al., whose model (now “Standard”) had predicted the effect.

I replied:

This interests me in part because I am a former physicist myself. I have done work in physics and in statistics, and I think the principles of statistics that I have applied to social science, also apply to physical sciences. Regarding the discussion of Bem’s experiment, what I said was not that an effect of 0.0001 is unimportant, but rather that if you were to really believe Bem’s claims, there could be effects of +0.0001 in some settings, -0.002 in others, etc. If this is interesting, fine: I’m not a psychologist. One of the key mistakes of Bem and others like him is to suppose that, even if they happen to have discovered an effect in some scenario, there is no reason to suppose this represents some sort of universal truth. Humans differ from each other in a way that elementary particles to not.

And Cousins replied:

Indeed in the binomial experiment I mentioned, controlling unknown systematic effects to the level of 10^{-5}, so that what they were measuring (a constant of nature called the Weinberg angle, now called the weak mixing angle) was what they intended to measure, was a heroic effort by the experimentalists.

Stan Young

Stan Young, a statistician who’s worked in the pharmaceutical industry, wrote:

I’ve been reading at the Mayo book and also pestering where I think poor statistical practice is going on. Usually the poor practice is by non-professionals and usually it is not intentionally malicious however self-serving. But I think it naive to think that education is all that is needed. Or some grand agreement among professional statisticians will end the problems.

There are science crooks and statistical crooks and there are no cops, or very few.

That is a long way of saying, this problem is not going to be solved in 30 days, or by one paper, or even by one book or by three books! (I’ve read all three.)

I think a more open-ended and longer dialog would be more useful with at least some attention to willful and intentional misuse of statistics.

Chambers C. The Seven Deadly Sins of Psychology. New Jersey: Princeton University Press, 2017.

Harris R. Rigor mortis: how sloppy science creates worthless cures, crushes hope, and wastes billions. New York: Basic books, 2017.

Hubbard R. Corrupt Research. London: Sage Publications, 2015.

Christian Hennig

Hennig, a statistician and my collaborator on the Beyond Subjective and Objective paper, send in two reviews of Mayo’s book.

Here are his general comments:

What I like about Deborah Mayo’s “Statistical Inference as Severe Testing”

Before I start to list what I like about “Statistical Inference as Severe Testing”. I should say that I don’t agree with everything in the book. In particular, as a constructivist I am skeptical about the use of terms like “objectivity”, “reality” and “truth” in the book, and I think that Mayo’s own approach may not be able to deliver everything that people may come to believe it could, from reading the book (although Mayo could argue that overly high expectations could be avoided by reading carefully).

So now, what do I like about it?

1) I agree with the broad concept of severity and severe testing. In order to have evidence for a claim, it has to be tested in ways that would reject the claim with high probability if it indeed were false. I also think that it makes a lot of sense to start a philosophy of statistics and a critical discussion of statistical methods and reasoning from this requirement. Furthermore, throughout the book Mayo consistently argues from this position, which makes the different “Excursions” fit well together and add up to a consistent whole.

2) I get a lot out of the discussion of the philosophical background of scientific inquiry, of induction, probabilism, falsification and corroboration, and their connection to statistical inference. I think that it makes sense to connect Popper’s philosophy to significance tests in the way Mayo does (without necessarily claiming that this is the only possible way to do it), and I think that her arguments are broadly convincing at least if I take a realist perspective of science (which as a constructivist I can do temporarily while keeping the general reservation that this is about a specific construction of reality which I wouldn’t grant absolute authority).

3) I think that Mayo does by and large a good job listing much of the criticism that has been raised in the literature against significance testing, and she deals with it well. Partly she criticises bad uses of significance testing herself by referring to the severity requirement, but she also defends a well understood use in a more general philosophical framework of testing scientific theories and claims in a piecemeal manner. I find this largely convincing, conceding that there is a lot of detail and that I may find myself in agreement with the occasional objection against the odd one of her arguments.

4) The same holds for her comprehensive discussion of Bayesian/probabilist foundations in Excursion 6. I think that she elaborates issues and inconsistencies in the current use of Bayesian reasoning very well, maybe with the odd exception.

5) I am in full agreement with Mayo’s position that when using probability modelling, it is important to be clear about the meaning of the computed probabilities. Agreement in numbers between different “camps” isn’t worth anything if the numbers mean different things. A problem with some positions that are sold as “pragmatic” these days is that often not enough care is put into interpreting what the results mean, or even deciding in advance what kind of interpretation is desired.

6) As mentioned above, I’m rather skeptical about the concept of objectivity and about an all too realist interpretation of statistical models. I think that in Excursion 4 Mayo manages to explain in a clear manner what her claims of “objectivity” actually mean, and she also appreciates more clearly than before the limits of formal models and their distance to “reality”, including some valuable thoughts on what this means for model checking and arguments from models.

So overall it was a very good experience to read her book, and I think that it is a very valuable addition to the literature on foundations of statistics.

Hennig also sent some specific discussion of one part of the book:

1 Introduction

This text discusses parts of Excursion 4 of Mayo (2018) titled “Objectivity and Auditing”. This starts with the section title “The myth of ‘The myth of objectivity'”. Mayo advertises objectivity in science as central and as achievable.

In contrast, in Gelman and Hennig (2017) we write: “We argue that the words ‘objective’ and ‘subjective’ in statistics discourse are used in a mostly unhelpful way, and we propose to replace each of them with broader collections of attributes.” I will here outline agreement and disagreement that I have with Mayo’s Excursion 4, and raise some issues that I think require more research and discussion.

2 Pushback and objectivity

The second paragraph of Excursion 4 states in bold letters: “The Key Is Getting Pushback”, and this is the major source of agreement between Mayo’s and my views (*). I call myself a constructivist, and this is about acknowledging the impact of human perception, action, and communication on our world-views, see Hennig (2010). However, it is an almost universal experience that we cannot construct our perceived reality as we wish, because we experience “pushback” from what we perceive as “the world outside”. Science is about allowing us to deal with this pushback in stable ways that are open to consensus. A major ingredient of such science is the “Correspondence (of scientific claims) to observable reality”, and in particular “Clear conditions for reproduction, testing and falsification”, listed as “Virtue 4/4(b)” in Gelman and Hennig (2017). Consequently, there is no disagreement with much of the views and arguments in Excursion 4 (and the rest of the book). I actually believe that there is no contradiction between constructivism understood in this way and Chang’s (2012) “active scientific realism” that asks for action in order to find out about “resistance from reality”, or in other words, experimenting, experiencing and learning from error.

If what is called “objectivity” in Mayo’s book were the generally agreed meaning of the term, I would probably not have a problem with it. However, there is a plethora of meanings of “objectivity” around, and on top of that the term is often used as a sales pitch by scientists in order to lend authority to findings or methods and often even to prevent them from being questioned. Philosophers understand that this is a problem but are mostly eager to claim the term anyway; I have attended conferences on philosophy of science and heard a good number of talks, some better, some worse, with messages of the kind “objectivity as understood by XYZ doesn’t work, but here is my own interpretation that fixes it”. Calling frequentist probabilities “objective” because they refer to the outside world rather than epsitemic states, and calling a Bayesian approach “objective” because priors are chosen by general principles rather than personal beliefs are in isolation also legitimate meanings of “objectivity”, but these two and Mayo’s and many others (see also the Appendix of Gelman and Hennig, 2017) differ. The use of “objectivity” in public and scientific discourse is a big muddle, and I don’t think this will change as a consequence of Mayo’s work. I prefer stating what we want to achieve more precisely using less loaded terms, which I think Mayo has achieved well not by calling her approach “objective” but rather by explaining in detail what she means by that.

3. Trust in models?

In the remainder, I will highlight some limitations of Mayo’s “objectivity” that are mainly connected to Tour IV on objectivity, model checking and whether it makes sense to say that “all models are false”. Error control is central for Mayo’s objectivity, and this relies on error probabilities derived from probability models. If we want to rely on these error probabilities, we need to trust the models, and, very appropriately, Mayo devotes Tour IV to this issue. She concedes that all models are false, but states that this is rather trivial, and what is really relevant when we use statistical models for learning from data is rather whether the models are adequate for the problem we want to solve. Furthermore, model assumptions can be tested and it is crucial to do so, which, as follows from what was stated before, does not mean to test whether they are really true but rather whether they are violated in ways that would destroy the adequacy of the model for the problem. So far I can agree. However, I see some difficulties that are not addressed in the book, and mostly not elsewhere either. Here is a list.

3.1. Adaptation of model checking to the problem of interest

As all models are false, it is not too difficult to find model assumptions that are violated but don’t matter, or at least don’t matter in most situations. The standard example would be the use of continuous distributions to approximate distributions of essentially discrete measurements. What does it mean to say that a violation of a model assumption doesn’t matter? This is not so easy to specify, and not much about this can be found in Mayo’s book or in the general literature. Surely it has to depend on what exactly the problem of interest is. A simple example would be to say that we are interested in statements about the mean of a discrete distribution, and then to show that estimation or tests of the mean are very little affected if a certain continuous approximation is used. This is reassuring, and certain other issues could be dealt with in this way, but one can ask harder questions. If we approximate a slightly skew distribution by a (unimodal) symmetric one, are we really interested in the mean, the median, or the mode, which for a symmetric distribution would be the same but for the skew distribution to be approximated would differ? Any frequentist distribution is an idealisation, so do we first need to show that it is fine to approximate a discrete non-distribution by a discrete distribution before worrying whether the discrete distribution can be approximated by a continuous one? (And how could we show that?) And so on.

3.2. Severity of model misspecification tests

Following the logic of Mayo (2018), misspecification tests need to be severe in ordert to fulfill their purpose; otherwise data could pass a misspecification test that would be of little help ruling out problematic model deviations. I’m not sure whether there are any results of this kind, be it in Mayo’s work or elsewhere. I imagine that if the alternative is parametric (for example testing independence against a standard time series model) severity can occasionally be computed easily, but for most model misspecification tests it will be a hard problem.

3.3. Identifiability issues, and ruling out models by other means than testing

Not all statistical models can be distinguished by data. For example, even with arbitrarily large amounts of data only lower bounds of the number of modes can be estimated; an assumption of unimodality can strictly not be tested (Donoho 1988). Worse, only regular but not general patterns of dependence can be distinguished from independence by data; any non-i.i.d. pattern can be explained by either dependence or non-identity of distributions, and telling these apart requires constraints on dependence and non-identity structures that can itself not be tested on the data (in the example given in 4.11 of Mayo, 2018, all tests discover specific regular alternatives to the model assumption). Given that this is so, the question arises on which grounds we can rule out irregular patterns (about the simplest and most silly one is “observations depend in such a way that every observation determines the next one to be exactly what it was observed to be”) by other means than data inspection and testing. Such models are probably useless, however if they were true, they would destroy any attempt to find “true” or even approximately true error probabilities.

3.4. Robustness against what cannot be ruled out

The above implies that certain deviations from the model assumptions cannot be ruled out, and then one can ask: How robust is the substantial conclusion that is drawn from the data against models different from the nominal one, which could not be ruled out by misspecification testing, and how robust are error probabilities? The approaches of standard robust statistics probably have something to contribute in this respect (e.g., Hampel et al., 1986), although their starting point is usually different from “what is left after misspecification testing”. This will depend, as everything, on the formulation of the “problem of interest”, which needs to be defined not only in terms of the nominal parametric model but also in terms of the other models that could not be rules out.

3.5. The effect of preliminary model checking on model-based inference

Mayo is correctly concerned about biasing effects of model selection on inference. Deciding what model to use based on misspecification tests is some kind of model selection, so it may bias inference that is made in case of passing misspecification tests. One way of stating the problem is to realise that in most cases the assumed model conditionally on having passed a misspecification test does no longer hold. I have called this the “goodness-of-fit paradox” (Hennig, 2007); the issue has been mentioned elsewhere in the literature. Mayo has argued that this is not a problem, and this is in a well defined sense true (meaning that error probabilities derived from the nominal model are not affected by conditioning on passing a misspecification test) if misspecification tests are indeed “independent of (or orthogonal to) the primary question at hand” (Mayo 2018, p. 319). The problem is that for the vast majority of misspecification tests independence/orthogonality does not hold, at least not precisely. So the actual effect of misspecification testing on model-based inference is a matter that requires to be investigated on a case-by-case basis. Some work of this kind has been done or is currently done; results are not always positive (an early example is Easterling and Anderson 1978).

4 Conclusion

The issues listed in Section 3 are in my view important and worthy of investigation. Such investigation has already been done to some extent, but there are many open problems. I believe that some of these can be solved, some are very hard, and some are impossible to solve or may lead to negative results (particularly connected to lack of identifiability). However, I don’t think that these issues invalidate Mayo’s approach and arguments; I expect at least the issues that cannot be solved to affect in one way or another any alternative approach. My case is just that methodology that is “objective” according to Mayo comes with limitations that may be incompatible with some other peoples’ ideas of what “objectivity” should mean (in which sense it is in good company though), and that the falsity of models has some more cumbersome implications than Mayo’s book could make the reader believe.

(*) There is surely a strong connection between what I call “my” view here with the collaborative position in Gelman and Hennig (2017), but as I write the present text on my own, I will refer to “my” position here and let Andrew Gelman speak for himself.

References:

Chang, H. (2012) Is Water H2O? Evidence, Realism and Pluralism. Dordrecht: Springer.Donoho, D. (1988) One-Sided Inference about Functionals of a Density. Annals of Statistics 16, 1390-1420.

Easterling, R. G. and Anderson, H.E. (1978) The effect of preliminary normality goodness of fit tests on subsequent inference. Journal of Statistical Computation and Simulation 8, 1-11.

Gelman, A. and Hennig, C. (2017) Beyond subjective and objective in statistics (with discussion). Journal of the Royal Statistical Society, Series A 180, 967–1033.

Hampel, F. R., Ronchetti, E. M., Rousseeuw, P. J. and Stahel, W. A. (1986) Robust statistics. New York: Wiley.

Hennig, C. (2010) Mathematical models and reality: a constructivist perspective. Foundations of Science 15, 29–48.

Hennig, C. (2007) Falsification of propensity models by statistical tests and the goodness-of-fit paradox. Philosophia Mathematica 15, 166-192.

Mayo, D. G. (2018) Statistical Inference as Severe Testing. Cambridge University Press.

My own reactions

I’m still struggling with the key ideas of Mayo’s book. (Struggling is a good thing here, I think!)

First off, I appreciate that Mayo takes my own philosophical perspective seriously—I’m actually thrilled to be taken seriously, after years of dealing with a professional Bayesian establishment tied to naive (as I see it) philosophies of subjective or objective probabilities, and anti-Bayesians not willing to think seriously about these issues at all—and I don’t think any of these philosophical issues are going to be resolved any time soon. I say this because I’m so aware of the big Cantor-size hole in the corner of my own philosophy of statistical learning.

In statistics—maybe in science more generally—philosophical paradoxes are sometimes resolved by technological advances. Back when I was a student I remember all sorts of agonizing over the philosophical implications of exchangeability, but now that we can routinely fit varying-intercept, varying-slope models with nested and non-nested levels and (we’ve finally realized the importance of) informative priors on hierarchical variance parameters, a lot of the philosophical problems have dissolved; they’ve become surmountable technical problems. (For example: should we consider a group of schools, or states, or hospitals, as “truly exchangeable”? If not, there’s information distinguishing them, and we can include such information as group-level predictors in our multilevel model. Problem solved.)

Rapid technological progress resolves many problems in ways that were never anticipated. (Progress creates new problems too; that’s another story.) I’m not such an expert on deep learning and related methods for inference and prediction—but, again, I think these will change our perspective on statistical philosophy in various ways.

This is all to say that any philosophical perspective is time-bound. On the other hand, I don’t think that Popper/Kuhn/Lakatos will ever be forgotten: this particular trinity of twentieth-century philosophy of science has forever left us in a different place than where we were, a hundred years ago.

To return to Mayo’s larger message: I agree with Hennig that Mayo is correct to place evaluation at the center of statistics.

I’ve thought a lot about this, in many years of teaching statistics to graduate students. In a class for first-year statistics Ph.D. students, you want to get down to the fundamentals.

What’s the most fundamental thing in statistics? Experimental design? No. You can’t really pick your design until you have some sense of how you will analyze the data. (This is the principle of the great Raymond Smullyan: To understand the past, we must first know the future.) So is data analysis the most fundamental thing? Maybe so, but what method of data analysis? Last I heard, there are many schools. Bayesian data analysis, perhaps? Not so clear; what’s the motivation for modeling everything probabilistically? Sure, it’s coherent—but so is some mental patient who thinks he’s Napoleon and acts daily according to that belief. We can back into a more fundamental, or statistical, justification of Bayesian inference and hierarchical modeling by first considering the principle of external validation of predictions, then showing (both empirically and theoretically) that a hierarchical Bayesian approach performs well based on this criterion—and then following up with the Jaynesian point that, when Bayesian inference fails to perform well, this recognition represents additional information that can and should be added to the model. All of this is the theme of the example in section 7 of BDA3—although I have the horrible feeling that students often don’t get the point, as it’s easy to get lost in all the technical details of the inference for the hyperparameters in the model.

Anyway, to continue . . . it still seems to me that the most foundational principles of statistics are frequentist. Not unbiasedness, not p-values, and not type 1 or type 2 errors, but frequency properties nevertheless. Statements about how well your procedure will perform in the future, conditional on some assumptions of stationarity and exchangeability (analogous to the assumption in physics that the laws of nature will be the same in the future as they’ve been in the past—or, if the laws of nature are changing, that they’re not changing very fast! We’re in Cantor’s corner again).

So, I want to separate the principle of frequency evaluation—the idea that frequency evaluation and criticism represents one of the three foundational principles of statistics (with the other two being mathematical modeling and the understanding of variation)—from specific statistical methods, whether they be methods that I like (Bayesian inference, estimates and standard errors, Fourier analysis, lasso, deep learning, etc.) or methods that I suspect have done more harm than good or, at the very least, have been taken too far (hypothesis tests, p-values, so-called exact tests, so-called inverse probability weighting, etc.). We can be frequentists, use mathematical models to solve problems in statistical design and data analysis, and engage in model criticism, without making decisions based on type 1 error probabilities etc.

To say it another way, bringing in the title of the book under discussion: I would not quite say that statistical inference is severe testing, but I do think that severe testing is a crucial part of statistics. I see statistics as an unstable mixture of inference conditional on a model (“normal science”) and model checking (“scientific revolution”). Severe testing is fundamental, in that prospect of revolution is a key contributor to the success of normal science. We lean on our models in large part because they have been, and will continue to be, put to the test. And we choose our statistical methods in large part because, under certain assumptions, they have good frequency properties.

And now on to Mayo’s subtitle. I don’t think her, or my, philosophical perspective will get us “beyond the statistics wars” by itself—but perhaps it will ultimately move us in this direction, if practitioners and theorists alike can move beyond naive confirmationist reasoning toward an embrace of variation and acceptance of uncertainty.

I’ll summarize by expressing agreement with Mayo’s perspective that frequency evaluation is fundamental, while disagreeing with her focus on various crude (from my perspective) ideas such as type 1 errors and p-values. When it comes to statistical philosophy, I’d rather follow Laplace, Jaynes, and Box, rather than Neyman, Wald, and Savage. Phony Bayesmania has bitten the dust.

Thanks

Let me again thank Haig, Wagenmakers, Owen, Cousins, Young, and Hennig for their discussions. I expect that Mayo will respond to these, and also to any comments that follow in this thread, once she has time to digest it all.

P.S. And here’s a review from Christian Robert.

As I might be the first commenting, I would like to thank you for organizing a reasonable number of thoughtful people to comment first and only then share.

> the most foundational principles of statistics are frequentist.

Thanks for being so clear about that and clarifying general notions of frequency evaluation.

Cantor’s corner, to me, is just a colorful restatement of fallibility in science – never any firm conclusions but rather pauses until we see good benefit/cost of something noticed to be wrong along with anticipating how it might be fixed.

> no contradiction between constructivism understood in this way and Chang’s (2012) “active scientific realism” that asks for action in order to find out about “resistance from reality”, or in other words, experimenting, experiencing and learning from error.

I believe Deborah and Chang would confirm the major source of their views on this are from CS Peirce. Sorry could not help myself and I do believe the sources of ideas are important to be aware of.

#10

Keith comment

I do always credit Peirce as the philosopher from whom I’ve learned a great deal in phil sci, and also other pragmatists. Chang may have learned something about these themes from me (he wrote a wonderful review of EGEK 20 years ago). I’d like to get your take on SIST at some point.

Interesting, didn’t realize the acceptance of the standard model was based on a free parameter like this:

https://en.wikipedia.org/wiki/Weinberg_angle

We can see how they reasoned:

Prescott, C. Y., Atwood, W. B., Cottrell, R. L. A., DeStaebler, H., Garwin, E. L., Gonidec, A., … Jentschke, W. (1978). Parity non-conservation in inelastic electron scattering. Physics Letters B, 77(3), 347–352. doi:10.1016/0370-2693(78)90722-0

So there were some models that predicted exactly A/Q^2 = 0. These are deemed inconsistent with the data: p(D|H) ~ 0 for these models. However there were also two models that allowed A/Q^2 to vary but had a free parameter to fit (the W-S model and the “hybrid” model). Due to the free parameter, predictions of these models are more vague than a point prediction.

However, we can see from their figure we see that it is not like any non-zero value for A/Q^2 would be consistent with these models. The models do predict a precise curve for a given Weinberg angle and “y” (whatever that is): https://i.ibb.co/7pRRxP4/prescott1978.png

The published more data for varying “y” and a similar chart the following year:

https://i.ibb.co/8KpxdpR/prescott1979.png

Prescott, C. Y., Atwood, W. B., Cottrell, R. L. A., DeStaebler, H., Garwin, E. L., Gonidec, A., … Jentschke, W. (1979). Further measurements of parity non-conservation in inelastic electron scattering. Physics Letters B, 84(4), 524–528. doi:10.1016/0370-2693(79)91253-x

So now we see that p(D|W-S) ~ 0.4 and p(D|”hybrid”) ~ 6e-4. They do not seem to have a prior preference between the models, so p(W-S) ~ p(“hybrid”). Remembering that we dropped other models from the denominator that were considered to have p(D|H) ~ 0:

Looks exactly like the bayesian reason that I described here:

https://statmodeling.stat.columbia.edu/2019/03/31/a-comment-about-p-values-from-art-owen-upon-reading-deborah-mayos-new-book/#comment-1009714

tl;dr:

The physicists are not just rejecting A/Q^2 = 0 and then concluding their W-S model is correct like Robert Cousins implied.

They do not consider one model in isolation, rather they compare the relative fit of a variety of possible explanations and choose the best.

Some interesting points I noticed after making the post:

1) Because both W-S and “hybrid” models have the same free parameter it doesn’t matter how vague they are when comparing the two. You can just compare the likelihoods using the single best-fit parameter for each. However, I wonder if those models that predicted a value of exactly zero got dismissed too easily.

Ie, p(D|W-S) ~ 0.4 for the “best” choice of Weinberg angle which is going to be much larger than the likelihoods from the models that predicted A/Q^2 = 0, call those collectively p(D|H). But p(D|W-S) should be lower when we consider all the possible Weinberg angles (the density is spread across all the different values), so maybe the p(D|W-S) >> p(D|H) assumption does not hold.

2) Chi-squared p-values are being used to approximate the likelihoods. Not sure if this matters. The tails will be included for all terms so will somewhat cancel out…

P-value vs likelihood (probability mass) for binomial distribution with p = 0.5: https://i.ibb.co/zJFtyYJ/Pvs-Likelihood.png

x = 0:20

likelihood = dbinom(x, 20, .5)

pval = sapply(x, function(x) binom.test(x, 20, p = 0.5)$p.value)

From this case, I would guess use of a p-value to approximate the likelihood will tend to exaggerate the support for models that are relatively accurate.

The integration of several perspectives on the book of Mayo is really nice – thank you for this initiative.

Let me share my perspective: Statistics is about information quality. If you deal with lab data, clinical trials, industrial problems or marketing data, it is always about the generation of information, and statistics should enable information quality.

In treating this issue we suggested a framework with 8 dimensions (https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2128547). My 2016 Wiley book with Galit Shmueli is about that. From this perspective you look at the generalisation of findings (the 6th information quality dimension). Establishing causality is different from a statement about association.

As pointed out, Mayo is focused on the evaluation aspects of statistics. Another domain is the way findings are presented. A suggestion I made in the past was to use alternative representations. The “trick” is to state both what you claim to have found and also what you do not claim is being supported by your study design and analysis. An explicit listing of such alternatives could be a possible requirement in research paper in a section titled “Generalisation of findings”. Some clinical researchers have already embraced this idea. An example of this form translational medicine is provided in https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3035070. Type S errors are actually speaking this language and permit to evaluate alternative representations with meaning equivalence.

So, I claim that the “statistics war” is engaged in just part of the playing ground. Other aspects, relevant to data analysis, should also be covered. For a wide angle perspective of how statistics can impact data science, beyond machine learning and algorithmic aspects, see my new book titled: The Real Work of Data Science (wiley, 2019). The challenge is to close the gap between academia and practitioners who need methods that work beyond a publication goal measured by journal impact factors.

Integration is the 3rd information quality dimensions and the collection of review in this post demonstrate, yet again, that proper integration provides enhanced information quality. This is beyond data fusion methods (An example in this area can be seen in https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3035807).

For me, the 8 information quality dimension form a comprehensive top down framework of what statistics is about, and it is beyond statistics wars…

I haven’t read the book or know anything about severity testing, but I liked Art Owen’s comment as a read in itself. Based on my own limited and brief (I’m a relative newbie) experience, the “Scientific and Advocacy Loss Functions” section of Owen’s comment really rings true. I think it might be easy to become an “advocate”, even for the most well-meaning scientist or statistician or reader of this blog, and I am skeptical that more education and better methods can ever fix this.

Also, this doesn’t contribute to discussion of Mayo’s book, but I also liked his comment on p-values in his “Abstract”. I know there’s been loads of comments on this on other posts (and I don’t wish to distract from the discussion intended), but I found his comment particularly useful from a practical as opposed to technical explanation.

“Much has been written about the misunderstandings and misinterpretations of p-values. The cumulative impact of such criticisms in statistical practice and on empirical research has been nil.”

(Berry, D. (2017), “A p-Value to Die For,” Journal of the American Statistical Association, 112, 895–897. [Taylor & Francis Online], [Web of Science ®], , [Google Scholar] , p. 896).

Garnett:

I disagree with the claim that the cumulative impact of such criticisms in statistical practice and on empirical research has been nil. For one thing, those criticisms have affected my own statistical practice and my own empirical research. For another thing, a few thousand people read my books and I think this guides practice. The claim of zero impact seems both ridiculous and without empirical support.

Andrew:

I’ll let you argue with Don Berry on that point.

I can say that your work has influenced me in many, many ways, but my opinion on all of this is inconsequential. i.e. nil.

This blog has certainly affected my statistical practice since finishing graduate school a few years ago. So, at least for me, such criticisms in statistical practice has had a profound effect.

However, as a newbie and outsider to all of this until a couple years ago, this entire topic reminds me oddly of my previous career in health and wellness with a focus on obesity prevention. One of the most difficult things in the world seems to be to change human behavior. Incentives of various sorts are always present, acknowledged or not. There seemed to be a school of thought in that arena that if you educated people enough about risks, then they would make the right choices (I guess the thinking was “logically, who wouldn’t make the right choice, considering the risks we’ve pointed out?”). Practically it didn’t seem to work or be that simple. And so, Art Owen’s comment rings particularly true.

While a claim of zero impact isn’t true (because, hey, it has already affected me, so that’s more than zero; and obviously many others), from my frame of reference, incentives are probably a large underlying piece that may not be affected by more education and better methods. So that seems worth considering.

My experience is similar and I agree completely. My goal is to understand why researchers keep doing these things despite the important and lucid criticisms. (And then, what else can we do about it?)

I plugged my own (very large and not very well written) blog post examining the severity notion in the context of adaptive trials in the comments of the previous post previewing some of Art Owen’s commentary. Here I will link to a twitter thread I recently wrote explaining the starting point of that examination. The value to readers here is that it’s a two-part thread and the first part explains (in very abbreviated fashion) what severity is (according to me, but I link Mayo’s definitive paper on the subject in the thread). Part 2 of the thread can be skipped if you don’t care about adaptive trials.

https://twitter.com/Corey_Yanofsky/status/1115725035266813953

Wow, that’s great that you finally addressed the book. I’m halfway through. I thought I’d be finished by now. Been hectic this last week.

I commend Deborah for undertaking the laudable task of presenting the history and the controversies. Very engaging work. I look forward to the discussion. As a novice, I am more cautious in drawing any generalizations.

My thinking style is some mixture of Andrew Gelman, Steven Goodman, and John Ioannidis. I am not a linear/binary thinker. One reason why I am not enamored of NHST to begin probably.

My larger focus is in widening the scope of audiences to these controversies. A hobby actually. Not a career interest as such.

To me, at the heart of statistics is not frequentism, or propositional logic, but *decision making* as such I really like Wald’s contribution. But I don’t want to focus on Wald, I want to talk about this idea of decision making:

There are basically two parts of decision making that I can think of: decision making *about our models* and decision making *about how we construe reality*. On this point, I think I’m very much with Christian Hennig’s constructivist views as I understand them (which is admittedly not necessarily that well). It’s our perception of reality that we have control over, and reality keeps smacking us when we perceive it incorrectly (as will Christian, if I perceive him incorrectly, I hope).

For me, the thing we want from science is models of how the world works which generalize sufficiently to make consistently useful predictions that can guide decisions.

We care about quantum physics for example because it lets us predict that putting together certain elements in a crystal will result in us being able to control the resistance of that crystal to electric current, or generate light of a certain color or detect light by moving charges around or stuff like that. I’m not just talking about what you might call economic utility, but also intellectual utility. Our knowledge of quantum mechanics lets people like Hawking come up with his Hawking Radiation theory, which lets us make predictions about things in the vastness of space very far from “money making”. Similarly, knowledge of say psychology can let us come up with theories about how best to communicate warnings about dangers in industrial plants, or help people have better interpersonal relationships, or learn math concepts easier, or whatever.

When it comes to decision making about our models, logic rules. If parameter equals q, then prediction in vicinity of y… If model predicts y strongly, and y isn’t happening, then parameter not close to q. That sort of thing.

Within the Bayesian formalism this is what Bayes does… it down-weights regions of parameter space that cause predictions that fail to coincide with real data. Of course, that’s only *within the formalism*. The best the formalism can do is compress our parameter space down to get our model as close as possible to the manifold where the “true” model lies. But that true model never is reachable… It’s like the denizens of flatland trying to touch the sphere as it hovers over their plane… So in this sense, the notion of propositional logic and converging to “true” can’t be the real foundation of Bayes. It’s a useful model of Bayes, and it might be true in certain circumstances (like when you’re testing a computer program you could maybe have a true value of a parameter) but it isn’t general enough. Another way to say this is “all models are wrong, but some are useful”. If all models are wrong, at least most of the time, then logical truth of propositions isn’t going to be a useful foundation.

Where I think the resolution of this comes from is in decision making outside Bayes. Bayesian formalism gives us in the limit of sufficient data collection, a small range of “good” parameters, that predict “best” according to the description of what kind of precision should be expected from prediction (which for Bayes is the likelihood p(Data | model))… But what we need to do with this is make a decision: do we stick with this model, or do we work harder to get another one. Whether to do that or not comes down to utilitarian concepts: How much does it matter to us that the model makes certain kinds of errors?

*ONE* way to evaluate that decision is in terms of frequency. If what we care about from a model is that it provides us with a good estimate of the frequency with which something occurs… then obviously frequency will enter into our evaluation of the model. But this is *just one way* in which to make a decision. We may instead ask what the sum of the monetary costs of the errors will be through time… or what the distribution of errors bigger than some threshold for mattering is… or a tradeoff between the cost of errors and the cost of further research and development required to eliminate them… If it takes a thousand years to improve our model a little bit… it may be time to just start using the model to improve lives today. That sort of thing.

So I see Andrew’s interest in frequency evaluation of Bayesian models as one manifestation of this broader concept of model checking as *fitness for purpose*. Our goal in Bayesian reasoning isn’t to get “subjective beliefs” or “the true value of the parameter” or frequently be correct, or whatever, it’s to *extract useful information from reality to help us make better decisions*, and we get to define “better” in whatever way we want. Mayo doesn’t get to dictate that frequency of making errors is All That Matters, and Ben Bernanke doesn’t get to tell us that Total Dollar Amounts are all that matter, and Feynman doesn’t get to tell us that the 36th decimal place in a QED prediction is all that matters…

And this is why “The Statistics Wars” will continue, because The Statistics Wars are secretly about human values, and different people value things differently.

Re: And this is why “The Statistics Wars” will continue, because The Statistics Wars are secretly about human values, and different people value things differently.

Yes, that is the case. Ironically this is more discernable in informal conversations that even in academic debates. Viewing the sociology of expertise is essential to evaluating epistemic environment — different degrees of cognitive dissonance manifest about the conflicts of interests and values. In academia, prestige is harder to garner as you all know, given the changes to university structure and incentives, over the last 30 or more years, which only have aggravated the epistemic environment. In this sense, I thought that John’s ‘Evidence Based Medicine Has Been Hijacked’ was a master presentation, for it points to how medicine is a profit-making endeavor largely.

Andrew’s blog is phenomenal as one of the few venues for the scope of topics, articles, and perspectives.

All the talk about “objectivity” in science is in my opinion a way to sidestep questions of value and usually to promote one set of values without mentioning the fact that we are promoting certain values.

“using an objective measure to select genes for further study” = “we want to study these genes and we don’t want you telling us it’s just because we like to study them”

“Objective evaluation of economic policy” = “We like the results of our study and we want to promote them over whatever you think the alternative analysis and results should have been”

If there can be only one “true objective” way to construe reality, then automatically, if you have a claim to having used it then whatever your opponent thinks is wrong…

I think the platonic view of probabilities on either side is motivated by the same goal—trying to make things “objective” by stipulation.

I think Laplace got the philosophy right that the world can be deterministic and probabilities are just our way of quantifying our own uncertainty as agents (this is not necessarily subjective). That way, you can believe in Laplace’s demon and still use probabilities to reason about your uncertainties.

Daniels

Agree with you completely

I think this is spot on:

I also believe it’s why machine learning is so popular—it’s much more common to see a predictive application in ML than in stats.

I’m also getting more and more focused on calibration and also agree with this characterization:

I wonder what Andrew thinks?

I feel like I’m doing well when Bob agrees with me.

As for predictive applications and ML etc. I think there are two senses in which we want good predictions. One narrow sense is we want to predict the results of the given process at hand… How big the sales will be if we show advertisement A with frequency F to people searching keywords K, for example (typical maybe ML application).

But also there are things where we don’t care about the predictions of the model at all, we care about inference of the parameter so that it can be used elsewhere, and this is more sciencey.

In experiments of type V (for Viscometer) the data about the duration of some fluid flow of a particular volume through a particular tube is D with fluid sampled from engine E today and D_old from samples taken yesterday, how many more days will the engine run before the viscosity reaches a level where we expect in entirely different model M that we will get damage to the engine?

And this is more what science is about. The unobserved parameters in good science are meaningful in and of themselves because they’re connected across multiple scenarios by being independently predictive of stuff about the world in a wide variety of models.

To expound slightly, because I realize this wasn’t necessarily obvious: we could have a pretty simplistic model of the viscometer, it might do a kind of lousy job of predicting the flow data through the special tube, perhaps because there’s some kind of uncontrolled clock error (a person has to press buttons on a stopwatch say, and we don’t have a good model for the person) but as long as it does a good job of inferring the viscosity after re-running the experiment with the person and the stopwatch a reasonable number of times, that’s all you’ll be using it for anyway, because you’ll be taking the viscosity you infer, and plugging it into some other model of say wear on the engine…

This is reminiscent of the parable of the orange juice: http://models.street-artists.org/2014/03/21/the-bayesian-approach-to-frequentist-sampling-theory/

the orange juice model predicts individual orange juice measurements *in a terrible way* but it converges to the right average, which is what you care about.

ML may give you *fantastic* predictions of the actual timing data from the viscometer experiment, but since it has no notion of viscosity baked in… it’s *completely and totally useless*. All of its parameters are things like “weights placed on the output of fake neuron i” or whatever.

In fact the way it gets such good viscometer predictions is probably that it internally “learns” something about the combination of fluid viscosity and muscle fatigue on the right thumb of the stopwatch holder… or something like that.

On Lakeland (lost track of the numbering)

I just want to say that the major position in SIST is to DENY that the frequency of errors is what mainly matters in science. I call that the performance view. The severe tester is interested in probing specific erroneous interpretation of data & detecting flaws in purported solutions to problems.

I thank Andrew for gathering up these interesting reflections on my Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (2018, CUP). I was not given the reviews in advance, so I will read them carefully in full and look forward to making some responses. My view of getting beyond the statistics wars–at least as a first step–is simply understanding them! That is one of the main goals of the book. More later.

While reading this I finished off a pound of BBQ brisket. Both were delicious and both will take a good while to digest. Many thanks for hosting these discussions.

I find Owen’s discussion a bit frustrating. He frames severity as a competitor to his preferred “confidence analysis” approach and this (in my view) shows that he misunderstands what Mayo is trying to do. Owen asserts that there’s evidence for an inference if a one-sided confidence interval supports it. An anti-frequentist of whatever stripe could say to Owen, “Why are you calling that ‘evidence’? On the one hand, you have a rule with certain long-run properties and on the other hand you have a particular realization of a random variable that satisfies the rule. In what way to aggregate properties of the rule justify your specific claim here and now?” As discussed by Mayo here (1:28:28 to 1:29:37), part of the point of the severity rationale is provide an answer to this challenge, thereby justifying Owen’s preferred approach. But Owen doesn’t even see that it needs any such justification.

Maybe this is just an intrinsically hard idea to get across — I missed it on my first pass too. I guess my frustration is simply that if people can’t understand this much from what Mayo has written then they won’t have a complete enough understanding to comprehend (what I claim is) the the weak point in the severity rationale.

Interesting video. At ~ 5:30:

Ioannidis quote ~ “People claim conclusive evidence based on a single statistically significant result”

Mayo (surprised): “Do people do that!? I find that really amazing…”

Even worse than that is central premise behind 99% of the medical and social science literature for the last 70 years. They claim conclusive evidence for the scientific hypothesis…

Then a bit later someone in the audience points out “there is no mention of H* [the scientific hypothesis] for either Fisher or Pearson in your exposition”. Some discussion proceeds which doesn’t really clarify/resolve anything.

That is the problem I have with this type of stuff. It isn’t that it is wrong, it just attempts to focus on minutia while missing the elephant in the room (the connection people have been drawing between “H0” and “H*” is totally illegitimate).

Then this stopping rule thing that always comes up…

The data generating process is different if you collect 100 datapoints vs you collected datapoints until some threshold is reached. You need to use a different model for the different situations.

The likelihood needs to reflect the actual process that generated the data. You model the process you think generated the data (your theory) and then derive a prediction (likelihood) from it. Why is not only Mayo but someone in the audience acting like the only thing that could be different is the prior?

That result that held for binomial vs negative binomial sampling — the likelihood is the same for both data collection designs — hold generally. Working out the math in the normal case is an interesting exercise.

What do you mean by “generally”?

Typically the stopping rule is not “get a certain number of success/fails” like in that example, instead it is “collect data until n_min points; if p > then keep going until either you run out of money or p < 0.05”.

typo:

…if p > then keep going until…

Also, in that special case where the likelihoods end up the same I don’t see why that is a problem.

I don’t know what keeps making it drop the value. Should be:

p [greater than] 0.05

It interprets < as the opening of an html tag — to make that character appear I had to write <. (And & is also a special character, so to make < appear I had to write &lt; and so on.)

If you can make it through my blog post linked below, you’ll see a Cox/Bayes perspective on the logic of inference with a stopping rule. Think about it this way: if I tell you the data without you knowing the stopping rule you will come up with some model for the data.

Now I show you the data and you will make some Bayesian inference. You will also be able to calculate N exactly.

Now, I tell you the stopping rule, should your inference change?

In most cases, all you discover from the stopping rule is *why* you have N data points. Since you already have the data, you don’t learn anything about N for example.

Only in the situation when you can create a model in which you know something but not everything about the stopping rule will you be able to infer something about the stopping rule by using N as an additional kind of data.

For example, in your scenario, you can learn that they had more than $$$ much money because your model of the stopping is “go until you run out of money or get p < 0.05” and you saw N data points and you know that each one costs $X so they had at least $NX available…

Yes, my inference about the model I came up with should change since the denominator of Bayes’ rule changes when I “add” this new (more accurate) model.

The information you learn though is *about the experimenter* if none of the predictive equations in your model for the experimental outcomes changes, then your inference for the scientific process doesn’t change.

It can be the case that you have informative stopping rules, but this is precisely when you have a model for stopping that is less than completely certain.

Here are some examples:

I tell you my stopping rule is two H in a row, and I tell you my data is

HTHTTTHHTHT

you can calculate that I’m a liar, because I should have stopped at the 8th coin flip if I were being honest. But do you learn anything more about my coin?

Suppose I give you just “I flipped 7 times and got”

HTHTTTH

what is your model?

Now suppose I say “I flipped at least 7 times and the first 7 were”

HTHTTTH

Now suppose I say “I flipped at least 7 times, the first 7 were as below, and also I stopped after getting 2 heads and btw I stopped at the 10th flip”

HTHTTTH

Here the stopping rule and the number 10 together are informative. I can use them to infer *something* about the missing data which is an additional parameter.

I don’t disagree with this. Like I did here:

https://statmodeling.stat.columbia.edu/2019/03/31/a-comment-about-p-values-from-art-owen-upon-reading-deborah-mayos-new-book/#comment-1010811

You seem to be looking at it from a perspective I am not appreciating. I simply think that stopping rule in general requires changing the likelihood. There seem to be some cases where it works out to be the same, which I find very interesting, but not that relevant to general practice.

In a Bayesian analysis there are informative stopping rules and non-informative stopping rules. Carlos Ungil and I hashed that out quite a bit at one point. Summary was here:

http://models.street-artists.org/2017/06/24/on-models-of-the-stopping-process-informativeness-and-uninformativeness/

Yes, I would expect with typical stopping rules the likelihood is different. Eg the likelihood for the probability if sample til n = 100 vs sample til n = 10 then keep going until either p-value < 0.05 or n = 100: https://i.ibb.co/0Br6CHw/optstop.png

Code: https://pastebin.com/Nbszur1v

So I feel like I am missing something. The stopping rule discussion seems to be just about a special case where the likelihoods happen to be the same?

Yes, it is a special case. What happens is that the likelihood has two factors: (i) the probability that you stopped where you did, and (ii) the density of the data truncated by the stopping rule. It turns out that the normalizing constant of the second factor contains the parameter-dependent part of the first factor, so it always cancels out, leaving the likelihood proportional to that of a fixed sample size design.

Sorry, it is not clear to me. Are you saying this also applies to my example (sample til n = 100 vs sample til n = 10 then keep going until either p-value < 0.05 or n = 100) in the parent comment?

If so can you work it out so I can see how the stopping rule cancels out?

What if I put it this way: If I observe 80% “success” rate* wouldn’t you think the “stopping rule” model could better explain the results than the collect until 100 datapoints model?

* I see the x-axis in those plots is proportions yet labeled as percentages but you know what I mean

Yes, it applies to your example. I mean, I can work it out (or at least I could seven years ago), but I’m not going to. You’d learn more if you attempted it yourself anyway…

Ok, I find it very interesting that one could be transformed to the other via enough thought/effort. But still the likelihoods for the measured value (“% success”) are different as is clearly shown by the simulation.

The second simulation includes *lots* of outcomes where n was bigger than 10

if you have a dataset n=38 you are never in the situation where you have to compare “fixed N=10” vs “not fixed but N turned out to be 10”

Obviously if you have 35 data points you can rule out the idea that the rule was “sample until n = 10” but subset your second simulation to just the cases where the rule triggered exactly 10 data points and the p values were basically the same.

Now, if you know you were in the first case, vs you know you were in the second case, does the inference differ?

You are thinking about it completely differently than me.

Yes, obviously my inference differs since the first “case” (model) is different than the second case. You are focusing on one parameter value while I am thinking of the model as a whole.

I care about choosing the correct overall model. Look at how particle physicsists distinguished between

the “W-S” model and “hybrid/mixed” model here. The parameter sin^2(theta) could be the same but lead to different predictions from different models.

The part I think we’re confused about is that you have to *fix the data that you observe* and consider the parameter.

of course, in two different scenarios you’re likely to get totally different data… If your plan is “flip the coin 10 times” you’ll get a whole different distribution of possible flips than if your plan is “flip 10 times, then continue flipping until either p less than 0.05 against the test of binomial(p=0.5) or you get to 100”

But limit it to some specific set of data that is compatible with either model, and then try to see if you get a different *likelihood function* (ie. p(Data | model parameters) evaluated for the given Data)

Here’s what we do, we flip 10 times and filter it to results where binomial tests give p less than 0.05… this is the situation where you have fixed number of flips.

then do flip 10 times and continue until p less than 0.05 or you get to 100… and subset this to the situations where you got 10 flips….

now we imagine we’re in the situation “I have 10 flips and p is less than 0.05” would knowing which of the two processes occurred give you different probabilities over the outcomes?

I don’t understand why you would?

Likelihood is p(Data|Model). The model is fixed not the data…

My goal is simply to determine which model of the process that generated the data is best. How does doing this help me?

Isn’t it obvious that if N=38 you’ve completely ruled out the “flip 10 times” model?

so you’re only in the case where there’s any contention at all when the outcomes is compatible with both models.

This is the only case where p(Model1) doesn’t equal 0

Yes, but not the flip until n=38+ model.

Maybe I am getting this argument now. It is that we should always know the sample size (n), so every model of data generation should include n as a parameter. If you do that, optional stopping always leads to the same likelihood. Is that it?

The situation we are in is:

1) someone gives us some fixed data D.

2) For simplicity let’s say a priori p(M1) and p(M2) are both equal to 0.5

3) Model M1 is “flip exactly N times, where N = the number of times we flipped in data D” (otherwise p(Data | M1)=0)

4) Model M2 is “flip with some rule in which N is a possible outcome logically possible under the rule” (otherwise P(Data | M2)=0)

5) Decide whether M1 or M2 is the true model

Oops, and also decide what the binomial frequency f is.

It seems to amount to the idea we always need to include sample size as a parameter when deriving the likelihood from our models. My simulation histograms do not do that, which is why they look different even though the likelihood would be the same if I took that into account.

No, I still cannot accept this. Let’s say I want to find out which process generated the data (sample til 100 vs sample til stopping rule). If I observed ~80% successes I would prefer the second regardless of sample size. This is wrong?

Also I apologize to Mayo if this is a major distraction from her main points, but it was triggered by watching her lecture so…

Your graphs show frequency of Nheads/Ntotal

If you ask someone “do an experiment and report to me the fraction of heads you found” and they report “I did an experiment and found 1/3 heads” how are you going to assess this? Isn’t there a difference between “I flipped 10^6 times and got exactly 1/3 heads” and “I flipped 3 times and got 1 head” ?

basically your plot shows p(fraction of heads | experiment was run) for two different experiments. It’s very relevant if someone *only reports the fraction* not the N.

Anoneuoid:

You are taking likelihood to mean something other that what others mean by it – in particular, most think of the data as fixed with parameters varying.

This is not that uncommon and why I wrote this – https://statmodeling.stat.columbia.edu/wp-content/uploads/2011/05/plot13.pdf see appendix.

#9

A discussion of the stopping rule dropping out is Excursion 1 Tour II: https://errorstatistics.com/2019/04/04/excursion-1-tour-ii-error-probing-tools-versus-logics-of-evidence-excerpt/

#5 (to Corey’s comment)

Thanks for your point. I totally agree, but notice this has been thought to be a great big deal for many years (witness the recent Nature “retire significance” article).

My #4 comment alludes to Owen’s remarks about CIs and SEV, and why I see SEV as improving on CIs. In the ex. he discusses, the 1-sided test mu ≤ mu’ vs mu>mu’ corresponds to the lower conf bound. Interpreting a non-rejection (or non-SS result, or p-value “moderate”)* calls for looking at the upper conf bound.

* See how hard it’s becoming to talk now (that some advocate keeping p-values, but not saying “significant” or “significance”? I hope that we can at least get beyond those word disputes (but people will shout this down I suspect.)

> [Cousins’ monograph] is unfortunately a bit out of date when it comes to the philosophy of Bayesian statistics, which he ties in with subjective probability.

He seems to discuss both the “subjective” and “objective” points of view, and this paragraph sounds to me quite similar to some of your writings:

“Another claim that is dismaying to see among some physicists is the blanket statement that “Bayesians do not care about frequentist properties”. While that may be true for pure subjective Bayesians, most of the pragmatic Bayesians that we have met at PhyStat meetings do use frequentist methods to help calibrate Bayesians statements. That seems to be essential when using “objective” priors to obtain results that are used to communicate inferences from experiments.”

What I find interesting is that he seems to critique Jaynes for being too objectivistic (arguing for existence of priors representing ignorance) and too subjectivistic (ignoring the importance of having good frequentist characteristics). I may be misunderstanding the mentions, though. The subjective/objective distinction doesn’t make much sense anyway.

(BTW, the previous message was also me forgetting to complete the name/mail fields that were automatically filled in the good old days…)

Let me begin by addressing Wagenmakers who wrote:

“I cannot comment on the contents of this book, because doing so would require me to read it, and extensive prior knowledge suggests that I will violently disagree with almost every claim that is being made.”

You shouldn’t refuse to read my book because you fear you will disagree with it (let alone violently). I wrote it for YOU! And for other people who would say just what you said. SIST doesn’t hold to a specific view, but instead invites you on a philosophical excursion–a special interest cruise in fact–to illuminate statistical inference.