Last month we reported on the book Why We Sleep, which had been dismantled in a long and detailed blog post by Alexey Guzey. A week later I looked again, and Walker had not responded to Guzey in any way. In the meantime, Why We Sleep has also been endorsed by O.G. software entrepreneur Bill Gates. Programmers typically have lots of personal experience of sleep deprivation, so this is a topic close to their hearts.

As of this writing, it seems that Walker still has not responded to most of the points Guzey made about errors in his book. The closest thing I can find is this post dated 19 Dec 2019, titled “Why We Sleep: Responses to questions from readers.” The post is on a site called On Sleep that appears to have been recently set up—I say this because I see no internet record of it, and it currently has just this one post. I’m not trying to be some sort of sleuth here, I’m just trying to figure out what’s going on. For now, I’ll assume that this post is written by Walker.

The post begins:

The aim of the book, Why We Sleep, is to provide the general public access to a broad collection of sleep research. Below, I address thoughtful questions that have been raised regarding the book and its content in reviews, online forums and direct emails that I have received. Related, I very much appreciate being made aware of any errors in the book requiring revision. I see this as a key part of good scholarship. Necessary corrections will be made in future editions.

The first link above goes to a page of newspaper and magazine reviews, and the second link goes to Guzey’s post. I didn’t really see any questions raised regarding the book in those newspaper and magazine reviews, so I’m guessing that the “thoughtful questions” that Walker is referring to are coming entirely, or nearly entirely, from Guzey. It seems odd for Walker to cite “online forums” and only link to one of them. Also, although Walker links to Guzey, he does not address the specific criticisms Guzey made of his book.

And this makes me think of Dan Davies’s maxim, Good ideas do not need lots of lies told about them in order to gain public acceptance. (Oddly enough, that classic quote appeared many years ago on a blog called “Economics and similar, for the sleep-deprived.”)

If Walker really knows his stuff, why would he write a book with so many errors?

On the other hand, it’s possible that Walker does know his stuff, or at least some of his stuff, and is just sloppy. He’s a sleep expert, not a statistics expert.

Looking at one specific claim

To try to get a sense of what’s going on, I decided to take a look at some particular disputed claim. I picked an item that’s purely statistical, with no confusing issues of biology: “Does the number of vehicular accidents caused by drowsy driving exceed the combined number of vehicular accidents caused by alcohol and drugs?”

From Guzey’s blog:

“In Chapter 1, Walker writes ‘vehicular accidents caused by drowsy driving exceed those caused by alcohol and drugs combined’. This shows how dangerous it is to not sleep and you have not refuted this part.”

I [Guzey] did look into this. I was not able to find any data on vehicular accidents caused by drowsy driving. However, the data by the National Highway Traffic Safety Administration on accidents that involve drowsy driving and that involve drugs and alcohol does not support this assertion.

According to this data, 1.2-1.4% (Table 2 in linked file) of car crashes involved drowsy driving, while 2.8% (Table 7 in linked file) of car crashes involved driving while having the alcohol blood concentration above the legal limit in the US (and this is not including accidents involving drugs).

From Walker’s blog:

Fatal vehicle accidents associated with drowsy driving are estimated to be around 15-20% (see also data from the AAA Foundation for Traffic Safety).

This is a big difference! Guzey says “1.2-1.4% of car crashes involved drowsy driving”; Walker says “Fatal vehicle accidents associated with drowsy driving are estimated to be around 15-20%.” Guzey’s talking about all accidents, Walker’s just talking about fatal accidents. But that can’t explain a factor of 14!

Fortunately, both bloggers give references here, so we can follow the links and check.

Indeed, if you follow Guzey’s source, which is a report from the U.S. National Highway Traffic Safety Administration, you’ll see an estimate that drowsy driving was involved in 2.3% of fatal crashes.

Walker’s first link is to a news article that states “Drowsy driving is estimated to be a factor in 20 percent of fatal crashes,” but provides no sources. His second link is to a report from the AAA Foundation for Traffic Safety that begins:

According to the National Highway Traffic Safety Administration (NHTSA), approximately . . . 2.5% of all fatal crashes in years 2005 – 2009 involved a drowsy driver . . . However, the official government statistics are widely regarded as substantial underestimates of the true magnitude of the problem. . . . unlike impairment by alcohol, impairment by sleepiness, drowsiness, or fatigue does not leave behind physical evidence, and it may be difficult or impossible for the police to ascertain in the event that a driver is reluctant to admit to the police that he or she had fallen asleep . . . This inherent limitation is further compounded by the design of the forms that police officers complete when investigating crashes, which in many cases obfuscate the distinction between whether a driver was known not to have been asleep or fatigued versus whether a driver’s level of alertness or fatigue was unknown.

They take a sample of crashes and use a statistical modeling approach with multiple imputation to estimate drowsiness levels, concluding that 21% of all fatal crashes involved a drowsy driver.

So it seems like Walker’s claim is reasonable here. I can see how Guzey could’ve stopped at the NHTSA data, but this AAA report seems to be making some good points, and the 20% of fatal crashes involving drowsy driving seems as reasonable as any other number out there.
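For readers unfamiliar with multiple imputation, here is a toy sketch of the general idea in Python. It is not the AAA Foundation's model: the data are synthetic, and the function names (`make_synthetic_crashes`, `naive_estimate`, `multiply_impute`), the single `night` covariate, the drowsiness rates, and the 50% missingness are all assumptions made up for illustration. Missing drowsiness values are filled in repeatedly, using a rate drawn from a posterior within each stratum, and the resulting estimates are pooled with Rubin's rules.

```python
import random
from statistics import mean, variance


def make_synthetic_crashes(n, rng):
    """Build hypothetical crash records as (night, drowsy) pairs.

    drowsy is True/False when recorded and None when unknown.
    All rates below are invented for illustration only.
    """
    crashes = []
    for _ in range(n):
        night = rng.random() < 0.3
        drowsy = rng.random() < (0.35 if night else 0.10)
        recorded = rng.random() < 0.5  # status is missing about half the time
        crashes.append((night, drowsy if recorded else None))
    return crashes


def naive_estimate(crashes):
    """Share of all crashes with a recorded drowsy driver (unknown counts as not drowsy)."""
    return sum(1 for _, drowsy in crashes if drowsy is True) / len(crashes)


def multiply_impute(crashes, n_imputations, rng):
    """Return (pooled estimate, standard error) for the overall drowsy share.

    Assumes drowsiness status is missing at random given the night covariate.
    """
    # Count recorded outcomes per stratum: [drowsy, not drowsy].
    counts = {True: [0, 0], False: [0, 0]}
    for night, drowsy in crashes:
        if drowsy is not None:
            counts[night][0 if drowsy else 1] += 1

    n = len(crashes)
    estimates, within_vars = [], []
    for _ in range(n_imputations):
        # Draw each stratum's rate from its Beta posterior (uniform prior),
        # so that uncertainty about the rate carries into the imputations.
        rate = {k: rng.betavariate(1 + d, 1 + nd) for k, (d, nd) in counts.items()}
        total = 0
        for night, drowsy in crashes:
            if drowsy is None:
                drowsy = rng.random() < rate[night]
            total += int(drowsy)
        p = total / n
        estimates.append(p)
        within_vars.append(p * (1 - p) / n)

    # Rubin's rules: total variance = within + (1 + 1/M) * between.
    pooled = mean(estimates)
    total_var = mean(within_vars) + (1 + 1 / n_imputations) * variance(estimates)
    return pooled, total_var ** 0.5


if __name__ == "__main__":
    rng = random.Random(2019)
    crashes = make_synthetic_crashes(2000, rng)
    pooled, se = multiply_impute(crashes, n_imputations=20, rng=rng)
    print(f"naive share:   {naive_estimate(crashes):.3f}")
    print(f"imputed share: {pooled:.3f} (SE {se:.3f})")
```

Counting every "unknown" as "not drowsy" gives the naive figure. The imputed figure comes out higher, but it is only as trustworthy as the assumption that the unknown cases resemble the known ones within each stratum.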

Summary

Based on his book and his TED talk, it seems that Walker has a message to send, and he doesn’t care much about the details. He’s sloppy with sourcing, gets a lot wrong, and has not responded well to criticism.

But this does not mean we should necessarily dismiss his message. Ultimately his claims need to be addressed on their merits.

P.S. More here. It doesn’t look good.

P.P.S. I was also wondering about this bit from the On Sleep blog post:

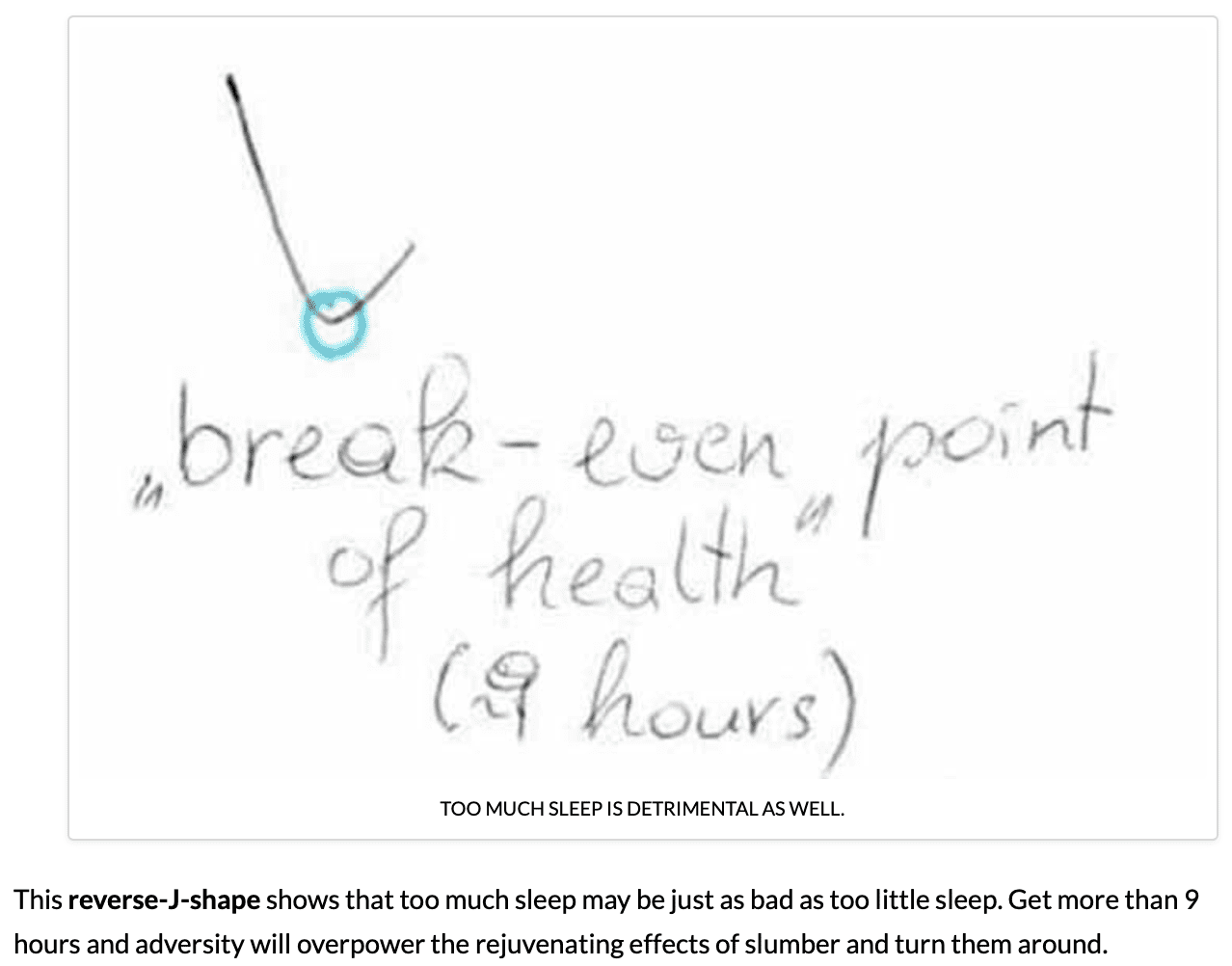

Why did the blogger refer to this as a “reverse-J-shaped function”? Wouldn’t most people call this a “U shape”? I noticed this earlier and just figured it was one of those two-countries-separated-by-a-common-language things (we say trunk, they say boot; we say quotation mark, they say inverted comma; we say U, they say reverse-J), but then, after seeing the graph manipulation discussed here, I wondered if something else was going on.

I did some googling and I suspect I know what’s going on. I don’t have a copy of Why We Sleep and I couldn’t find the exact passage from the book, but I found this picture online:

The point of using “reverse-J” rather than “U” is that reverse-J is asymmetric, implying that worse things happen at low sleep hours than at high sleep hours. I think it’s more accurate to describe the diabetes curve posted by the On Sleep blogger as a U, not a reverse-J.

This is no big deal in itself, but it does fit in the larger pattern of mischaracterization of research results in a way that supports the author’s larger position. I have no reason to believe this particular error (if you want to call it that) is intentional; it seems likely that, once you’re looking for a reverse-J, you’ll see it anywhere. I guess it’s just one more researcher degree of freedom, whether to describe a result using a symmetrical or asymmetrical framing.

Andrew: Interesting analysis; nicely done. I was confident for the first 2 weeks following Guzey’s critique that Walker would surely surface with a full-throated point-by-point response. Humbled by Walker’s long silence, though, I realize now that I’ve been leaving the runway lights on for Amelia Earhart!

I’ve also been disappointed that he hasn’t responded.

But I’m glad that this site is staying on it. I find this kind of thing super interesting.

The problem I have with the Tefft paper on imputation is that there doesn’t seem to be any validation of the variables used to do the imputation. If one is going to assume, for instance, that 30% (or whatever it is; it’s not stated in the paper) of people driving late at night are drowsy, then you’ve simply begged the question. I don’t see anything in the Tefft paper where each of the variables used is validated or what the errors for those are. At some point, it seems to me, you are not doing imputation as much as just propagating error.

I’m not a statistician. I’m a forensic pathologist and computer scientist, but this reminds me of a common problem with respect to inferring cause and manner of death. Such inferences necessarily require an estimate of prevalence or prior probability, and these are almost always poorly characterized. Arguments about these classifications commonly do not involve disagreements about the actual objective findings, but rather prevalence estimates.

Worse, they are self-defining. A classic example is the issue of Sudden Infant Death Syndrome versus unsafe sleeping/asphyxia. SIDS definitionally provides a negative autopsy; asphyxia commonly does. Twenty years ago, asphyxial death classifications were not that common — you basically needed to demonstrate an obvious airway obstruction by physical means (e.g. livor pattern demonstrating a limb over the face, obvious wedging in a couch at the scene, etc). Now, any case of negative autopsy with fluffy bedding gets a diagnosis of asphyxia. This, in turn, results in massively increased estimates of prevalence, which in turn encourages lowering the bar on classifying a case as asphyxia, which increases the estimate of prevalence, and on and on. So, if I were going to use “fluffy bedding” as a variable in my imputation, what should I use? The 1980 estimate that gives it little weight, or the 2019 estimate that almost certainly gives it too much?

This was a huge deal a couple of decades ago when a pathologist in the UK lost his license for calling a death a homicide based on a bad estimation of probability. Unfortunately, the paper that was published to refute it made equally bad assumptions. The first pathologist assumed that in the case of serial SIDS each case was independent and a natural death equally unlikely each time, which overestimated the prevalence of abuse. However, the paper that refuted it made the assumption that all cases were natural unless there had been a conviction in court — which massively underestimated the prevalence of abuse.
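The effect of the independence assumption can be sketched numerically. In this Python snippet the baseline probability and the `dependence_factor` are made-up values, not figures from the case, and `prob_two_deaths` is a hypothetical helper. If shared genetic or environmental factors make a second natural death more likely after a first, then multiplying the single-death probability by itself understates how often two natural deaths occur in one family, and so overstates the case for abuse.

```python
def prob_two_deaths(p_single, dependence_factor=1.0):
    """Probability of two natural deaths in one family.

    p_single: probability of one such death (hypothetical value).
    dependence_factor: how many times more likely a second death is,
        given a first; 1.0 means fully independent (hypothetical value).
    """
    p_second_given_first = min(1.0, p_single * dependence_factor)
    return p_single * p_second_given_first


if __name__ == "__main__":
    p = 1 / 1000  # made-up rate, for illustration only
    independent = prob_two_deaths(p)
    dependent = prob_two_deaths(p, dependence_factor=10.0)
    print(f"assuming independence:    1 in {1 / independent:,.0f}")
    print(f"with shared risk factors: 1 in {1 / dependent:,.0f}")
```

The gap between the two printed figures is exactly the assumed dependence factor, which is the quantity that tends to be poorly characterized.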

Accordingly, when I see studies such as this one on drowsy driving, I am very skeptical, because I know that a lot of the estimates are pretty bad, but always seem to be taken at face value when used for statistical modeling. It seems that filling in missing variables with unvalidated and error-filled proxies doesn’t help a lot.

I wonder if I can give a reason why Walker’s message SHOULD be dismissed: incentives. His apparent “sloppiness” has undoubtedly led to a lot of confusion regarding sleep, and erodes trust in experts. He should relinquish the mantle of sleep expert and someone more careful should step up. It should be a lesson to those who want to be famous for what they know and want to influence people’s behavior. “I better be careful about what I say with confidence lest I be shunned like Walker” should be something that wannabes tell themselves.

“Economics And Similar” was Davies’ earlier blog.

Guzey seems to give minimal attention to the relationship of sleep deprivation and cognitive performance, with the only comment referencing his own results from some cognitive tests on a website with a handful of cognitive tests and no information about their validity, reliability, nor ability to discriminate at the high and low ends. Given that cognitive performance is of substantial importance for many people within many types of careers, it seems unlikely it was ignored unintentionally, but rather because it did not serve his argument.

Sleep deprivation: Impact on cognitive performance

Paula Alhola (Department of Psychology, University of Turku, Turku, Finland) and Päivi Polo-Kantola (Sleep Research Unit, Department of Physiology, University of Turku, Turku, Finland)

The sleep-deprived human brain

Adam J. Krause, Eti Ben Simon, Bryce A. Mander, Stephanie M. Greer, Jared M. Saletin, Andrea N. Goldstein-Piekarski, and Matthew P. Walker

Department of Psychology and Helen Wills Neuroscience Institute, University of California, Berkeley, California 94720–1650, USA

>Guzey seems to give minimal attention to the relationship of sleep deprivation and cognitive performance, with the only comment referencing his own results from some cognitive tests on a website with a handful of cognitive tests and no information about their validity, reliability, nor ability to discriminate at the high and low ends. Given that cognitive performance is of substantial importance for many people within many types of careers, it seems unlikely it was ignored unintentionally, but rather because it did not serve his argument.

It did not serve which argument of mine exactly?

I never claim and I never suggest in my essay that people should go around sleep deprived. The only thing that I write is “When I slept for 6 hours a night with no naps for 5 days, I felt pretty bad, but there was no difference in my cognitive scores, compared to the baseline.”

Also, here’s the information about the cognitive tests on that site in the section of the site helpfully called “The Science Behind Quantified Mind”: http://www.quantified-mind.com/science

>I never claim and I never suggest in my essay that people should go around sleep deprived.

I should’ve been more careful here. I did suggest that acute sleep deprivation is helpful in depression, while also noting that “Chronic or externally imposed sleep deprivation is an entirely different matter and has no relation to sleep deprivation therapy”: https://guzey.com/books/why-we-sleep/#no-a-good-night-s-sleep-is-not-always-beneficial-sleep-deprivation-therapy-in-depression.

Alex:

Using a single example of a self administered cognitive assessment is misrepresenting the effects of sleep deprivation – it’s journalism and entertainment, not science. The test you took isn’t the least bit relevant.

First, it’s a sample of one, which is more than a little hypocritical coming from a critic of statistical work;

Second, it seems patently obvious that a person who knows they’re being tested and has experienced only modest sleep deprivation could perk themselves up for a short test

Third, there’s nothing indicating that the tests at the referenced site have any validity for anything. The site claims it is the “first testing platform that is designed for repeated measures” – it doesn’t claim that it works, probably for good reason.

All of this is exactly why that section is called “Appendix: my personal experience with sleep”

>Third, there’s nothing indicating that the tests at the referenced site have any validity for anything. The site claims it is the “first testing platform that is designed for repeated measures” – it doesn’t claim that it works, probably for good reason.

If you have suggestions for a good way to test the effects of sleep deprivation on myself, I’m open to them. I’m planning to run a more formal sleep deprivation experiment in early 2020.

Current plan is to test right after waking up and before going to bed for 3 weeks.

week 1: sleep 7-8 hours to establish the baseline

week 2: sleep 3 hours to see progressive effects of sleep deprivation

week 3: sleep 7-8 hours to see the speed of return to baseline and any lingering effects

Alexey:

I recommend working with others on this sort of data collection. I only say this because my friend Seth Roberts was really into self-experimentation, and this was fine at first, but eventually he started to believe his own hype. His self-measurements became whatever he wanted to find, and he ended up in a skepticism-free feedback loop of spurious discovery. It’s even possible that his self-experimentation killed him.

I’m not saying not to do self-experimentation. Just be careful not to overinterpret self-recorded data.

I miss Seth. Was pretty shocked at the time to hear that he passed away given that he was always trying to optimize his biomarkers, but it also wasn’t surprising since his self experimentation went a tad bit too far (consuming large amounts of butter and supplemental oils), even for those who were really into the quantified self movement

https://perfecthealthdiet.com/2014/06/seth-roberts-appreciation/

>Second, it seems patently obvious that a person who knows they’re being tested and has experienced only modest sleep deprivation could perk themselves up for a short test

I don’t understand this point. If you can perk up for a short test without any external stimulants, doesn’t this kind of demonstrate that sleep deprivation, as described in the comment you’re responding to, doesn’t do much?

I think it’s possible to perk up for a *short* test, like 10 minutes, but it’s a transient effect. If you want to sit for a 6 hour board exam there’s nothing you can do to undo the sleep deprivation effect. That’s my impression from personal experience.

Informally, I tried sleeping for 3 hours a day for 3 days in a row recently and had no problems focusing on StarCraft 2 and other mentally demanding video games for 10-15 hours a day.

I also think this is highly variable from one person to another and even from one age to another within person.

Video games! If I played video games for 10 hours straight, I’d totally run out of quarters.

Alexey:

Thank you for proving my point.

While I also think it would be interesting to hear his (or others’) take on the relationship between sleep deprivation and cognitive performance, I do not think he can be criticized for not giving it in an essay that explicitly says “in this essay, I’m going to highlight the five most egregious scientific and factual errors Walker makes in Chapter 1 of the book”.

Totally off topic but I have some questions:

So I followed some links and have been reading articles, blog posts and resulting course materials precipitated by California’s passage of AB 705. The idea behind the new law was that because remedial courses didn’t count towards 4-year transfers/degrees too many students were frustrated by the extra seemingly unrewarded work and dropped out. The solution is to place students according to their wishes/high school grades and scrap the math test that had been used to assign those deficient in algebra or even arithmetic to remedial courses and instead put those who “need a rigorous numbers-related course” into …. you guessed it: Statistics.

“It may be because there were other classes they preferred to teach … or maybe even because they never took an introductory statistics course themselves. The reality of a post-AB705 world is that not teaching intro stats is no longer an option. At our college we are anticipating tripling the number of sections on introductory statistics.”

“Many students who would have been placed into Introduction to Algebra or even Arithmetic are getting As and Bs in college statistics.”

“I got an A in College Statistics, and now I am on my way” – from a student who had serially flunked algebra in high school.

Of the few course materials I’ve found most require the use of Pearson’s “Statcrunch” which from the YouTube videos appears to be Stata with fewer buttons and more sparkly results.

So to my questions. What role does a solid foundation in algebra through calculus play in properly understanding statistics? Could it be that people unencumbered by math have better statistical inferences? How should variables be analyzed when you don’t know what a variable is?

And finally a question to myself: “How will I go after the opposing side’s expert who in his deposition gave the wrong answers to questions about NHST, p-values, CIs, power, etc. when the jury is full of people who think they’ve mastered statistics and who, according to one course outline, covered all of those topics in a single 50 minute lecture?”

“How will I go after the opposing side’s expert who in his deposition gave the wrong answers to questions about NHST, p-values, CIs, power, etc. when the jury is full of people who think they’ve mastered statistics and who, according to one course outline, covered all of those topics in a single 50 minute lecture?”

Badabing.

As the pressure has risen for everyone to have an equal degree, it’s already clear that

1) the cost of providing education to an overall less-qualified student body is rising;

2) the educational value of a degree – any degree, but mostly BA’s – is falling;

3) the monetary value of a degree is falling;

4) the motivational value associated with earning a degree is falling.

No doubt the “improve instruction” movement among faculty is at least in part a response to less prepared and less motivated students.

For the record, I’m only speaking anecdotally from my experience and from some personal intuitions, but I’d like to chime in on your questions which get to the crux of how stats—and generally quantitative reasoning—might be taught better.

> What role does a solid foundation in algebra through calculus play in properly understanding statistics?

Honestly, I haven’t seen much evidence that the standard math course sequence is very critical in itself. I think lots of ideas from these courses are essential tools to have on hand. For example: the idea of variables and symbolic manipulation from algebra; the connections between logical arguments and visualization from geometry; characterizing relationships in terms of rates of change from differential calculus; and the idea of taking large sums from integral calculus.

While I do not claim to have a solution for how to get those essential ideas across *outside* the standard basic math curriculum, my intuition is that programming/simulation would get those ideas across in a much more efficient way that makes more clear how these ideas are useful in quantitative reasoning. The point is that simulation (which actually need not be computer based, though obviously that is convenient) helps make it clear how the abstract math operations yield concrete numerical differences, and I feel that this is often the missing link even for students who do well in the standard math foundations.

In summary, I think *elements* of the standard math sequence are important for understanding/using statistics, but I think a better foundational sequence would incorporate programming and simulation since I think that would have a better chance of getting those core elements across in a way that a stats course could build on.

Standard math courses aren’t just about math. Like all science courses, they are about problem solving – building the knowledge to break a problem down into its important factors and relationships, setting up quantitative descriptions of those relationships and using them to solve a problem.

The way to get the essential ideas of any concept across is the same as it has been since the dawn of humanity: practice and repetition. Musicians practice. Athletes practice. Firefighters, policemen, soldiers, airmen and everyone that has a job to do practices that job.

> Standard math courses aren’t just about math. Like all science courses, they are about problem solving – building the knowledge to break a problem down into its important factors and relationships, setting up quantitative descriptions of those relationships and using them to solve a problem.

Yes, the point is to convey this to students efficiently so they can go out and use what we’re teaching them. I haven’t seen much evidence that students pick this up very easily from the standard course sequence, so I think we’re not doing this right.

> practice and repetition

Again, the question is *what* to practice and repeat such that students pick up the skills they need and know how to generalize them outside the domains covered in limited course time. See, for example, the work on “concreteness fading” (terrible name, interesting idea) that starts with concrete problems and gradually transitions toward more abstract ones:

https://link.springer.com/article/10.1007/s10648-014-9249-3

Some thoughts and variations on the above themes, from the perspective of a mathematician who has thought a lot about teaching math, statistics, and problem-solving in general, and teaching math teachers (current and prospective) about these things:

Jim said,

“Standard math courses aren’t just about math. Like all science courses, they are about problem solving – building the knowledge to break a problem down into its important factors and relationships, setting up quantitative descriptions of those relationships and using them to solve a problem.”

I would alter this to say, “standard math courses *shouldn’t* be just about math”. Unfortunately, standard math courses often strip away the problem-solving, and focus just on using and applying prescribed procedures. This is why I volunteered to teach courses for prospective math teachers — because so many of my university students just thought that math is applying algorithms or other prescribed procedures.

Jim also said, “The way to get the essential ideas of any concept across is the same as it has been since the dawn of humanity: practice and repetition.”

I would modify this to say that “practice and repetition” needs to be interpreted as “practice in new contexts” and “repetition with appropriate modifications to fit different contexts”.

Anon said, “Yes, the point is to convey this to students efficiently so they can go out and use what we’re teaching them. I haven’t seen much evidence that students pick this up very easily from the standard course sequence, so I think we’re not doing this right.”

I agree that the standard course sequence as often taught does not prepare students. I think the answer is to make efforts to alter the standard courses to emphasize the general problem-solving principles (e.g., as Jim said, breaking down problems, setting up relationships involved in the problems). For example, instruction needs to include “preparatory” exercises in breaking down problems, describing problem constraints in terms of equations, etc. The concreteness fading that Anon points to is a good idea, but there is also a need for having students practice by using techniques in contexts that differ in a “parallel” direction, as well as going from concrete to abstract.

In case anyone is interested: Here are some of the problems assigned in a class I taught for prospective teachers: https://web.ma.utexas.edu/users/mks/360M05/360Mprob05.html

Many thanks! It turns out my question wasn’t wholly idle. Without going into all the details I’ve become my 11 year old son’s math teacher and after a lot of one-on-one work and drills at the glass dry erase board I’ve installed the little beast is nearly through the 8th grade algebra book and we’ve started Euclid’s elements. We spent a lot of time with balance scales and weights exploring equality, at the board pondering why dividing by 2/3 is the same as multiplying by 3/2 and then one day it clicked.

So, great, and he’s an eager engaged student. However, I’m no teacher and we’ve completely avoided the statistics in the 6th, 7th and 8th grade texts used by his school (which are horrible IMHO – only one even mentions problem solving techniques and that’s found only at the very end and fills just half of a page – so I bought some much more like what I had – fewer pictures and more emphasis on why e.g. completing the square works). Nevertheless, in looking at the state approved texts there’s a clear emphasis on statistics through 10th grade and I worry, among all my other worries about this endeavor, that I’m making a mistake by holding off.

P.S. The sixth grade approved math book has one to two page sections each on “sampling strategies”, “central tendency”, “interpreting data”, “stem-and-leaf plots”, “dealing with outliers”, “interpreting histograms”, “scatter plots”, etc. which I find totally bonkers. Anyway, wish me well on this mad project and hopefully the kid outruns his old man sooner than later so I can hand the project off to someone competent.

I think there’s a lot to be said for computing as an aid to understanding in mathematics. Avoid the “graphing calculator” and go straight to something like R or Python. I bought a “Humble Bundle” of No Starch Press books for a song, with education in mind. One book was called “Doing Math with Python”; you might take a look. There was also “Math Adventures with Python,” which is a little more advanced.

You might also look at Uri Wilensky’s book “Agent Based Models With NetLogo” and Browning et al., “Middle School Science Labs With NetLogo.” The last one I don’t have, and I can’t get a good sense of it from the 3-page Kindle sample, but it’s only $3, so you might as well take a look.

NetLogo offers a way to take real scientific models of phenomena and then play with them and discover how the behavior changes. You can even add your own additional rules to systems and see what happens. With the use of random number generators you can see how sometimes results are insensitive to the details… although say the individual trees that burn may vary, the percentage of the forest that burns is quite consistent to within a small fluctuation… this is the important reason that statistical models work.

You can, for example, run simulations, output the data, and then analyze the data in R by making graphs…
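As a rough illustration of the forest-fire point, here is a small Python sketch. It is not NetLogo's own model: the grid size, tree density, ignition rule, and function names are assumptions chosen for the example. Trees are placed at random, the left edge is set alight, and fire spreads between adjacent trees. Which trees burn changes from run to run, but at a density well above the percolation threshold the percentage burned should vary only a little.

```python
import random
from collections import deque
from statistics import mean, stdev


def percent_burned(size, density, rng):
    """Simulate one fire on a size x size grid; return the percent of trees burned."""
    forest = [[rng.random() < density for _ in range(size)] for _ in range(size)]
    total_trees = sum(sum(row) for row in forest)
    if total_trees == 0:
        return 0.0

    burned = [[False] * size for _ in range(size)]
    queue = deque()
    # Ignite every tree in the left-most column.
    for r in range(size):
        if forest[r][0]:
            burned[r][0] = True
            queue.append((r, 0))
    burned_count = len(queue)

    # Spread fire to the four neighbouring cells (breadth-first).
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < size and 0 <= nc < size:
                if forest[nr][nc] and not burned[nr][nc]:
                    burned[nr][nc] = True
                    burned_count += 1
                    queue.append((nr, nc))
    return 100.0 * burned_count / total_trees


def run_experiment(n_runs=20, size=60, density=0.75, seed=0):
    """Repeat the simulation with different random forests from one seeded generator."""
    rng = random.Random(seed)
    return [percent_burned(size, density, rng) for _ in range(n_runs)]


if __name__ == "__main__":
    results = run_experiment()
    print(f"mean percent burned: {mean(results):.1f}")
    print(f"run-to-run std dev:  {stdev(results):.1f}")
```

Lowering `density` toward the threshold (roughly 0.59 for this kind of grid) should make the run-to-run spread much larger, which is a useful thing for a student to discover by experiment. The per-run results could also be written to a file and plotted in R.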

Daniel: I believe simulation is a game changer for science, especially with respect to applying math to empirical questions.

First it points to the distinction between a representation and what is being represented (a guess at a fake universe and an exact target fake universe), automation of the implications of the guess at a fake universe (taken as true) and how these combine to guide action so that it is less likely to be frustrated when undertaken.

Until recently, access to those ideas was mostly through long and difficult study until it clicks.

Keith, I agree, I particularly think Agent Based models are an important kind of simulation in science. Most interesting scientific situations are the result of multiple things dynamically interacting through time. This is true for tissues in the body, organisms in ecological environments, rubber molecules in the membrane of a balloon, economic actors in a county, countries interacting through political negotiations, drug molecules interacting with disease causing agents… you name it, there is probably more than one thing involved, and the situation probably changes in time. That’s all it takes to make agent based modeling a useful technique.

For the record, this “anonymous” is me–my fault for getting distracted and pressing the “submit” button too soon :-(.

Anyway, many thanks to all for getting into the education side of things, not just because it is interesting/relevant to me personally (and I will totally be stealing* Martha’s excellent and well-earned ideas!) but because that’s where the ultimate solutions to many problems discussed on this blog (including shoddy sleep research and its credulous acceptance by the scientific public) are likely to lie.

* Obviously with attribution! Here, I use “stealing” in the aspirational sense implied by that quote variously attributed to Picasso, Stravinsky, or some other famous dead guy, “good artists borrow, great artists steal.”

In the area of math teaching, “stealing” (or “borrowing”) is the norm — what we nowadays sometimes call “public domain”. Two people I have stolen/borrowed/adapted a lot from are George Polya and Alan Schoenfeld.

You might find the discussion at https://www.mathgoodies.com/articles/problem_solving interesting.

Thank you, very interesting, I’m once again in your debt!

Less serious comment: I like how the article describes how a task can “cease being a ‘puzzle’ and allows it to become a problem”, and how this is described as a positive change. Only in math are “puzzles” not fun and “problems” good!

And in re: stealing in math, that reminds me of Tom Lehrer’s old song about “Lobachevsky” (https://www.youtube.com/watch?v=gXlfXirQF3A).

gec,

I actually heard Tom Lehrer perform this in a house concert (a fund raiser) in 1972.

Awesome, I’m extremely jealous! Does that grant you a Lehrer number? Personally, I’d much rather have one of those than an Erdos number…

(As far as I know he still hangs around Santa Cruz—if we have any readers out there, maybe he can be coaxed into another public performance?)

gec said, “Does that grant you a Lehrer number? Personally, I’d much rather have one of those than an Erdos number…”

If a Lehrer number is the smallest length of a chain of acquaintance from someone to Lehrer, then my Lehrer number is 2, since I knew the guy who hosted the house concert, and he knew Lehrer.

PS: I think my Erdos number is 4 (I wrote a joint paper with someone who wrote a joint paper with I.N. Herstein, whose Erdos number is listed as 2 at https://en.wikipedia.org/wiki/List_of_people_by_Erdős_number#H_2)
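For anyone who wants the definition spelled out, "smallest length of a chain" is just a shortest path in a graph, which a breadth-first search finds. The sketch below is illustrative only: the node names "commenter", "coauthor", "intermediate", and "host" are placeholders, not real people, and the single intermediate link between Herstein and Erdos is an assumption standing in for whatever chain gives Herstein his listed number of 2.

```python
from collections import deque


def chain_length(graph, start, target):
    """Smallest number of links from start to target, or None if unconnected."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        person, dist = queue.popleft()
        if person == target:
            return dist
        for neighbour in graph.get(person, ()):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, dist + 1))
    return None


# Hypothetical co-authorship graph; only Herstein and Erdos are named in the thread.
coauthors = {
    "commenter": ["coauthor"],
    "coauthor": ["commenter", "I.N. Herstein"],
    "I.N. Herstein": ["coauthor", "intermediate"],
    "intermediate": ["I.N. Herstein", "Erdos"],
    "Erdos": ["intermediate"],
}

# Hypothetical acquaintance graph for the house concert.
acquaintances = {
    "commenter": ["host"],
    "host": ["commenter", "Lehrer"],
    "Lehrer": ["host"],
}

if __name__ == "__main__":
    print("Erdos number:", chain_length(coauthors, "commenter", "Erdos"))
    print("Lehrer number:", chain_length(acquaintances, "commenter", "Lehrer"))
```

Under these assumed links the search returns 4 and 2, matching the numbers given above.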

Martha:

My Erdos number is 3, and you’ve commented on this blog, so that gives you an Erdos number of 4 right there!

I don’t think blog comments count for Erdos numbers.

Great question. I would also like to know what math background is absolutely essential. I was not sure I understood some of the statistical tools even though I did well in the statistics course. Go figure.

It’s almost impossible in a “stats 101” type course, with little math background, to give much understanding of the statistical tools.

Some intro stats teachers use classroom simulations that can help somewhat.

A “mathematical statistics” course (which typically has a prerequisite of a probability course, and a calculus course) is likely to give a better idea.

In teaching a continuing education course on “Common Mistakes in Using Statistics”, I have given a summary of some of the results of a mathematical statistics course, starting on p. 11 of the handout “Slides Day 2 Part 1” ( linked from https://web.ma.utexas.edu/users/mks/CommonMistakes2016/commonmistakeshome2016.html), and continuing on Parts 2 and 3 of the Day 2 slides, in the hope that this leads to greater understanding of what hypothesis testing and confidence intervals are and aren’t.

Honestly, I don’t see how “Walker’s claim is reasonable here”.

What book writes:

>vehicular accidents caused by drowsy driving exceed those caused by alcohol and drugs combined

The claim that anonymous blog post addresses:

>Fatal vehicle accidents associated with drowsy driving are estimated to be around 15-20% (see also data from the AAA Foundation for Traffic Safety).

The book: “vehicular accidents caused by drowsy driving”. The blog post: “Fatal vehicle accidents associated with drowsy driving”. It does not even try to respond to the point the book was making or to the point that I was making, but instead was responding to a point vaguely similar to the one seen in the book and in my piece.

The linked paper writes:

>When aggregated to the level of the crash, an estimated 6% (95% CI: 4% – 8%) of all crashes, 7% (95% CI: 5% – 8%) of crashes that result in a person receiving treatment for injuries, 13% (95% CI: 9% – 17%) of all crashes that resulted in a person being hospitalized due to injuries sustained in the crash, and 21% (95% CI: 13% – 28%) of all fatal crashes involved a drowsy driver (Figure 1).

Is the substitution of “Fatal vehicle accidents” for “vehicular accidents” reasonable? It immediately lets that blogger replace 6% with 21%.

Is the substitution of “associated with drowsy driving” for “caused by drowsy driving” reasonable?

The discussed claim follows a pattern encountered across the entire post: it almost always addresses claims that seem like something I wrote, not what I actually wrote.

For example, I wrote:

>people who sleep just 6 hours a day might have the lowest mortality

The blog post has a section called

>Is sleeping 6 or fewer hours per night fine for your health?

It then says:

>Below I describe a non-exhaustive set of studies that speak to this question, broken into three subsections: i) short sleep and the brain, ii) short sleep and the body, and iii) short sleep and genes.

If you look at that section, it never mentions mortality! It talks about short-term effects on brain, short-term effects on the body, and short-term effects on gene expression but does not actually address my point!

Two more points about the section. For some reason it mentions the following:

>Summarized in a recent 2018 review, individuals restricted to short sleep (5.5 hours a night or less) suffered significant decreases in insulin sensitivity (figure below).

This has no relevance to the claim I was making at all.

>Over 700 genes were significantly altered in their expression following one week of short (6 hour/night) sleep. The altered genes included those controlling immune regulation, oxidative stress, inflammatory state, and metabolism.

First, this again does not address mortality, only the short-term response of the body to 6 hours of sleep (this is kind of like me saying “exercise is good for your long-term health” and then getting the response “but right after exercise your genes controlling oxidative stress changed expression!”).

Second, if you look at that subsection, you will note that at no point does it say that these genes changed their expression in a way that negatively affects anything. Only “significantly altered”.

Alexey:

Yes, I agree that it was frustrating that Walker (or the Walker surrogate) on that blog did this thing of quasi-addressing your claims. I’d much rather that he’d just admitted all the errors and gone from there. Walker is not the only academic or media figure to duck, dismiss, or misrepresent criticism—but every time I see this sort of behavior, it saddens me.

Alexey:

I agree that the whole “caused by” thing is tough, and, at best, Walker was being sloppy in that assertion, as in so many assertions in his book. When I say that Walker’s claim is reasonable, perhaps it would be more accurate to say that his claim is not so unreasonable as it seemed at first. First, going from accidents to fatal accidents seems ok, in the sense that arguably we should care more about fatal accidents anyway. Second, upping the drowsy-driving fatal accident by a factor of 15 . . . that really does change the numbers!

I’m completely with you that Walker’s book is riddled with errors. At least, nobody has really made any good counterarguments against that claim of yours. But going through that fatal-accident thing made me think that there was actually something behind Walker’s general claim. So, at least in that one example, it kinda looks like Walker read a bunch of reports, or maybe he did a bunch of googling, or had an assistant read a bunch of things, and he put together various disconnected factoids. In some places, maybe the result made no sense. In other places such as the purported WHO report, the result was exaggeration. In the case of drowsy driving, I feel like the general claim was reasonable, even if overstated and unproven.

Evaluating Why We Sleep as a scholarly work, it’s not much of an excuse to say that, sure, there are tons of errors but some of the claims might kinda be true. So, again, I’m with you on the idea that Why We Sleep should not be taken as any kind of definitive scholarly work, and if Bill Gates or whoever wants to recommend it, they can say how inspiring it is but recognize that many of the details are garbled. And don’t get me started on Ted talks.

In addition to evaluating Why We Sleep as a scholarly work, I’m also interested in whether its claims are approximations to the truth. The book has lots of claims, and so I took the lazy way out and decided to look up one claim that was pure statistics, with no biological content. For that particular claim, the numbers seemed roughly reasonable, conditional on that AAA report, which might be wrong but at least is a credible source.

I agree with your point here. It does seem that there’s a lot of uncertainty in the data and one could claim that many more fatal accidents are associated with drowsy driving. And it does seem that the way the book was written is basically by googling for claims, picking the most egregious one, and then exaggerating it a bit more or just substituting associations for causation.

Another example. In his talk at Google, Walker says:

>Men who sleep 5 hours a night have significantly smaller testicles than those who sleep 8 hours or more.

In his TED Talk, Walker says:

>Men who sleep 5 hours a night have significantly smaller testicles than those who sleep 7 hours or more.

I wrote:

>This [paper] appears to be the only paper that examined the relationship between sleep and testicles size. It does not support either of Walker’s claims.

The claim that the anonymous blog post defends:

>Short sleep duration in males is associated with significantly lower levels of testosterone and reduced testicular size.

But this is not the claim Walker made in his talks and this is not the claim that I addressed!

The paper in question finds a correlation between sleep and testicle size. In his talks, Walker says that the difference between those sleeping 5 and those sleeping 8 hours or more (or 7 hours or more) is “significant”, by which he clearly means statistical significance. The paper never examines whether the difference between those two groups is statistically significant. It is true that it found a significant correlation. The paper however does not support either of Walker’s claims.

Alexey:

Yes, it seems like Walker just does not think quantitatively but rather is applying the lack-of-sleep-is-bad principle over and over again. So he doesn’t care about the details. The details are just there to make a dramatic case.

Also, consider this. Walker had this career writing papers, he got a job at a top university and another job at Google. He wrote a bestselling book that received universally positive reviews from prestigious newspapers and magazines, he’s a Ted talk star, people are throwing money and fame at him . . . He’s received all sorts of positive signals that he’s doing everything right. Then this one guy on the internet comes along and says the emperor has no clothes. Maybe the guy can be ignored, right? Or maybe a response is in order. But it will take a lot for Walker to realize that, when he makes things up, that’s not good science, nor is it good science writing. It’s tough to start over when you’ve received so much acclaim for doing things the wrong way.

Another example of the same pattern.

The book writes:

>Routinely sleeping less than six or seven hours a night demolishes your immune system, more than doubling your risk of cancer.

The blog post writes:

>Several studies, however, have indicated that short sleep is associated with a doubling of risk for specific cancers … It is not correct to suggest, based on epidemiological findings, that sleeping less than 6 or 7 hours causes cancer.

But that blog post never addresses the fact that the book made this strictly causal claim! As a somewhat amusing detail, here’s one of the studies on the association of sleep and cancer that section cites:

>Described in a 2014 study, 2,586 men sleeping 6.5 hours a night or less had more than a two-fold greater risk of lung cancer after adjusting for smoking history (hazard ratio (HR): 2.12 [8]).

This is the linked study: https://www.ncbi.nlm.nih.gov/pubmed/24684747

The study says:

>Significant association between sleep duration and increased lung cancer risk was observed after adjustments for age, examination years, cumulative smoking history, family cancer history and Human Population Laboratory Depression scale scores (HR 2.12, 95% CI 1.17-3.85 for ≤6.5 h sleep, and HR 1.88, 95% CI 1.09-3.22 for ≥8 h sleep). Associations were even stronger among current smokers (HR 2.23, 95% CI 1.14-4.34 for ≤6.5 h sleep, and HR 2.09, 95% CI 1.14-3.81 for ≥8 h sleep).

The study found a lung cancer hazard ratio of 2.12 for 6.5 hours of sleep or less and 1.88 for 8 hours of sleep or more! I have no idea how this supports anything Walker said.
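A rough back-of-envelope check, using only the hazard ratios and intervals quoted from the abstract above, suggests the short-sleep and long-sleep estimates are hard to tell apart. This is a sketch, not the study's own analysis: it recovers approximate standard errors from the 95% intervals on the log scale and, as a loudly flagged assumption, treats the two estimates as independent even though they share a reference group.

```python
import math

Z95 = 1.96  # normal quantile for a two-sided 95% interval


def log_hr_and_se(hr, lower, upper):
    """Return (log hazard ratio, approximate standard error) from a 95% CI."""
    se = (math.log(upper) - math.log(lower)) / (2 * Z95)
    return math.log(hr), se


def z_for_difference(a, b):
    """z statistic for the difference between two log hazard ratios.

    ASSUMPTION: the two estimates are treated as independent. In the study
    they share a reference group, so this is only a rough approximation.
    """
    diff = a[0] - b[0]
    se = math.sqrt(a[1] ** 2 + b[1] ** 2)
    return diff / se


# Figures quoted from the abstract (fully adjusted model).
short_sleep = log_hr_and_se(2.12, 1.17, 3.85)  # <= 6.5 h of sleep
long_sleep = log_hr_and_se(1.88, 1.09, 3.22)   # >= 8 h of sleep

z = z_for_difference(short_sleep, long_sleep)

if __name__ == "__main__":
    print(f"z for short-sleep vs long-sleep hazard ratio: {z:.2f}")
```

Under these assumptions the z statistic comes out well below 1.96, which is consistent with the point that the study reports a similar association at both ends of the sleep range rather than something specific to short sleep.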

Another example. The book says:

>Two-thirds of adults throughout all developed nations fail to obtain the recommended eight hours of nightly sleep.

I wrote:

>No, two-thirds of adults in developed nations do not fail to obtain the recommended amount of sleep

The blog post writes:

>Are two-thirds of adults failing to obtain 8 hours of nightly sleep, and does sleep opportunity matter?

The data that *I linked* show that approximately two-thirds of adults fail to obtain 8 hours of sleep. The issue was with Walker turning the recommendation of 7-9 hours of sleep into a recommendation of 8 hours. That section completely fails to address it.

Another example. The book writes:

>[T]he World Health Organization (WHO) has now declared a sleep loss epidemic throughout industrialized nations.

The blog post writes while linking to http://www.accqsleeplabs.com/wp-content/uploads/2014/10/CDC-report.pdf:

>The Centers for Disease Control (CDC) has stated that, “Insufficient sleep is a public health epidemic.”

And:

>The book’s misattribution of the CDC statement to the WHO will be corrected in the next edition.

Note that the author of that blog post links to a pdf on some random site not associated with CDC. That pdf says that CDC has declared a sleep loss epidemic. Why doesn’t the author link to CDC directly? The answer is that at the time of their response (and at the time of the book’s publication), CDC had no documents and no pages on its site that would declare a sleep loss epidemic.

As I noted in section “Possible origin of the “sleeplessness epidemic” thing”,

>Between late 2010/early 2011 and August/September 2015 [1-4], CDC had a page on its site titled “Insufficient Sleep Is a Public Health Epidemic”. More than 2 years before Why We Sleep was published, the page changed the word “epidemic” to “problem”, so that its title became “Insufficient Sleep Is a Public Health Problem”. … the fact that CDC itself changed the wording from “epidemic” to “problem” more than 2 years prior to the book’s publication, indicat[es] that they no longer believed in the presence of an “insufficient sleep epidemic”

Issuing a correction that would just change “WHO” to “CDC” in the next edition of the book – as the anonymous author suggested – is deceptive. The CDC long ago (more than 4 years ago) removed the word “epidemic” from the article, and then removed the article itself.

If the author believes that this pdf is sufficient to state that CDC has declared a sleep loss epidemic, they might as well say that CDC has declared an epidemic of inhalation anthrax (and forget to note that it was declared in 1958). [5]

Finally, the author does not answer why the source for this sleep loss epidemic claim in the book is a random National Geographic documentary.

[1] https://perma.cc/72CZ-L9DG

[2] https://perma.cc/23UM-849W

[3] https://perma.cc/2E68-ZRL9

[4] https://perma.cc/UJ4W-JHNY

[5] https://www.cdc.gov/museum/timeline/1940-1970.html https://perma.cc/T6JN-SNSQ

Estimating the % of drowsy drivers correctly is difficult, but so is estimating the proportion of those who were under the influence AND were also drowsy (after the fact).

Despite all the errors in the book, it would be foolish to think that sleep deprivation has no effect on us.

Evolution somehow did not manage to get rid of sleep. Animals are the most vulnerable when asleep and yet we still ‘practice’ it all the time.

Cognitive performance goes south big time when we are sleep deprived. Now, nitpicking by how much and compared to this and that is trivial. There are big individual differences, but still nobody thrives when sleep deprived.

The book has some very useful points. Although with all sleep research available, we still don’t know why we sleep, we know enough to implement some policies. It’s been established that teenagers for some reason stay up late and get up late, yet all schools still start early in the morning for parents’ convenience. Same with shift work or jetlag, etc. There are other examples where current sleep research findings could be used to improve quality of life and safety.

“Walker has a message to send, and he doesn’t care much about the details. He’s sloppy with sourcing, gets a lot wrong, and has not responded well to criticism.” sounds like a great scholar.

Antonio:

Maybe not a great scholar, but good enough for a tenured professorship at the University of California!

Also don’t forget copy-pasting papers: https://guzey.com/books/why-we-sleep/#walker-copy-pasting-papers

Alexey:

Yes, copy-pasting papers is bad. If he does much more of that, the psychology establishment will have to give him some awards like this: