This is the second of a series of two posts.

Yesterday we discussed the difficulties of learning from a small, noisy experiment, in the context of a longitudinal study conducted in Jamaica where researchers reported that an early-childhood intervention program caused a 42%, or 25%, gain in later earnings. I expressed skepticism.

Today I want to talk about a paper making an opposite claim: “Canada’s universal childcare hurt children and families.”

I’m skeptical of this one too.

Here’s the background. I happened to mention the problems with the Jamaica study in a talk I gave recently at Google, and afterward Hal Varian pointed me to this summary by Les Picker of a recent research article:

In Universal Childcare, Maternal Labor Supply, and Family Well-Being (NBER Working Paper No. 11832), authors Michael Baker, Jonathan Gruber, and Kevin Milligan measure the implications of universal childcare by studying the effects of the Quebec Family Policy. Beginning in 1997, the Canadian province of Quebec extended full-time kindergarten to all 5-year olds and included the provision of childcare at an out-of-pocket price of $5 per day to all 4-year olds. This $5 per day policy was extended to all 3-year olds in 1998, all 2-year olds in 1999, and finally to all children younger than 2 years old in 2000.

(Nearly) free child care: that’s a big deal. And the gradual rollout gives researchers a chance to estimate the effects of the program by comparing children at each age, those who were and were not eligible for this program.
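The comparison this sets up is, at its simplest, a difference in differences: the change in an outcome for the eligible group minus the change over the same period for a comparison group. Here is a minimal Python sketch of that arithmetic. The function name and all four numbers are made up for illustration; they are not taken from the paper, whose models are more elaborate.

```python
def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Change in the treated group minus change in the comparison group."""
    return (treated_after - treated_before) - (control_after - control_before)


# Hypothetical mean scores on some outcome scale (illustrative only).
estimate = diff_in_diff(
    treated_before=1.00,  # eligible group, before the program
    treated_after=1.30,   # eligible group, after the program
    control_before=1.00,  # comparison group, before
    control_after=1.10,   # comparison group, after
)
print(f"difference in differences = {estimate:.2f}")
```

The estimate has a causal reading only if the two groups would have moved in parallel without the program, which is exactly the kind of assumption that is hard to check with noisy data.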

The summary continues:

The authors first find that there was an enormous rise in childcare use in response to these subsidies: childcare use rose by one-third over just a few years. About a third of this shift appears to arise from women who previously had informal arrangements moving into the formal (subsidized) sector, and there were also equally large shifts from family and friend-based child care to paid care. Correspondingly, there was a large rise in the labor supply of married women when this program was introduced.

That makes sense. As usual, we expect elasticities to be between 0 and 1.

But what about the kids?

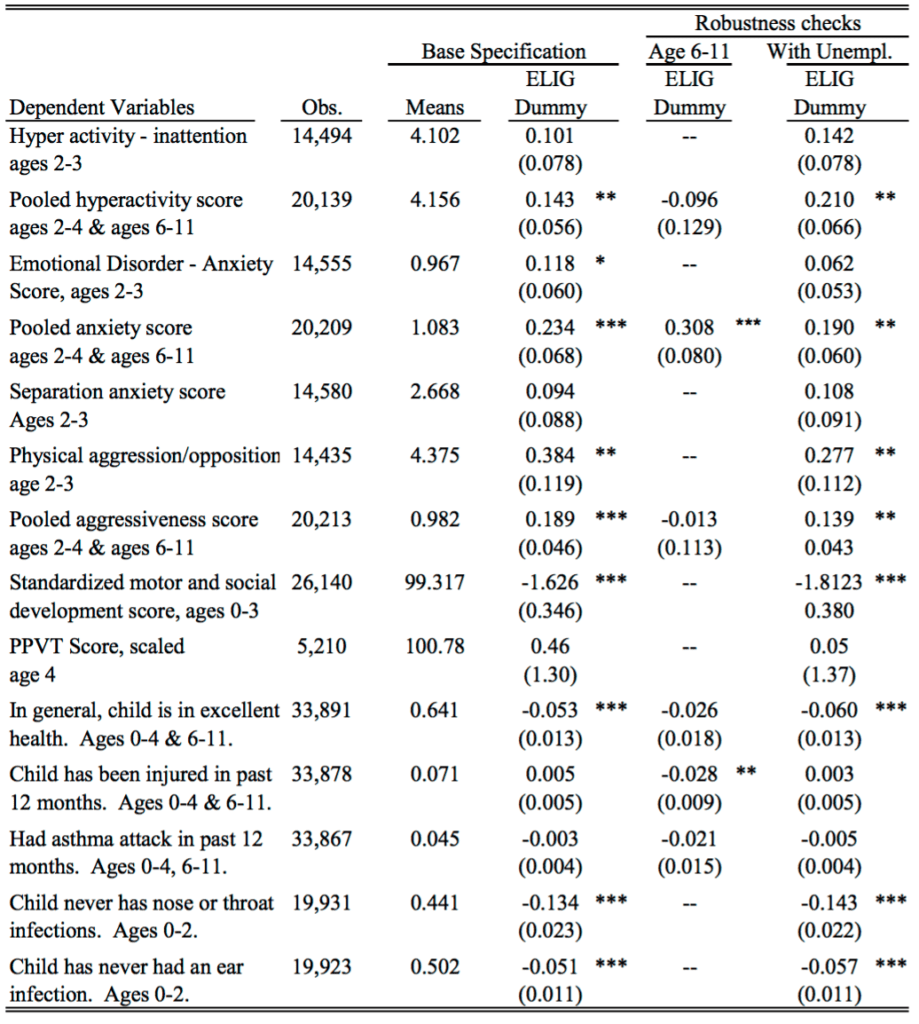

Disturbingly, the authors report that children’s outcomes have worsened since the program was introduced along a variety of behavioral and health dimensions. The NLSCY contains a host of measures of child well being developed by social scientists, ranging from aggression and hyperactivity, to motor-social skills, to illness. Along virtually every one of these dimensions, children in Quebec see their outcomes deteriorate relative to children in the rest of the nation over this time period.

More specifically:

Their results imply that this policy resulted in a rise of anxiety of children exposed to this new program of between 60 percent and 150 percent, and a decline in motor/social skills of between 8 percent and 20 percent. These findings represent a sharp break from previous trends in Quebec and the rest of the nation, and there are no such effects found for older children who were not subject to this policy change.

Also:

The authors also find that families became more strained with the introduction of the program, as manifested in more hostile, less consistent parenting, worse adult mental health, and lower relationship satisfaction for mothers.

I just find all this hard to believe. A doubling of anxiety? A decline in motor/social skills? Are these day care centers really that horrible? I guess it’s possible that the kids are ruining their health by giving each other colds (“There is a significant negative effect on the odds of being in excellent health of 5.3 percentage points.”)—but of course I’ve also heard the opposite, that it’s better to give your immune system a workout than to be preserved in a bubble. They also report “a policy effect on the treated of 155.8% to 394.6%” in the rate of nose/throat infection.

OK, here’s the research article.

The authors seem to be considering three situations: “childcare,” “informal childcare,” and “no childcare.” But I don’t understand how these are defined. Every child is cared for in some way, right? It’s not like the kid’s just sitting out on the street. So I’d assume that “no childcare” is actually informal childcare: mostly care by mom, dad, sibs, grandparents, etc. But then what do they mean by the category “informal childcare”? If parents are trading off taking care of the kid, does this count as informal childcare or no childcare? I find it hard to follow exactly what is going on in the paper, starting with the descriptive statistics, because I’m not quite sure what they’re talking about.

I think what’s needed here is some more comprehensive organization of the results. For example, consider this paragraph:

The results for 6-11 year olds, who were less affected by this policy change (but not unaffected due to the subsidization of after-school care) are in the third column of Table 4. They are largely consistent with a causal interpretation of the estimates. For three of the six measures for which data on 6-11 year olds is available (hyperactivity, aggressiveness and injury) the estimates are wrong-signed, and the estimate for injuries is statistically significant. For excellent health, there is also a negative effect on 6-11 year olds, but it is much smaller than the effect on 0-4 year olds. For anxiety, however, there is a significant and large effect on 6-11 year olds which is of similar magnitude as the result for 0-4 year olds.

The first sentence of the above excerpt has a cover-all-bases kind of feeling: if results are similar for 6-11 year olds as for 2-4 year olds, you can go with “but not unaffected”; if they differ, you can go with “less affected.” Various things are pulled out based on whether they are statistically significant, and they never return to the result for anxiety, which would seem to contradict their story. Instead they write, “the lack of consistent findings for 6-11 year olds confirm that this is a causal impact of the policy change.” “Confirm” seems a bit strong to me.

The authors also suggest:

For example, higher exposure to childcare could lead to increased reports of bad outcomes with no real underlying deterioration in child behaviour, if childcare providers identify negative behaviours not noticed (or previously acknowledged) by parents.

This seems like a reasonable guess to me! But the authors immediately dismiss this idea:

While we can’t rule out these alternatives, they seem unlikely given the consistency of our findings both across a broad spectrum of indices, and across the categories that make up each index (as shown in Appendix C). In particular, these alternatives would not suggest such strong findings for health-based measures, or for the more objective evaluations that underlie the motor-social skills index (such as counting to ten, or speaking a sentence of three words or more).

Health, sure: as noted above, I can well believe that these kids are catching colds from each other.

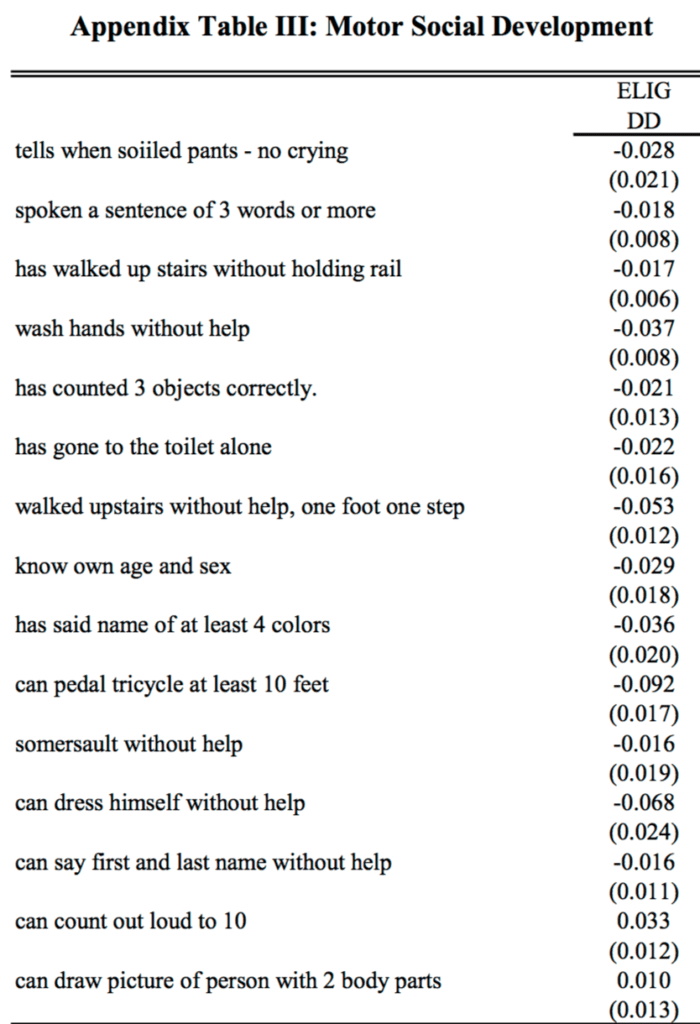

But what about that motor-skills index? Here are their results from the appendix:

I’m not quite sure whether + or – is desirable here, but I do notice that the coefficients for “can count out loud to 10” and “spoken a sentence of 3 words or more” (the two examples cited in the paragraph above) go in opposite directions. That’s fine—the data are the data—but it doesn’t quite fit their story of consistency.

More generally, the data are addressed in a scattershot manner. For example:

We have estimated our models separately for those with and without siblings, finding no consistent evidence of a stronger effect on one group or another. While not ruling out the socialization story, this finding is not consistent with it.

This appears to be the classic error of interpretation of a non-rejection of a null hypothesis.
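To see why a non-rejection is weak evidence here, it helps to ask how often a subgroup comparison would detect a difference that really exists. The sketch below computes the power of a two-sided z-test on the difference between two subgroup estimates. The true difference (0.2) and the standard errors (0.15 each) are hypothetical values chosen for illustration, not numbers from the paper, and the estimates are assumed to be independent.

```python
import math
from statistics import NormalDist


def power_to_detect_difference(true_diff, se_a, se_b, alpha=0.05):
    """Probability that a two-sided z-test on (estimate_a - estimate_b) rejects zero."""
    # Standard error of the difference, assuming independent subgroup estimates.
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = true_diff / se_diff  # true difference in standard-error units
    # Reject when the z-statistic falls in either tail.
    return (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)


# Hypothetical inputs: a real subgroup difference of 0.2, each estimate with SE 0.15.
power = power_to_detect_difference(true_diff=0.2, se_a=0.15, se_b=0.15)
print(f"power = {power:.2f}")
```

With inputs like these the power is far below one half, so "no consistent evidence of a stronger effect" is what you would usually see even if the socialization story were true.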

And here’s their table of key results:

As quantitative social scientists we need to think harder about how to summarize complicated data with multiple outcomes and many different comparisons.

I see the current standard ways to summarize this sort of data are:

(a) Focus on a particular outcome and a particular comparison (choosing these ideally, though not usually, using preregistration), present that as the main finding and then tag all else as speculation.

Or, (b) Construct a story that seems consistent with the general pattern in the data, and then extract statistically significant or nonsignificant comparisons to support your case.

Plan (b) was what was done again, and I think it has problems: lots of stories can fit the data, and there’s a real push toward sweeping any anomalies aside.

For example, how do you think about that coefficient of 0.308 with standard error 0.080 for anxiety among the 6-11-year-olds? You can say it’s just bad luck with the data, or that the standard error calculation is only approximate and the real standard error should be higher, or that it’s some real effect caused by what was happening in Quebec in these years—but the trouble is that any of these explanations could be used just as well to explain the 0.234 with standard error 0.068 for 2-4-year-olds, which directly maps to one of their main findings.
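The arithmetic behind that comparison is short enough to write out. The sketch below takes the two coefficients and standard errors quoted above, computes each z-score, and then computes the difference between the two estimates with its standard error. Treating the two estimates as independent is an assumption made here for simplicity; the paper does not say so.

```python
import math


def z_score(estimate, std_error):
    """Estimate divided by its standard error."""
    return estimate / std_error


def difference(est_a, se_a, est_b, se_b):
    """Difference of two estimates and its standard error (independence assumed)."""
    return est_a - est_b, math.sqrt(se_a ** 2 + se_b ** 2)


# Anxiety coefficients as quoted in the text.
older_est, older_se = 0.308, 0.080      # 6-11-year-olds
younger_est, younger_se = 0.234, 0.068  # 2-4-year-olds

z_older = z_score(older_est, older_se)
z_younger = z_score(younger_est, younger_se)
diff, se_diff = difference(older_est, older_se, younger_est, younger_se)

print(f"z (6-11) = {z_older:.2f}, z (2-4) = {z_younger:.2f}")
print(f"difference = {diff:.3f}, se = {se_diff:.3f}, z = {diff / se_diff:.2f}")
```

Both estimates are more than three standard errors from zero, and the difference between them is less than one standard error from zero, so the data give little basis for treating one as signal and the other as noise.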

Once you start explaining away anomalies, there’s just a huge selection effect in which data patterns you choose to take at face value and which you try to dismiss.

So maybe approach (a) is better—just pick one major outcome and go with it? But then you’re throwing away lots of data, that can’t be right.

I am unconvinced by the claims of Baker et al., but it’s not like I’m saying their paper is terrible. They have an identification strategy, and clean data, and some reasonable hypotheses. I just think their statistical analysis approach is not working. One trouble is that statistics textbooks tend to focus on stand-alone analyses—getting the p-value right, or getting the posterior distribution, or whatever, and not on how these conclusions fit into the big picture. And of course there’s lots of talk about exploratory data analysis, and that’s great, but EDA is typically not plugged into issues of modeling, data collection, and inference.

What to do?

OK, then. Let’s forget about the strengths and the weaknesses of the Baker et al. paper and instead ask, how should one evaluate a program like Quebec’s nearly-free preschool? I’m not sure. I’d start from the perspective of trying to learn what we can from what might well be ambiguous evidence, rather than trying to make a case in one direction or another. And lots of graphs, which would allow us to see more in one place, that’s much better than tables and asterisks. But, exactly what to do, I’m not sure. I don’t know whether the policy analysis literature features any good examples of this sort of exploration. I’d like to see something, for this particular example and more generally as a template for program evaluation.

You make all sorts of good points – particularly that a real ‘no childcare’ situation is an absolute crisis. One or both parents staying home is very different from children shuttled, say, between various relatives, or non-relatives.

As a microbiologist working with infectious disease and the microbiome, I must say: there is no evidence that acute childhood illnesses such as colds, GI infections, and the flu passed around at daycare provide any immunological or developmental benefits. There is theoretical and observational evidence – even some experimental evidence – that other kinds of microbial exposures, including worm infections, provide some benefits. There is very strong evidence that living an agricultural life with traditional animal labor (instead of machinery) and without pets in the home, eating mostly home grown produce and meat, and with the family cleaning the home in traditional ways, provides huge immunological benefits, for reasons which are unclear at this time (reduction in asthma rates from ~20% to ~5%).

It’s difficult to place much confidence in interpretations of investigators who report percentage gains on variables (i.e., anxiety, social or motor skills) that are, at best, being measured on interval scales.

Regarding your last paragraph, won’t looking at lots of graphs lead you to the garden of forking paths?

Silly personal anecdote: When I was four my parents placed me in a preschool/daycare situation that had a reputation for producing advanced academic skills. It was run by Carmelites. To this day I recall being very scared of the nuns who were quite authoritarian. Yes, formal daycare increased anxiety at least for this guy. And yes, the interpersonal atmosphere did favor aggressive behavior on the playground compared to sitting at home at Momma’s knee.

The one thing I pick up from Andrew’s description (I haven’t read the paper) that would seem to make sense is the “large” increase in numbers of women going into the workforce. So the story could be “most of the 14 characteristics we measured changed in an undesirable direction, and this correlates with the amount of employment among their mothers”. Then model those changes in a multilevel model conditioned on the amount of outside hours the mothers worked.

Then you might be able to see something, if there were a “real” effect.

Of course, we don’t know how independent all these measured traits are, either. That makes it harder to work through what if anything the results mean.

Another thing, one I don’t know the best way to handle, is when, as here, many indicators tend to be on one side or the other, but most are not (statistically) significantly so. That seems to be the case here; it would be easier to grasp if they changed the sign of some of the indicators so that e.g., a negative value always meant an undesirable direction.

Suppose instead the topic was “How effective or counterproductive is a parachute?” as seen by this famous satirical paper entitled “Parachute use to prevent death and major trauma related to gravitational challenge: systematic review of randomised controlled trials”

http://www.bmj.com/content/327/7429/1459

The similarity between a parachute and universal child care is that we “know” it is a good thing and the issue is how much is the cost of implementation. With the just-concluded election, funding for government programs in general, and child care in particular, will be impossible. The economically well off in the U.S. capitalist society will have universal child care and the rest won’t.

Nitpicking: If only the well off have it how is it universal?

In dealing with these kinds of multi-variate outcomes, the only way to determine whether an overall situation is “better” or “worse” is to map that situation to some scalar quantity.

Of course, different people like different things: some women may absolutely hate being stay at home moms and much prefer the situation where they work full time and someone else takes care of their kids during work hours. Others may prefer to stay home. Same goes for fathers. Lots of people would probably like a mixture (say part-time work and part time being with children). It’s complicated. But a first pass at all this might just be to create a linear utility scale for all those “can dress themselves” and “can count to 10” and so forth… and then add them all up. Taking an expectation over a posterior probability would be even better. Generally I think this should produce less noisy results and it’s a good step in the right direction towards a useful full utility model.

Certainly compared to “outcome X had a statistically significant coefficient and outcome Y didn’t and therefore … blablabla story time useless noise chasing etc”
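A minimal sketch of that first pass, in Python. The outcome names, the weights, and the two "posterior draws" are all made up for illustration; a real analysis would take its draws from a fitted model and would have to argue for its weights. The sign of each weight encodes direction, so a negative total always means "worse".

```python
from statistics import fmean

# Hypothetical weights: positive if a higher score is desirable,
# negative if a higher score is undesirable. Illustrative only.
WEIGHTS = {"counts_to_ten": 1.0, "dresses_self": 1.0, "anxiety": -1.0}


def utility(effects, weights=WEIGHTS):
    """Linear utility: weighted sum of the estimated effects on each outcome."""
    return sum(weights[name] * effects[name] for name in weights)


def expected_utility(posterior_draws, weights=WEIGHTS):
    """Average the utility over posterior draws (each draw maps outcome -> effect)."""
    return fmean([utility(draw, weights) for draw in posterior_draws])


# Made-up draws standing in for a posterior sample of policy effects.
draws = [
    {"counts_to_ten": 0.10, "dresses_self": -0.05, "anxiety": 0.20},
    {"counts_to_ten": 0.00, "dresses_self": 0.05, "anxiety": 0.30},
]
print(f"expected utility = {expected_utility(draws):.2f}")
```

Averaging over draws rather than plugging in point estimates carries the uncertainty in each coefficient through to the scalar summary, which is the point of the "expectation over a posterior" suggestion.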

First, thank you for such a well-developed post. I wish I had time to be as thoughtful in response.

Second, I think the answer is in your last paragraph in your line: “I’m not sure.” I’m not even sure we can list factors. Example: putting aside genetics to focus on environment, how can one isolate schooling at an early age from family, from lack of family, from lousy family? If good effects of early school are considered real, does the extent to which they last really reflect the family? If so, how much effect was there in the first place? I could go on and on and on.

Now for anecdote. I’ve worked with groups trying to train parents so they can be involved in their kids’ schooling, literally teaching them in some cases how to ask specific questions of teachers and administrators. My wife teaches young children. My experience is that reasonably intelligent adults have no clue and that only a relatively small subset are willing to take on the burdens of learning how to advocate/interact and then actually doing that with their kids, with schools. I mean specifically not that they don’t care, because they do, but that any choice requires an effort and they are generally unable or unwilling to take on those efforts, in part because their communities don’t have those levels of educational and career aspirations which apply pressure on parents and students.

My wife’s comment would be more that a shocking number of parents are all the way to toxic and insane, that they are detriments to their children’s learning and that the children learn because of genetics, because they’re children and are naturally curious and because everyone grows up and masters some degree of life skill. This is among communities with high achievement standards and high pressures to achieve. I believe many of these children learn simply because the pressure applied to them in general by family and community pushes them along, even as their parents damage them emotionally and so on.

I’ve read the research on peer effects, etc. I don’t see a way to pull these threads apart. I think early childhood education is an ideal and a good thing if you’ve ever worked with kids but I’ve largely given up the idea that its effects can be quantified because those can’t be unraveled from so much else. I think the proper way to evaluate this is simply this: ever met a child? If you have then you know that helping that child learn and learn how to learn and to observe is one of the great things in life. That’s why we should do early childhood learning. We can pursue ways of quantifying but in this area I’d say the inability to quantify and the constant urge to do so distracts us from the powerful lesson we’ve each experienced in our lives, that learning is the key to life, that learning how to learn and observe is the most important thing.

Thank you for this analysis. I found this point particularly important:

“One trouble is that statistics textbooks tend to focus on stand-alone analyses—getting the p-value right, or getting the posterior distribution, or whatever, and not on how these conclusions fit into the big picture.”

Another problem is that researchers do not always write clearly; this contributes to confusion of ideas. The quotes you provide from this paper are difficult to read–not because of the technical language but because of syntactical and logical obscurity. I am not sure what they are saying–and this ambiguity affects the whole argument. I don’t mean to insult the authors; it’s a widespread problem.

One partial remedy would be a thorough edit by someone reading for clarity and logic. A sentence like “While not ruling out the socialization story, this finding is not consistent with it” would get stopped in its tracks.

Clearer writing would also give more insight into the big-world picture, or at least it could.

I am rather concerned that the researchers excluded single-parent families. Perhaps there is a good technical reason for this that I missed, as I just skimmed the paper, but this is excluding a large proportion of the population served by the Quebec Family Policy.

StatCan reports single parent families were 25% of the total in Canada in 2011 and we know that many single parent families are below the poverty line and often on Social Assistance. I think we have a serious sampling problem.

> more generally as a template for program evaluation.

Are we being a bit arrogant to think we can profitably learn about anything but dramatic effects from observations that are available in policy areas like this?

Just from an economics of research perspective, it does not look very good. Medicine has access to much better observations (e.g. randomized trials and increasingly improving and accessible individual patient records) but after 30+ years – evidence based medicine has rarely been truly profitable.

Some casual comments.

Quebec is somewhat unusual (if I stand on my roof, I can see it) and the context will be important to understand.

The important outcomes need careful thought, the child who can’t count might not wet their pants and what do those assessments tell us about life time abilities and health? The increase in colds was a dramatic effect and not surprising but we have no evidence about whether that is good or bad in the long run. Increasing bilingualism arguably is an important outcome (about 8% of Canadians are functionally bilingual and it is almost required for employment in the civil service) but was it assessed/assess-able?

I looked for citations of the paper on google scholar and there look to be dozens of related studies, many with new sources of observations in different countries. A thoughtful systematic review of this other information would likely be 3 – 6 months full time work, with the most likely result being that it can’t be made sense of.

Quebec is somewhat unusual (if I stand on my roof, I can see it)

Get down off the roof, it’s too cold and windy to be up there today.

Quebec is somewhat unusual

I was thinking this as well. I enjoyed my ten years living in the province.

It would be interesting to see if StatCan has done some province-level comparisons of the questionnaires if they could be done. I didn’t poke around the site long enough to see if they had.

Another issue here is that the study was done in 2005 and so it is about a population from 10+ years ago, and one needs to worry that with the internet it is a very different world (or soon will be).

So add to the difficulties of getting evidence here – it has a very short shelf life.

Don’t know what StatCan has for this sort of thing, my guess would be not much that researchers can legally get access to …

Note that the paper is published in the Journal of Political Economy in 2008 (Jstor link here). As you often argue, being peer-reviewed, even in the top journals is no guarantee that the paper is correct, but it’s important to mention that it’s been published in what many consider the best economics journal.

http://www.jstor.org/stable/10.1086/591908

Jack:

I don’t know about this journal but my guess is that econ journals are like psychology journals in that they like to publish newsworthy papers. A paper that claims that child care is effective, or a paper that claims that child care makes things worse: either of these seems like it’s making a real difference in the world; it seems important. A paper with a bunch of data analysis saying that the results are mixed: not so exciting.