I hate to keep bumping our scheduled posts but this is just too important and too exciting to wait. So it’s time to jump the queue.

The news is a paper from Michael Betancourt that presents a super-cool new way to compute normalizing constants:

A common strategy for inference in complex models is the relaxation of a simple model into the more complex target model, for example the prior into the posterior in Bayesian inference. Existing approaches that attempt to generate such transformations, however, are sensitive to the pathologies of complex distributions and can be difficult to implement in practice. Leveraging the geometry of thermodynamic processes I introduce a principled and robust approach to deforming measures that presents a powerful new tool for inference.

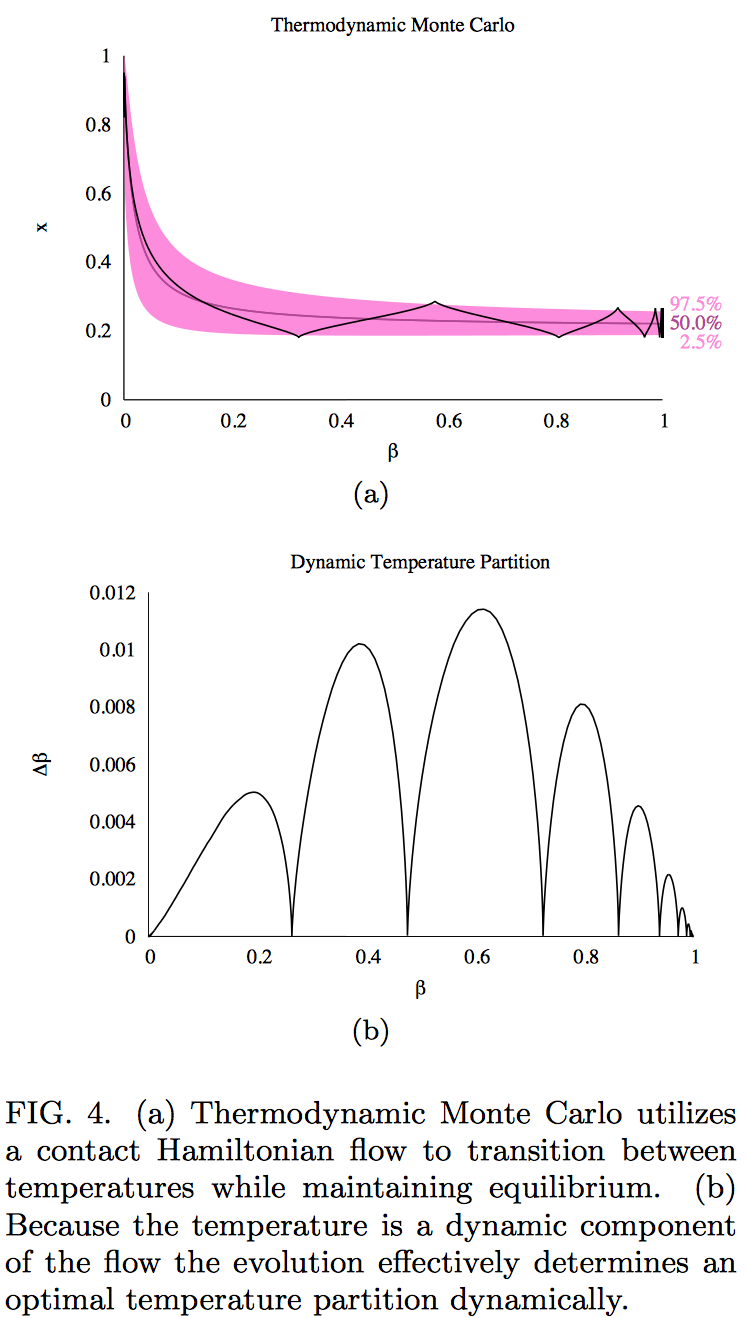

The idea is to generalize Hamiltonian Monte Carlo so that it moves through a family of distributions (that is, it transitions through an “inverse temperature” variable called beta that indexes the family) as a way of continuously sweeping through to efficiently compute the normalizing function. The graphs above show a single HMC path with the temperature going from 0 to 1 on a simple test example. When running the algorithm, this would represent one of many simulated paths. As Betancourt points out, “What’s cool about this is that once you have a sample from the base distribution you only need one transition to get a sample from the target distribution, albeit an expensive transition. This should make seeding and mode exploration really fast.”

The background for the algorithm is as follows. Methods such as simulated annealing and simulated tempering are based on altering a temperature parameter. The basic idea is that you want to simulate from some distribution p(theta) but it’s too difficult, so you start from a simpler distribution p_0(theta) that you understand well, and then you work with the class of distributions p(theta|beta) = p_0(theta)^(1-beta)*p(theta)^beta. You start at beta=0 (“high temperature”) and gradually move toward beta=1 (“low temperature”). In simulated annealing or tempering, you gradually increase beta, not too slowly (or you’ll be wasting your time) and not too fast (or you’ll break the smooth operation of your simulations, assuming this is all embedded in some Markov chain simulation algorithm). You’ll want to move fast in areas where the distributions are changing slowly as a function of beta, slowly in areas where the distributions are changing fast, and you’ll want to stay still for awhile where there are phase transitions. And then once you have all your simulations you can estimate the normalizing function using path sampling. It works, but it’s clunky as it requires lots of outside tuning. It’s nothing close to a black box.

The physical analogy of tempering or annealing is clear—but the interesting thing is that it has problems. In particular, you can’t actually set or change the temperature of a physical system. What you can do is couple your system to a heat bath or cold bath and then let the temperature change naturally. That is, you can connect your system to a heat pump or a refrigerator, you can’t stick it in the microwave oven or in a (nonexistent) “microwave cooler.”

What does this imply for statistical algorithms for simulating from difficult distributions and computing normalizing constants? It implies that if we want to follow the physics, we should not have our algorithm “change the temperature” as if there were some kind of knob to set this state variable. Instead, we should alter the temperature in a more natural, physical way (that is, respecting the underlying differential equations) by mathematically coupling the system with a heat bath or cold bath and letting the temperature then evolve as it will. This is what Betancourt’s thermodynamic Monte Carlo algorithm does. I don’t follow the details of the algorithm (I’m waiting till it appears in Stan) but the basic idea makes a lot of sense to me, as it follows the Folk Theorem and the Pinocchio principle.

And here’s some more intuition from Michael on what the algorithm is doing:

In the thermodynamic evolution we have three things going on. Firstly, we have a usual dynamic evolution that mixes the positions and the momenta. Secondly we have a heat bath that adds or removes momentum depending on current potential energy relative to the average potential energy. Thirdly we have a temperature evolution term that leaches kinetic energy in order to fuel changes in temperature.

When the system is in equilibrium the kinetic energy is nonzero, some of it can be drained away to change the temperature, and then the system re-equilibrates by adding/removing kinetic to balance the average potential energy and then mixing the positions and momenta again. But when the system is far away from equilibrium the kinetic energy will approach zero and the temperature evolution stalls until the positions and momenta equilibrate: the three evolution terms feed back into each other to maintain equilibrium.

Then let’s consider what happens in Figure 4. When the trajectory is in the bulk of probability mass we have equilibrium and the temperature can evolve forward smoothly. When the trajectory approaches a tail all of the energy converts to potential and there’s little kinetic energy to fuel the temperature evolution, which slows to a crawl. This gives the trajectory a chance to bounce back towards the bulk, converting the potential back into kinetic energy which then restarts the temperature evolution.

Thanks for posting this. It looks really clever and promising.

I’d be interested to see how this compares with Radford Neal’s “tempered transitions”, which seems more similar than the other methods discussed in the paper: http://citeseerx.ist.psu.edu/viewdoc/download;jsessionid=3211FB79D5E848019BE6288A3D1016FB?doi=10.1.1.47.4403&rep=rep1&type=pdf

Tempered transitions is similar in that each sample in the MCMC chain comes from a long annealing run, so samples are individually expensive but very independent.

I’d be especially interested in hearing how the two methods compare on multimodal distributions, which seem to be a problem for thermodynamic Monte Carlo based on the last section?

Thanks again for posting this. It looks really cool.

I haven’t understood Betancourt’s paper yet, but it seems more likely to be related to my tempered Hamiltonian Monte Carlo methods (see the the last section of my review at http://www.cs.utoronto.ca/~radford/ham-mcmc.abstract.html) than to tempered transitions, which needn’t have anything to do with Hamiltonian dynamics. Or perhaps Betancourt’s method is related to Nose dynamics:

Nos\'{e}, S. (1984) “A unified formulation of the constant temperature molecular dynamics methods”, {\em Journal of Chemical Physics}, vol.~81, pp.~511-519.

Radford,

The implementation of this will look like your temperated HMC, but it will include added terms that essentially remove the problem of all not being able to remove all of the energy injected into momenta. A transition from the target to the base back to the target maintains the correct invariant distribution so that, modulo integrator concerns, the acceptance probability will be one.

This is also not Nose dynamics, as I’m simulating an adiabatic cooling and not the exploration of a given (constant temperature) canonical distribution.

Ultimately the relationships are

HMC : classical dynamics : symplectic geometry

ThermoMC: thermodynamics : contact geometry

Annealing and tempering attempt thermodynamic algorithms but without incorporating the full contact geometry they run into the known pathologies.

If you have any questions please don’t hesitate to throw me an email!

this reminds me of parallel tempering / replica exchange, is it related?

Both Andrew’s post above and the paper itself describe how thermodynamic sampling is a better physically motivated approach to the same problem (that’s the setting a schedule vs. applying a heat bath/sink). So it’s related in motivation, but not very closely in execution.

Parallel tempting is a means of improving simulated tempering, but you still run into the same pathologies from the rigid temperature partition that are discussed in the paper. Hence the relationship to parallel tempering is basically the same as the relationship to simulated tempering.

Do you know where I can find a good primer on the math behind your thermo stuff — differential forms, symplectic manifolds, contact flows and so on?

For someone with some math background but no geometry I really like Part I of http://www.worldscientific.com/worldscibooks/10.1142/2324. After that it’s hard to beat the new edition of Lee, http://www.math.washington.edu/~lee/Books/ISM/, which covers both symplectic and contact geometries with strong pedagogy.

Awesome! Thanks.

Probably a dumb question – but why are you interested in estimating normalizing constants if you dislike bayes factors and marginal probabilities of hypotheses?

Anon:

Bayesian statistics of any form requires the normalizing function for the data distribution and also for intermediate-level distributions in hierarchical models. This is not always so clear in textbooks because in standard models the normalizing function is so simple to evaluate. In location-scale models such as the normal distribution, the normalizing function is trivial; in other models such as the gamma and beta, our predecessors already figured out how to compute the integrals analytically. But in other models, especially in high dimensions, the normalizing function does not have any clean form and it needs to be computed in some way.

Firstly, the Thermodynamic Monte Carlo algorithm is mostly about transitioning a simple and a complex distribution in order to speed up convergence and enable mode exploration. The normalizing constant estimate comes for free!

Andrew has valid points in his arguments against marginal likelihoods but you have to be careful with the context. In the largely empirical models relevant to social and political science there’s no obvious distinction between prior and likelihood which makes marginal likelihoods somewhat ill-defined and subject to those pathologies. But in the physical sciences where models have a stronger ontological motivation the prior/likelihood decomposition is more clear and marginal likelihoods become important. I’ve certainly had many circumstances in physics where the marginal likelihood was very natural and ended up being critical to an effective analysis.

What we’ll likelihood do in Stan is have the user define a base distribution and a delta distribution. If those correspond to the (some) prior/likelihood decomposition then the normalizing constant can be interpreted as the corresponding marginal likelihood, otherwise it’s just an computational tool.

I’m trying to understand the physical analogy. Are we talking about a const. temp. bath? If so, isn’t the physical analogy a transport problem? i.e. The heat transfer equation being some combination of the deltaT & heat transfer coefficients?

I’m trying to understand how we can avoid an arbitrary temperature program. Doesn’t it effectively just become an arbitrary heat transfer coefficient?

Maybe I’m misunderstanding the physical analogy. What’s the fundamental physical constraint linking bath properties to temperature evolution.

Thermodynamics is hard because there are lots of interpretations and many of them are bad.

Classical thermodynamics is all about the canonical distribution, which induces a probability distribution on the energies a system can take. From a probabilistic (information theoretic, Jaynesian, etc) perspective this distribution is just a manifestation of limited knowledge of the true energy. Concepts like the heat bath introduce a physical interpretation of the distribution, with the energy physically fluctuating as the system interacts with the constant temperature bath.

In Hamiltonian Monte Carlo we construct a canonical distribution directly. From the probabilistic perspective we’re just sampling from that distribution and from the physical perspective we’re simulating the dynamics of a system in equilibrium with a heat bath (the interaction with the heat bath manifesting as the momentum resampling). More sophisticated transport techniques aren’t needed as our system is, by construction, a canonical thermodynamic system and we don’t have to worry about trying to force it into one.

This new Thermodynamic Monte Carlo is moving beyond constant temperature exploration and introducing an adiabatic transition between two different canonical distributions (one simple, one complex). The corresponding physical interpretation is much trickier as the metaphorical heat bath has to transform in a very specific way to ensure that everything remains well-behaved; indeed simulated annealing and simulated tempering do not transform the heat bath in the right way which induces all of the pathologies discussed in the paper.

By working at the foundational mathematical level we can avoid the interpretation issues and construct an algorithm with the desired properties. But after the fact we can interpret the algorithm physically to help with intuition.

Hmm…I guess I’m not getting the physical analogy in this case. Perhaps working at the foundational mathematical level is my only option?

OTOH, there may not even exist a good physical analog for a clever algorithm, right? Is that possibly the case here?

I’d love if anyone can flesh out the physical interpretation more. e.g. for the simulated annealing algorithm the corresponding physical analogy is quite clear (I think).

Whoops, replied to the wrong commend. See below.

There is absolutely a physical analogy here — the underlying math is exactly the same so there has to be. The problem with analogies, however, is that they can be obfuscating. Thermodynamics is subtle and an illusionary understanding can be less constructive than no understanding at all. That said, I can attempt to lay out the basic idea.

The classical construction of thermodynamics is that we have some system in equilibrium with a heat bath. Interactions between the system and the heat bath manifest as transfers of energy without any transfer of heat [1] such that the average energy of the system (averaging over time or position) is proportional to the temperature.

Now there are three common ways we can transform such a system, all of which can be considered as transformations to the heat bath.

In annealing we rapidly increase or decrease the temperature of the heat bath which induces huge transfers of energy and heat between the bath and the system. The violence of the transition opens up vulnerabilities to metastable equilibria and other pathologies. Indeed those metastable equilibria are often the desired outcome in physical applications.

Tempering does not modify the heat bath but instead introduces an ensemble of heat baths with which the system can interact. As the system transitions between neighboring heat baths its temperature changes but much more gently than the forced change in annealing, allowing for equilibrium to be maintained [2]. Alternatively, we can construct a system, a heat bath, and then a “metabath” that interacts with the heat bath to vary its temperature stochastically. One big challenge with tempering is that it can be very slow to explore all temperatures because the exploration is stochastic.

Adiabatic cooling/heating considers a single system coupled to a single heat bath and then gently transforms the temperature of the heat bath. Unlike annealing, the adiabatic change in temperature adapts to the local state of the system — when the system is in equilibrium the temperature change speeds up and when the system is outside of equilibrium it slows down. This ensures that no heat is exchanged and the system remains in equilibrium. And, unlike tempering, the temperature changes are deterministic so we don’t suffer from slow exploration.

[1] Note that heat is something of a loaded term and its proper mathematical construction is not particularly simple.

[2] In parallel tempering we don’t have just one system with many heat baths but rather one system for _each_ heat bath. Transitions are generated by switching states between each system. This isn’t particularly physical, however, and is mostly just a computation tool.

Thanks! I think I’m out of my depth here. :)

But I’ll be brave & ask: What do you mean by “transfers of energy without any transfer of heat”? There’s doesn’t seem any Work done. Other than work or a bulk mass transport how do you transfer energy to a heat bath? I thought the whole point of a heat bath was that it’s only coupled to the system via a heat transfer?

This is where the analogy becomes tricky because of all the subtleties. I personally find that this is where the physical analogy breaks down exactly because you’re confronted with these ill-defined concepts as “what is a heat bath, really?”

Mathematically heat exchange induces a change in the canonical distribution, so if the distribution doesn’t change (as in the constant temperature case) then no heat can be exchanged (think Carnot engine). In adiabatic cooling/heating we do change the temperature but in a way that preserves the canonical distribution and hence also exchanges no heat.

More formally heat is the primitive of the contact form and adiabatic cooling/heat is a contact flow along the zero level set of the contact Hamiltonian. That wasn’t supposed to make much sense other than to justify why heat can be hard to intuit.

To me adiabatic cooling / heating itself is not a mystery. But to have that happen (I thought) the system must do work. i.e. say a change in parcel volume, or demagnetization etc. Then no heat needs cross the boundary.

But if you say, (a) there was no external work done and (b) the system had adiabatic boundaries and yet (c) temperature decreased then that seems very counter intuitive to me. (just to check, that is what you are saying, right?)

What makes it more bizarre is you still want a heat bath but you are not going to (1) do any work on it or (2) pass any heat to it nor (3) pass any bulk mass to it.

Now then the mystery to me is what exactly do you do with this heat bath.

Again, maybe I’m totally lost. :)

I didn’t say anything about work!

Work enters the picture when you try to give a physical interpretation to the heat bath and how it changes with energy. To explain more I have to get into the mechanics, so apologies if this is confusing (not sure of your background).

Let’s start with a classic mechanic system of a particle evolving under Hamiltonian dynamics, and phase space decomposes into the level sets of constant energy. There are two extensions of this system to a thermodynamic system.

Firstly, we may not know the exact evolution because we don’t have complete information of the system.

Given an initial energy we can at least constrain the particle to lie on one of the level sets, and because we don’t know anything about the position on the level set we take a uniform distribution over it (or you can appeal to Hamiltonian chaos to argue that any exponentially small uncertainty amplifies until we recover the uniform distribution); this is exactly the micro canonical distribution. If we don’t know the exact energy then we have to add a distribution of the level sets — the maximum entropy distribution given knowledge of the average energy yields the canonical distribution. There’s no heat bath anywhere to be found, just uncertainty.

Alternatively we can couple our single particle system to an external environment. In particular we add interactions that affect only the energy of the particle, and only with the introduction of fluctuations around an average energy; averaging the dynamics over time yields the canonical distribution again. The interactions between the system and the heat bath take the form of energy transfers and when no heat is exchanged the energy is transferred in such a way that the canonical distribution doesn’t change. From this perspective any thermodynamic process adds or removes energy from the single particle system and that energy has to go somewhere in the environment. So why not just let it do work?

The second approach is a special case of the first, which is itself closer to the statistical application in the paper. That said, the physical interpretation can be nice because it gives a cute meaning to much of the abstract mathematical construction. Cute because it’s only a shallow understanding, but often that’s exactly what you want when communicating to colleagues.

My bad for assuming you implied “zero Work”! But in both canonical (nVT) & micro canonical (nVE) ensembles isn’t system Volume a constant? I assumed that implied no work?

Also, I don’t get the “So why not just let it do work?” bit. You *must* let it do work, right? That energy has to go somewhere, & if not via heat, then via work it must. Uncertainty might explain a short temporary mismatch in energy bookkeeping but if you have a sustained energy change, you’ve got to have a way to transfer it to your surroundings?

Depends on how you define the ensemble — if the general case where your thermodynamic variables are (E, q, p) then work is done via the implicit interaction between the system and the environment (all you need is a force). But you could also define the ensemble using approximate variables, like (E, P, V) in which case work is done by changing the volume.

Sorry, I was being a big glib when I said “why not just let it do work?” What I meant was that you can model where that energy goes explicitly (in which case it has to do work) or treat it as some black box with the energy being redistributed within the environment. It’s just a choice of how far down the rabbit hole you want to go.

Note also that from the probabilistic perspective there is no heat bath in the first place, so the uncertainty in the energy isn’t due to actual fluctuations and you don’t need to keep up with all the bookkeeping.

@Michael: Thanks for humoring my naive questions!

I reread your paper & Andrew’s commentary: One part that bugs me is this bit:

From a strictly Physical World perspective I’m not sure I understand what you’ve replaced the arbitrary temperature knob with. Have we really gotten totally rid of the arbitrariness that’s integral to an “annealing schedule”?

Or have we pushed to to some other place? Does thermodynamic MC really have no arbitrary knobs? When Andrew mentions altering temperature in “a natural physical way” what physical analog is he basing it upon.

i.e. If we are at the point where “physical analogy breaks down” how do we call one temperature evolution more “natural” (physical?) than another.

Whether you transfer out heat or work, shouldn’t there be some necessary parameter choice in either route that basically controls the coupling of the system & the external bath? I rather think of yours as a “work bath” than the conventional “heat bath”.

Rahul,

I think you’re conflating too many particular thermodynamic systems with the general thermodynamic system; in particular, the concept of work requires the introduction of additional structure to the environment that is irrelevant to the basic system + environment construction. In any case ultimately we want a transition that preserves the canonical distribution (or exchanges no heat and hence does no work) and that is given by an adiabatic cooling/heating. Adiabaticity is a sufficiently rigid criterion that there is a unique temperature schedule and we really do lose a knob!

OK, a title for the next paper:

“Honey, I lost the knob! Adiabatic sampling for the rest of us.”

@Michael:

Thanks! I’m impressed. Losing a knob seems pretty darn awesome. I always assumed we were stuck with the arbitrainess (art?) of choosing an annealing schedule.

As an aside, I’ve used simulated annealing in the past for some global optimization problems (say, something akin to travelling salesman). Can your method be swapped in for such applications? I’d be curious to evaluate relative performance on a practical problem.

Andrew:

Or the next blog post. Just be careful when you do the Google Image Search…

Rahul:

The adiabatic algorithm finds regions of high probability mass, not any optimum, but that should be close enough in problems where this kind of algorithm is necessary.

PS. I just loved this line:

I nominate it for the sentence of the week. :)

Pingback: Useless Algebra, Inefficient Computation, and Opaque Model Specifications « Statistical Modeling, Causal Inference, and Social Science Statistical Modeling, Causal Inference, and Social Science

Pingback: Let's play Twister, let's play Risk - Statistical Modeling, Causal Inference, and Social Science