Mikhail Balyasin writes:

I have come across this paper by Jacob Westfall and Tal Yarkoni, “Statistically Controlling for Confounding Constructs Is Harder than You Think.” I think it talks about very similar issues you raise on your blog, but in this case they advise to use SEM [structural equation models] to control for confounding constructs. In fact, in relation to Bayesian models, they have this to say: “…Nor is the problem restricted to frequentist approaches, as the same issues would arise for Bayesian models that fail to explicitly account for measurement error.”

So, I would be very interested to hear from you how one would account for measurement error in Bayesian setting and whether this claim is true. I’ve tried to search your blog for something similar, but couldn’t find anything.

My reply: The funny thing is, I could’ve sworn that someone pointed me to this article already and that I’d blogged on it. But I searched the blog, including forthcoming posts, and found nothing, so here we go again.

1. The paper seems to all about type 1 error rates, and I have no interest in type 1 error rates (see, for example, this paper with Jennifer and Masanao), so I’ll tune all that out. That said, I respect Westfall and Yarkoni so I’m guessing their method makes some sense, and maybe it could even be translated into my language if anyone wanted to do so.

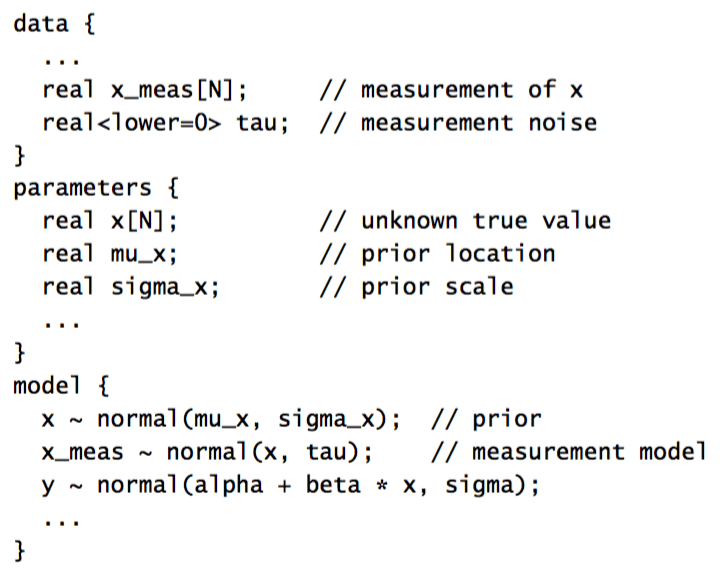

2. You can account for measurement error directly in Bayesian inference by just putting the measurement error model directly into the posterior distribution. For example, the Stan manual has a chapter on measurement error models and meta-analysis which begins:

Most quantities used in statistical models arise from measurements. Most of these measurements are taken with some error. When the measurement error is small relative to the quantity being measured, its effect on a model is usually small. When measurement error is large relative to the quantity being measured, or when very precise relations can be estimated being measured quantities, it is useful to introduce an explicit model of measurement error. One kind of measurement error is rounding.

Meta-analysis plays out statistically very much like measurement error models, where the inferences drawn from multiple data sets are combined to do inference over all of them. Inferences for each data set are treated as providing a kind of measurement error with respect to true parameter values.

A Bayesian approach to measurement error can be formulated directly by treating the true quantities being measured as missing data (Clayton, 1992; Richardson and Gilks, 1993). This requires a model of how the measurements are derived from the true values.

And then it continues with some Stan models like this:

3. To get back to the Westfall and Yarkoni paper, which argues that throwing in a new predictor doesn’t help as much as you might expect: This should pop out directly from the Bayesian model, in that if measurement error is large you will do a lot of partial pooling.

Thanks Andrew. It’s certainly true that you can model measurement error within a Bayesian framework. And as we show, you can do it in a frequentist framework too. The moral of the story isn’t that measurement error is impossible to account for–it’s that it’s eminently possible, and we should be doing it. However, when you *do* explicit account for it, it turns out to be surprisingly difficult to draw conclusions about incremental validity. I don’t see that being any easier in virtue of the Bayesian framework (which, for what it’s worth, I also personally prefer).

Tal:

Yup. As you put it, “the same issues would arise for Bayesian models that fail to explicitly account for measurement error.” One appealing feature about Stan is how easy and direct it is to explicitly account for measurement error. But the user still has to do it! I guess one question is, how routine should this be? Right now, we can easily fit measurement-error models but they are still treated as a bit of a special topic, so most of the time people don’t bother with them. Maybe this should change. I’m reminded a bit about on different models for treatment and control groups. This seems to be a good idea to me, but even in my own textbooks I never seem to get to these models.

“Inferences for each data set are treated as providing a kind of measurement error with respect to true parameter values.”

Can someone clarify this statement from the STAN manual for me?

I put measurement error and meta-analysis in the same chapter of the manual because their models are technically identical when each experiment in the meta-analysis is the same. You think of each experiment in the meta-analysis as having measurement error on the true effect.

P.S. It’s “Stan”, not “STAN”, because “Stan” is not an acronym.

maybe people just like proclaiming STAN emphatically.

Also, I cannot resist calling the manual for Stan “the Stanual”. Ba-dum-bum-ching!

…

……

Okay, I’ll just show myself out. Thanks!

> technically identical when each experiment in the meta-analysis is the same

Yup, in those simple and idealized examples where the study standard errors are taken as known.

Often people are stuck with study summaries chosen by the authors and there is little else that can meaningfully be done.

Hopefully this is changing (more raw data available) so tah more can be done statistically.

More generally one can “summarize, contrast and combine data using likelihood functions… Here the probabilities of possible values of the parameter (prior) are combined with the probability of the data given various values the parameter (likelihood), resulting in the (posterior) probabilities of possible values of the parameter (given the data and the prior).”

Important to remember that “At the heart of any meta-analysis is the belief that something was common between different studies. Only after this belief has stood up to a thorough investigation of the study results, will an appropriate combination to reflect all of the evidence be sensible.”

This is all explained in my concise 10 page letter to the editor on Warn et al., 2002. (Apparently only cited twice.) https://www.ncbi.nlm.nih.gov/pubmed/16118810

Somewhat hilariously, the binary example Warn used was actually based on continuous data that authors had dichotomized and only reported those summaries.

Also hilarious – my attempts to avoid actually using Bayesian methods.

Ah, I see that now. Thanks!

I started reading the paper but I’m confused by the example on page 3. The example says that ice cream sales and swimming pool deaths are spuriously correlated because of their mutual dependence on temperature, and if temperature is measured by self-reported Likert ratings of subjective temperature, then controlling for it does not completely eliminate this spurious correlation due to the measurement error. The way I see it there are two types of measurement error here: (1) the Likert scale measures with error the subjective temperature, a latent construct, and (2) subjective temperature measures with error the actual temperature.

The first type of measurement error can be accounted for by Bayesian Item Response models — i.e. by modelling the relationship between the latent variables and Likert ratings as probabilistic rather than deterministic, and by integrating out uncertainty in the latent variables. The second type of measurement error seems besides the point here; don’t you _want_ to measure subjective temperature, since that influences behaviour more directly than the actual temperature?

Without having read the paper, something that comes to mind as relevant is that subjective temperature has more variability than measurements of actual temperature, since different people may have quite different subjective ratings of temperature.

I had mentioned Westfall & Yarkoni paper in the comments to another post where measurement error was an issue:

https://statmodeling.stat.columbia.edu/2016/06/07/social-problems-with-a-paper-in-social-problems/

But I don’t think the paper had gotten a post to itself yet.