Later this week, I’m going to be GM-ing my first session of Blades in the Dark, a role-playing game designed by John Harper. We’ve already assembled a crew of scoundrels in Session 0 and set the first score. Unlike most of the other games I’ve run, I’ve never played Blades in the Dark; I’ve only seen it on YouTube (my fave so far is Jared Logan’s Stream of Blood x Glass Cannon play Blades in the Dark!).

Action roll

In Blades, when a player attempts an action, they roll a number of six-sided dice and take the highest result. The number of dice rolled is equal to their action rating (a number between 0 and 4 inclusive) plus modifiers (0 to 2 dice). The details aren’t important for the probability calculations. If the total of the action rating and modifiers is 0 dice, the player rolls two dice and takes the worst. This is sort of like disadvantage and (super-)advantage in Dungeons & Dragons 5e.

A result of 1-3 is a failure with a consequence, a result of 4-5 is a success with a consequence, and a result of 6 is an unmitigated success without a consequence. If there are two or more 6s in the result, it’s a success with a benefit (aka a “critical” success).
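The outcome rules above can be written down as a small function. This is a minimal JavaScript sketch, not anything from the rulebook: the names `classifyAction` and `actionRoll` and the outcome labels are made up for illustration. It assumes a zero-dice pool cannot produce a critical, which is consistent with the .00 entries for 0d in the tables further down.

```javascript
// Classify an action roll from the pool size and the dice actually rolled.
// A poolSize of 0 means `dice` holds two dice and the lowest one is kept.
function classifyAction(poolSize, dice) {
  if (poolSize === 0) {
    const lowest = Math.min(...dice);
    // Assumption: no critical is possible with zero dice.
    if (lowest === 6) return "success";
    return lowest >= 4 ? "partial" : "failure";
  }
  const sixes = dice.filter((d) => d === 6).length;
  if (sixes >= 2) return "critical"; // two or more 6s
  const highest = Math.max(...dice);
  if (highest === 6) return "success"; // exactly one 6
  if (highest >= 4) return "partial"; // 4-5: success with a consequence
  return "failure"; // 1-3: failure with a consequence
}

// Roll the pool with a supplied random source (defaults to Math.random).
function actionRoll(poolSize, rng = Math.random) {
  const nDice = poolSize === 0 ? 2 : poolSize;
  const dice = Array.from({ length: nDice }, () => 1 + Math.floor(rng() * 6));
  return { dice, outcome: classifyAction(poolSize, dice) };
}

console.log(actionRoll(3));
```

Keeping the classification separate from the rolling makes it easy to check the rules against hand-picked dice.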

The GM doesn’t roll. In a combat situation, you can think of the player roll as encapsulating a turn of the player attacking and the opponent(s) counter-attacking. On a result of 4-6, the player hits; on a result of 1-5, the opponent hits back or the situation becomes more desperate in some other way, like the character being disarmed or losing their footing. On a critical result (two or more 6s in the roll), the player succeeds with a benefit, perhaps cornering the opponent away from their flunkies.

Resistance roll

When a player suffers a consequence, they can resist it. To do so, they gather a pool of dice for the resistance roll and spend an amount of stress equal to six minus the highest result. As with action rolls, a player with zero dice in the pool rolls two dice and takes the worst. If the player rolls a 6, the character takes no stress. If they roll a 1, the character takes 5 stress (which would very likely take them out of the action). If the player has multiple dice and rolls two or more 6s, they actually clear 1 stress.

For resistance rolls, the value between 1 and 6 matters, not just whether it’s in 1-3, in 4-5, equal to 6, or if there are two 6s.
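The stress cost of a resistance roll can be sketched the same way. This is an illustrative JavaScript helper (the name `resistanceStress` is made up here), returning -1 when the character clears a stress on a critical:

```javascript
// Stress paid for a resistance roll, given the pool size and the dice rolled.
// A poolSize of 0 means `dice` holds two dice and the lowest one is kept.
function resistanceStress(poolSize, dice) {
  if (poolSize === 0) {
    return 6 - Math.min(...dice); // no critical with zero dice
  }
  const sixes = dice.filter((d) => d === 6).length;
  if (sixes >= 2) return -1; // two or more 6s: clear 1 stress
  return 6 - Math.max(...dice); // six minus the highest die
}

console.log(resistanceStress(3, [2, 5, 4])); // highest die is 5
```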

Probabilities

Resistance rolls are rank statistics for pools of six-sided dice. Action rolls just group those. Plus a little sugar on top for criticals. We could do this the hard way (combinatorics) or we could do this the easy way. That decision was easy.

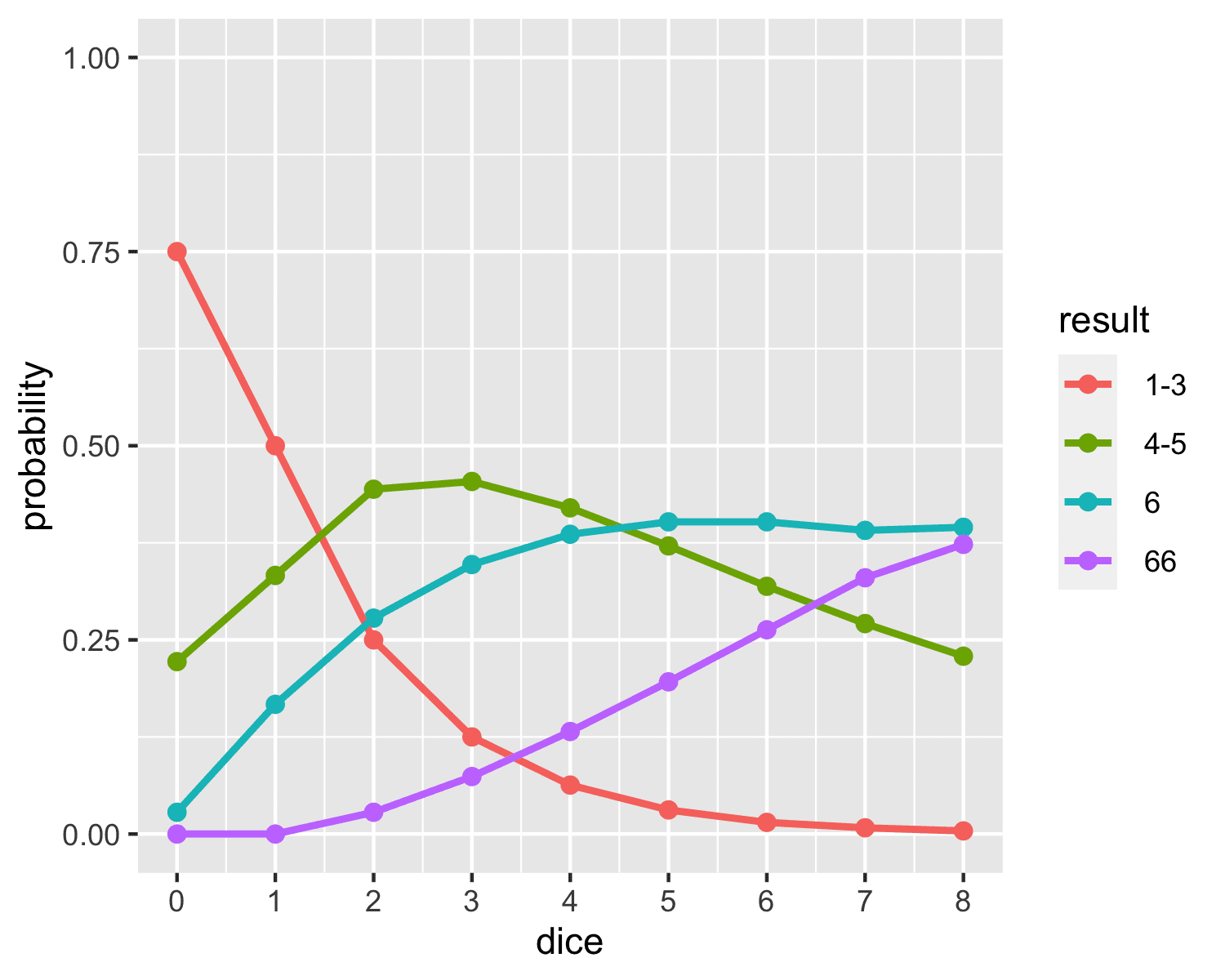

Here’s a plot of the results for action rolls, with dice pool size on the x-axis and line plots of results 1-3 (fail plus a complication), 4-5 (succeed with complication), 6 (succeed) and 66 (critical success with benefit). This is based on 10M simulations.

You can find a similar plot from Jasper Flick on AnyDice, in the short note Blades in the Dark.

I find the graph pretty hard to scan, so here’s a table in ASCII format, which also includes the resistance roll probabilities. The 66 result (at least two 6 rolls in the dice pool) is a possibility for both a resistance roll and an action roll. Both decimal places should be correct given the 10M simulations.

        RESISTANCE                      ACTION           BOTH

DICE    1    2    3    4    5    6      1-3  4-5  6      66

----    ----------------------------    -------------    ----

0d      .36  .25  .19  .14  .08  .03    .75  .22  .03    .00

1d      .17  .17  .17  .17  .17  .17    .50  .33  .17    .00

2d      .03  .08  .14  .19  .25  .28    .25  .44  .28    .03

3d      .01  .03  .09  .17  .29  .35    .13  .45  .35    .07

4d      .00  .01  .05  .14  .29  .39    .06  .42  .39    .13

5d      .00  .00  .03  .10  .27  .40    .03  .37  .40    .20

6d      .00  .00  .01  .07  .25  .40    .02  .32  .40    .26

7d      .00  .00  .01  .05  .22  .39    .01  .27  .39    .33

8d      .00  .00  .00  .03  .19  .38    .00  .23  .38    .39

One could go for more precision with more simulations, or resort to working them all out combinatorially.
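The simulation code behind the table isn’t shown in the post. A minimal sketch of the "easy way" might look like the following JavaScript. The function name `simulateAction`, the seed, and the trial count are all illustrative choices, and `mulberry32` is a small seedable generator swapped in for `Math.random` only so that runs are repeatable.

```javascript
// mulberry32: a small seedable PRNG, used here only so that runs are repeatable.
function mulberry32(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) | 0;
    let t = Math.imul(a ^ (a >>> 15), 1 | a);
    t = (t + Math.imul(t ^ (t >>> 7), 61 | t)) ^ t;
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Estimate action-roll outcome probabilities for one pool size by simulation.
function simulateAction(poolSize, trials, rng) {
  const counts = { failure: 0, partial: 0, success: 0, critical: 0 };
  const nDice = poolSize === 0 ? 2 : poolSize; // zero dice: roll two, keep lowest
  for (let t = 0; t < trials; t++) {
    let hi = 0;
    let lo = 7;
    let sixes = 0;
    for (let i = 0; i < nDice; i++) {
      const d = 1 + Math.floor(rng() * 6);
      if (d > hi) hi = d;
      if (d < lo) lo = d;
      if (d === 6) sixes++;
    }
    const kept = poolSize === 0 ? lo : hi;
    if (poolSize > 0 && sixes >= 2) counts.critical++;
    else if (kept === 6) counts.success++;
    else if (kept >= 4) counts.partial++;
    else counts.failure++;
  }
  for (const k of Object.keys(counts)) counts[k] /= trials;
  return counts;
}

const estimate2d = simulateAction(2, 200000, mulberry32(1));
console.log(estimate2d);
```

With a few hundred thousand trials the estimates should land within about a percentage point of the tabulated values; getting two reliable decimal places across every cell takes far more draws, which is why the post uses 10M.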

The hard way

The hard way is a bunch of combinatorics. These aren’t too bad because of the way the dice are organized. For the highest value of throwing N dice, the probability that it is less than or equal to k is the probability that a single die is less than or equal to k, raised to the N-th power, that is, (k/6)^N. It’s just that there are a lot of cells in the table. And then the differences would be required to get the probability of each exact value. Too error prone for me. Criticals can be handled Sherlock Holmes style by subtracting the probability of a non-critical from one. A non-critical either has no sixes (5^N possibilities with N dice) or exactly one six ((N choose 1) * 5^(N – 1) possibilities), out of 6^N equally likely rolls. That’s not so bad. But there are a lot of entries in the table. So let’s just simulate.
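For anyone who does want the hard way, here is a small JavaScript sketch of the closed forms for pools of one or more dice. It uses P(highest ≤ k) = (k/6)^N, takes differences for exact values, and gets criticals as one minus the probability of zero or exactly one six. The function names are illustrative only.

```javascript
// P(highest of n dice <= k), for n >= 1 and k in 0..6.
function pMaxAtMost(n, k) {
  return Math.pow(k / 6, n);
}

// P(highest of n dice == k), by differencing the cumulative form.
function pMaxEquals(n, k) {
  return pMaxAtMost(n, k) - pMaxAtMost(n, k - 1);
}

// P(two or more sixes) = 1 - P(no six) - P(exactly one six).
function pCritical(n) {
  const noSix = Math.pow(5 / 6, n);
  const oneSix = n * (1 / 6) * Math.pow(5 / 6, n - 1);
  return 1 - noSix - oneSix;
}

// Action-roll outcome probabilities for a pool of n >= 1 dice.
function actionProbs(n) {
  const critical = pCritical(n);
  return {
    failure: pMaxAtMost(n, 3), // highest is 1-3
    partial: pMaxAtMost(n, 5) - pMaxAtMost(n, 3), // highest is 4-5
    success: pMaxEquals(n, 6) - critical, // exactly one six
    critical,
  };
}

for (let n = 1; n <= 8; n++) {
  const p = actionProbs(n);
  console.log(n, p.failure.toFixed(3), p.partial.toFixed(3), p.success.toFixed(3), p.critical.toFixed(3));
}
```

The zero-dice case is left out here; it needs the lowest of two dice rather than the highest.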

Edit: Cumulative Probability Tables

I really wanted the cumulative probability tables of a result or better (I suppose I could’ve also done it as result or worse). I posted these first on the Blades in the Dark forum. It uses Discourse, just like Stan’s forum.

Action Rolls

Here are the cumulative probabilities for action rolls.

And here’s the table of cumulative probabilities for action rolls, with 66 representing a critical, 6 a full success, and 4-5 a partial success:

ACTION ROLLS, CUMULATIVE

probability of result or better

dice 4-5+ 6+ 66

0 0.250 0.028 0.000

1 0.500 0.167 0.000

2 0.750 0.306 0.028

3 0.875 0.421 0.074

4 0.938 0.518 0.132

5 0.969 0.598 0.196

6 0.984 0.665 0.263

7 0.992 0.721 0.330

8 0.996 0.767 0.395

Resistance Rolls

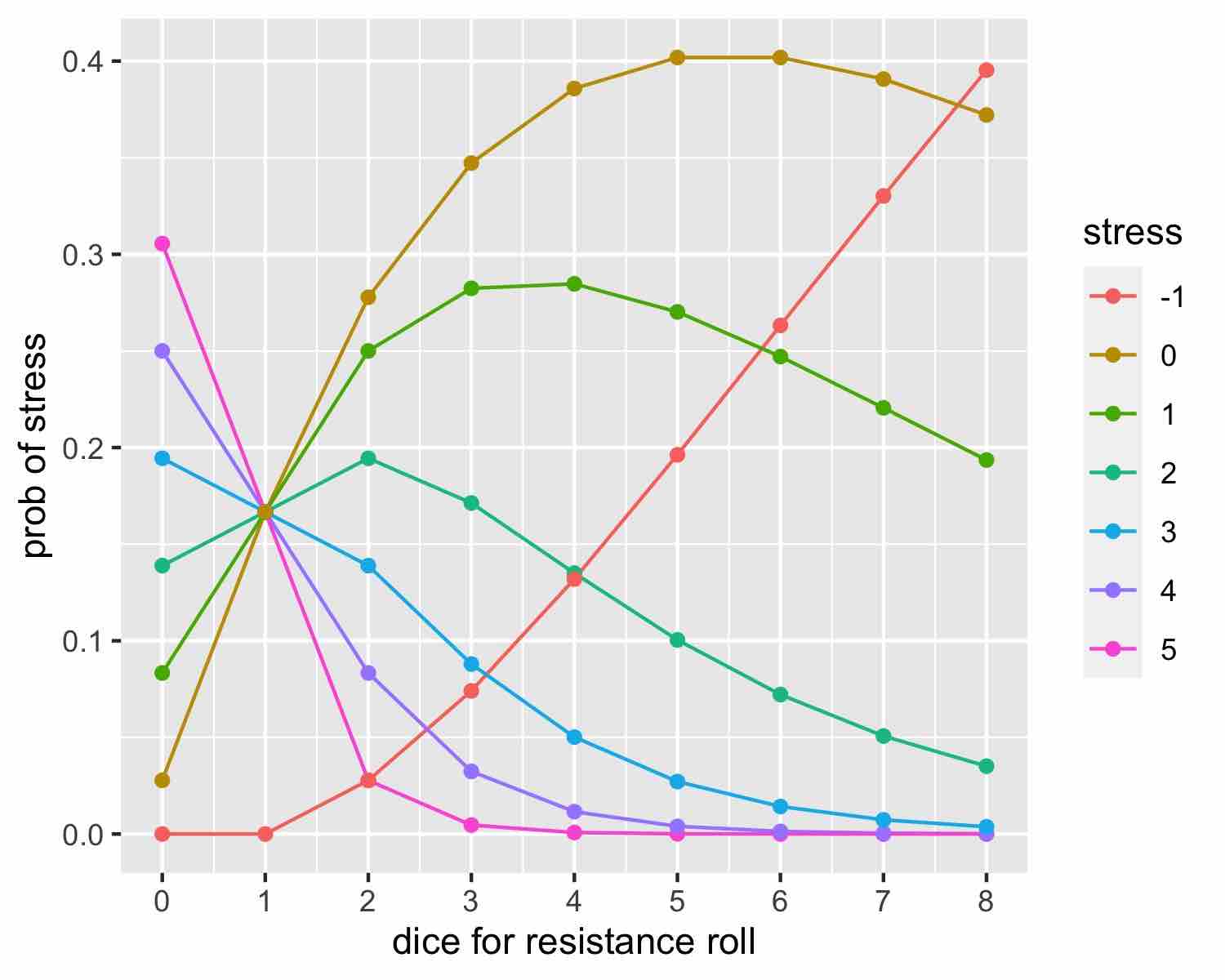

And here are the basic probabilities for resistance rolls.

Here’s the table for stress probabilities based on dice pool size:

RESISTANCE ROLLS

Probability of Stress

Dice 5 4 3 2 1 0 -1

0 .31 .25 .19 .14 .08 .03 .00

1 .17 .17 .17 .17 .17 .17 .00

2 .03 .08 .14 .19 .25 .28 .03

3 .00 .03 .09 .17 .28 .35 .07

4 .00 .01 .05 .14 .28 .39 .13

5 .00 .00 .03 .10 .27 .40 .20

6 .00 .00 .01 .07 .25 .40 .26

7 .00 .00 .01 .05 .22 .39 .33

8 .00 .00 .00 .04 .19 .37 .40

Here’s the plot for the cumulative probabilities for resistance rolls.

Here’s the table of cumulative resistance rolls.

RESISTANCE ROLLS, CUMULATIVE

Probability of Stress or Less

Dice 5 4 3 2 1 0 -1

0 1.00 .69 .44 .25 .11 .03 .00

1 1.00 .83 .67 .50 .33 .17 .00

2 1.00 .97 .89 .75 .56 .31 .03

3 1.00 1.00 .96 .87 .70 .42 .07

4 1.00 1.00 .99 .94 .80 .52 .13

5 1.00 1.00 1.00 .97 .87 .60 .20

6 1.00 1.00 1.00 .98 .91 .67 .26

7 1.00 1.00 1.00 .99 .94 .72 .33

8 1.00 1.00 1.00 1.00 .96 .77 .40

For example, with 4 dice (the typical upper bound for resistance rolls), there’s an 80% chance that the character takes 1, 0, or -1 stress, and 52% chance they take 0 or -1 stress. With 0 dice, there’s a better than 50-50 chance of taking 4 or more stress because the probability of 3 or less stress is only 44%.
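The numbers in that example can be checked directly from a closed form. The sketch below is illustrative JavaScript (the name `pStressAtMost` is made up here). It uses the fact that taking s or less stress means the kept die is at least 6 - s, and it treats "0 or less" as including the -1 outcome, as the cumulative table does.

```javascript
// P(stress <= s) for a resistance roll with n dice, s in 0..5.
function pStressAtMost(n, s) {
  if (n === 0) {
    // Two dice, keep the lowest: both dice must be at least 6 - s.
    return Math.pow((s + 1) / 6, 2);
  }
  // Keep the highest: fail only if every die is at most 5 - s.
  return 1 - Math.pow((5 - s) / 6, n);
}

console.log(pStressAtMost(4, 1).toFixed(2)); // 4 dice, 1 stress or less
console.log(pStressAtMost(4, 0).toFixed(2)); // 4 dice, 0 stress or less
console.log(pStressAtMost(0, 3).toFixed(2)); // 0 dice, 3 stress or less
```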

Finally, here’s the R code for the resistance and cumulative resistance.

# row = dice, col = c(1:6, 66)

resist <- matrix(0, nrow = 8, ncol = 7)

resist[1, 1:6] <- 1/6

for (d in 2:8) {

for (result in 1:5) {

resist[d, result] <-

sum(resist[d - 1, 1:result]) * 1/6 +

resist[d - 1, result] * (result -1) / 6

}

resist[d, 6] <- sum(resist[d - 1, 1:5]) * 1/6 +

sum(resist[d - 1, 6]) * 5/6

resist[d, 7] <- resist[d - 1, 7] + resist[d - 1, 6] * 1/6

}

cumulative_resist <- resist # just for sizing

for (d in 1:8) {

for (result in 1:7) {

cumulative_resist[d, result] <- sum(resist[d, result:7])

}

}

library('reshape')

library('ggplot2')

zero_dice_probs <- c(11, 9, 7, 5, 3, 1, 0) / 36

zero_dice_cumulative_probs <- zero_dice_probs

for (n in 1:7)

zero_dice_cumulative_probs[n] <- sum(zero_dice_probs[n:7])

z <- melt(cumulative_resist) # X1 = dice, X2 = result, value = prob

stress <- 6 - z$X2

df <- data.frame(dice = z$X1, stress = as.factor(stress), prob = z$value)

df <- rbind(df, data.frame(dice = rep(0, 7), stress = as.factor(6 - 1:7), prob = zero_dice_cumulative_probs))

cumulative_plot <- ggplot(df, aes(x = dice, y = prob,

colour = stress, group = stress)) +

geom_line() + geom_point() +

xlab("dice for resistance roll") +

ylab("prob of stress or less") +

scale_x_continuous(breaks = 0:8)

cumulative_plot

ggsave('cumulative-resistance.jpg', plot = cumulative_plot, width = 5, height = 4)

z2 <- melt(resist) # X1 = dice, X2 = result, value = prob

stress2 <- 6 - z2$X2

df2 <- data.frame(dice = z2$X1, stress = as.factor(stress2), prob = z2$value)

df2 <- rbind(df2, data.frame(dice = rep(0, 7), stress = as.factor(6 - 1:7),

prob = zero_dice_probs))

plot <- ggplot(df2, aes(x = dice, y = prob,

colour = stress, group = stress)) +

geom_line() + geom_point() +

xlab("dice for resistance roll") +

ylab("prob of stress") +

scale_x_continuous(breaks = 0:8)

plot

ggsave('resistance.jpg', plot = plot, width = 5, height = 4)

Blades in the Dark!!! I would love to see more RPG posts here. Or maybe a RPG session at one of the StanCons?

Feel free to suggest a topic.

As to RPGs at StanCon, I know of at least two other Stan devs who can GM. We’ve played quite a few one-offs at this point, too.

The stats for all these RPGs tend to be pretty simple. I just couldn’t find a table with the resistance probabilities. Now I wish I’d done everything cumulatively. I really want to know the probability I’ll take 3 or more stress with 2 dice resistance. Not the probability that I’ll take 4 specifically or 2 specifically.

They’re fun to think about! I like one shots because running a bunch lets you experience different ways creators want to handle uncertainty in their game. I’ve been surprised that some of the games I like the most are much more swingy (very cool or very dangerous outcomes) than I thought would be good. Turns out, frequently almost sending your players on a trip to murdertown can be exciting for everyone.

I’d be open to a stancon RPGs session but I’d want to wait till the next IRL one

+1 to RPG posts on the blog

The “failure with a consequence” probabilities seem to be really small with 5+ dice for my tastes, but I also don’t know what size dice pool players start with, at what point they reach certain dice pools, and whether there is a consequence/benefit roll table or whether the DM makes one up on the spot (e.g., Dungeon World)?

It is pretty interesting to read up on the probabilities of dice rolls in RPGs, and how they can support or contradict the game design. I remember reading a blog on the Savage Worlds dice system, which has exploding dice if I remember correctly. So rolling the max value on the dice adds an additional roll and then you sum up the value of the dice rolls for that action. The game is designed so that as characters level up or “develop”, their dice pool should increase in max value (e.g., d4 -> d6 -> d8 -> d10). However I think the blog pointed out it is actually preferable to have a d4 instead of a d6 because of the exploding dice, but I never tried to calculate the actual probabilities to see that.
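The exploding-die claim is easy to check under the rule exactly as described in this comment (roll again and add whenever the maximum face comes up). The JavaScript below is only a sketch under that assumption; it ignores any other modifiers or extra dice the actual game may use, and the name `pExplodingAtLeast` is made up for illustration.

```javascript
// P(total of an exploding die with `sides` faces is >= target).
function pExplodingAtLeast(sides, target) {
  if (target <= 1) return 1; // every roll totals at least 1
  if (target <= sides) {
    // Any face from target up to the max succeeds outright.
    return (sides - target + 1) / sides;
  }
  // Otherwise the die must explode (1/sides) and the rest must be made up.
  return (1 / sides) * pExplodingAtLeast(sides, target - sides);
}

for (const target of [4, 6, 8]) {
  console.log(target, pExplodingAtLeast(4, target), pExplodingAtLeast(6, target));
}
```

Under this rule the d4 comes out ahead of the d6 only at particular targets such as 6 (3/16 versus 1/6); at a target of 4 or 8 the d6 is still better.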

A topic that would be interesting to see is the d20 system vs 3d6 system. 3d6 is usually proposed as a house rule or gamehack for D20 systems, but the players in my old group didn’t like that critical rolls (bad or good) had lower probabilities with 3d6. The DM also was worried how it would impact the balance of the game system since encounters in the game had a fixed threshold for successes and failures.

As Dylan points out below, anything beyond 4d or 6d is more of a mathematical/combinatorial problem than a pragmatic one because the dice pools tend to be pretty limited in practice in Blades in the Dark.

The main effect of a marginal die beyond 2d is in cutting the full failure (1-3 outcome) rate in half. That’ll mainly be useful in desperate situations against superior foes, where the difference between a 1/16 and 1/32 chance of a serious consequence really matters.

As to what Steve (the C++ template and one-off RPG master) and phdummy (presumably an advanced grad student suffering the valley of despair effect) were saying about swinginess, I’m more intrigued by less swingy systems.

In our D&D 5e game, I don’t like the possibility that Steve’s commoner Jimbo has a very good chance to out-shoot my ranger Abner, who is not only proficient (+2) and high dex (+2), but trained in the archery fighting style (+2). Let’s say we’re shooting at another commoner with no armor, so AC 10. Steve’s commoner, who’s never held a longbow and is of average dexterity, has a 55% chance of hitting him with a longbow at 50 paces for 1d10 damage [edited so numbers add up and match longbow range!]. The ranger is +6, so I have to roll a 4, for an 85% chance of hitting. Now let’s arm wrestle. Abner gets 1d20 + 4 for my +2 strength and my training in Athletics vs. Jimbo’s +0 for having average strength and no training in how to use his strength. The chance of Jimbo winning or tying an Athletics contest is (16 + 15 + … + 1) / (20 * 20), or (16 * 17 / 2) / 400, which is a bit better than 1 in 3.
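The arithmetic in that paragraph can be double-checked by brute force over d20 faces. This is a small JavaScript sketch with made-up function names; it ignores the special natural 1 and natural 20 rules, which don’t change the numbers in these particular cases.

```javascript
// P(d20 + bonus >= ac), ignoring natural 1 / natural 20 special cases.
function pHit(bonus, ac) {
  let hits = 0;
  for (let d = 1; d <= 20; d++) {
    if (d + bonus >= ac) hits++;
  }
  return hits / 20;
}

// P(d20 + bonusA >= d20 + bonusB): the chance that A wins or ties the contest.
function pWinOrTie(bonusA, bonusB) {
  let wins = 0;
  for (let a = 1; a <= 20; a++) {
    for (let b = 1; b <= 20; b++) {
      if (a + bonusA >= b + bonusB) wins++;
    }
  }
  return wins / 400;
}

console.log(pHit(0, 10)); // commoner vs AC 10
console.log(pHit(6, 10)); // ranger at +6 vs AC 10
console.log(pWinOrTie(0, 4)); // Jimbo (+0) wins or ties against Abner (+4)
```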

I’m intrigued by the fudge system used by Fate, where you roll 4dF, where F is a fudge die. A fudge die F is a virtual d3 labeled -1, 0, and 1 (equal probabilities). One nice property of dF is that the average roll is 0 (unlike a d20, where the average roll is 10.5, and thus not even a possible outcome). It’s the non-centered parameterization, unlike a d20, which is centered around 10.5. That makes them much easier to stack.

Another (desirable?) property is that the extremes are only +4 and -4, and those each have only a 1/81 probability. Physical fudge dice are six-sided, labeled “-“, ” “, and “+” on two sides each; I’d like to see twelve-sided fudge dice because dodecahedrons are pleasant to roll.
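The 4dF distribution is small enough to build by repeated convolution. The following JavaScript is an illustrative sketch (the name `fudgePmf` is made up here) that adds one fudge die at a time and returns the probability of each total.

```javascript
// Probability of each total when rolling n fudge dice (faces -1, 0, +1).
function fudgePmf(n) {
  // counts[i] = number of ways to reach total (i - diceSoFar)
  let counts = [1];
  for (let die = 0; die < n; die++) {
    const next = new Array(counts.length + 2).fill(0);
    counts.forEach((c, i) => {
      next[i] += c; // this die shows -1
      next[i + 1] += c; // this die shows 0
      next[i + 2] += c; // this die shows +1
    });
    counts = next;
  }
  const outcomes = Math.pow(3, n);
  const pmf = {};
  counts.forEach((c, i) => {
    pmf[i - n] = c / outcomes;
  });
  return pmf;
}

const fourDF = fudgePmf(4);
for (let total = -4; total <= 4; total++) {
  console.log(total, fourDF[total].toFixed(4));
}
```

The distribution is symmetric around 0, with each extreme reached in only one of the 81 equally likely rolls.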

3d6 is an easy plug-in alternative to d20 because it has the same expected value (10.5). Two other alternatives with a mean of 10.5 (like a d20) are the average of 2d20 and the middle roll of 3d20 (rank 2 statistic). For the average, crits are only 1/400, whereas for the middle it’s ((3 choose 2) * 19 + 1) / 20^3, or about 0.7%.
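These d20 alternatives can be compared by enumerating every roll. The JavaScript below is an illustrative sketch with made-up function names; it checks the mean of 3d6 and the chance of a "20" for the average of 2d20 and for the middle of 3d20.

```javascript
// Mean of the sum of three six-sided dice, by enumerating all 216 rolls.
function mean3d6() {
  let sum = 0;
  for (let a = 1; a <= 6; a++)
    for (let b = 1; b <= 6; b++)
      for (let c = 1; c <= 6; c++) sum += a + b + c;
  return sum / 216;
}

// P(average of two d20 rolls == k).
function pAverageOf2d20(k) {
  let hits = 0;
  for (let a = 1; a <= 20; a++)
    for (let b = 1; b <= 20; b++) if (a + b === 2 * k) hits++;
  return hits / 400;
}

// P(middle value of three d20 rolls == k).
function pMiddleOf3d20(k) {
  let hits = 0;
  for (let a = 1; a <= 20; a++)
    for (let b = 1; b <= 20; b++)
      for (let c = 1; c <= 20; c++) {
        const middle = [a, b, c].sort((x, y) => x - y)[1];
        if (middle === k) hits++;
      }
  return hits / 8000;
}

console.log(mean3d6(), pAverageOf2d20(20), pMiddleOf3d20(20));
```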

I think the system in “The One Ring” was very well thought out dicewise, and might appeal to your desire for less swingyness. It’s a dice pool and target number system, but with a D12 base + xD6 for skill level (with x ranging from 0-5, I think), making the variance of success decrease as skill increases.

Your use of simulation also reminded me of the time I simulated the lifespans of 10000 knights in Pendragon, because we were curious about what the actual distribution of lifespans implied by the aging system was.

The easiest way to calculate the probabilities is to enumerate all the possibilities. There are not so many when the number of dice is limited to eight (fewer than the 10M you used in your simulation). Below are my results; they match your table quite well, but some numbers are slightly different (the first entry in your table is very different, but it may be a transcription error).

dice 1 2 3 4 5 s6 d6 123 45 s6 d6

0 0.31 0.25 0.19 0.14 0.08 0.03 0.00 0.75 0.22 0.03 0.00

1 0.17 0.17 0.17 0.17 0.17 0.17 0.00 0.50 0.33 0.17 0.00

2 0.03 0.08 0.14 0.19 0.25 0.28 0.03 0.25 0.44 0.28 0.03

3 0.00 0.03 0.09 0.17 0.28 0.35 0.07 0.12 0.45 0.35 0.07

4 0.00 0.01 0.05 0.14 0.28 0.39 0.13 0.06 0.42 0.39 0.13

5 0.00 0.00 0.03 0.10 0.27 0.40 0.20 0.03 0.37 0.40 0.20

6 0.00 0.00 0.01 0.07 0.25 0.40 0.26 0.02 0.32 0.40 0.26

7 0.00 0.00 0.01 0.05 0.22 0.39 0.33 0.01 0.27 0.39 0.33

8 0.00 0.00 0.00 0.04 0.19 0.37 0.40 0.00 0.23 0.37 0.40

dice 1 2 3 4 5 single6 double6 123 45 single6 double6

0 0.30556 0.25000 0.19444 0.13889 0.08333 0.02778 0.00000 0.75000 0.22222 0.02778 0.00000

1 0.16667 0.16667 0.16667 0.16667 0.16667 0.16667 0.00000 0.50000 0.33333 0.16667 0.00000

2 0.02778 0.08333 0.13889 0.19444 0.25000 0.27778 0.02778 0.25000 0.44444 0.27778 0.02778

3 0.00463 0.03241 0.08796 0.17130 0.28241 0.34722 0.07407 0.12500 0.45370 0.34722 0.07407

4 0.00077 0.01157 0.05015 0.13503 0.28472 0.38580 0.13194 0.06250 0.41975 0.38580 0.13194

5 0.00013 0.00399 0.02713 0.10044 0.27019 0.40188 0.19624 0.03125 0.37063 0.40188 0.19624

6 0.00002 0.00135 0.01425 0.07217 0.24711 0.40188 0.26322 0.01562 0.31927 0.40188 0.26322

7 0.00000 0.00045 0.00736 0.05072 0.22055 0.39071 0.33020 0.00781 0.27127 0.39071 0.33020

8 0.00000 0.00015 0.00375 0.03511 0.19355 0.37211 0.39532 0.00391 0.22866 0.37211 0.39532
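The code for this enumeration isn’t included in the comment. One way to sketch it in JavaScript, for pools of one or more dice, is to treat each roll as an n-digit base-6 number and tally the result; the function name `enumerateRolls` is made up here.

```javascript
// Exact probabilities for n >= 1 dice by enumerating all 6^n rolls.
// Returns [P(highest=1), ..., P(highest=5), P(exactly one 6), P(two or more 6s)].
function enumerateRolls(n) {
  const counts = new Array(7).fill(0);
  const total = Math.pow(6, n);
  for (let code = 0; code < total; code++) {
    let x = code;
    let highest = 0;
    let sixes = 0;
    for (let i = 0; i < n; i++) {
      const d = (x % 6) + 1; // next base-6 digit, shifted to a die face
      x = Math.floor(x / 6);
      if (d > highest) highest = d;
      if (d === 6) sixes++;
    }
    counts[sixes >= 2 ? 6 : highest - 1]++;
  }
  return counts.map((c) => c / total);
}

console.log(enumerateRolls(4).map((p) => p.toFixed(5)).join(" "));
```

Even at eight dice this loops over only 6^8 = 1,679,616 rolls.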

I don’t like those y-axes that go below 0 and above 1. Probabilities are bounded between 0 and 1, and I think the graphs should respect that.

Since values of exactly 0 and exactly 1 are admissible, your suggestion will necessarily clip plot symbols.

This isn’t the worst thing in the world, but becomes an issue where the plot symbols are necessary to convey information.

Garnett:

No, my suggestion would not clip plot symbols. If the points and the axes were in the same color, then, yes, this would be an issue. But in the above graph the axes are white and the points are colored, so we could have the y-axis go exactly from 0 to 1, and points at 0 and 1 would appear just fine.

Points plotted at 0 or at 1 would appear as half circles instead of full circles.

Daniel:

They could be full circles, as the axes lines have thickness themselves.

Especially problematic if variation in the symbol types is intended to convey important information.

It’s always a crap shoot to guess which aspect of a ggplot2 default line plot Andrew’s going to criticize. My guess on this one before publishing was legend rather than labels on lines, lack of visually distinct x and y axis, grey background, and something about tick marks.

I’d love to see Andrew produce what he considers a best practices version of this graph. Here’s the data:

and my starting point:

df <- read.csv('temp.R')

plot <- ggplot(df, aes(x = dice, y = probability, colour = result)) +

  geom_point(size = 2) +

  geom_line(size = 1) +

  scale_x_continuous(breaks = 0:8) +

  scale_y_continuous(breaks = c(0, 0.25, 0.5, 0.75, 1.0), limits = c(0, 1))

If you just remove the padding around the axes (with expand = c(0, 0) on the scale_x and scale_y calls), you get clipping as Garnett says. What I really think Andrew wants is to remove the grey and white background and insert an x and y axis that make a 90-degree angle. That's harder than a simple geom_hline or geom_vline. I don't think converting the legend to labeling lines is going to be automatable with good results.

Bob:

I like the graph you made. I’d change it just by removing the gray rectangles below 0, removing the gray rectangles above 1, and I guess moving up the tick marks and numbers on the x-axis a bit.

Some of these maths don’t apply because you’re never going to throw 8d in BitD. If you have 4 dice, you push yourself, and you get an assist, you’ll get six, and I’ve never seen that happen because PCs can’t get 4d in an action for a couple of levels for themselves and the crew. A Resistance Roll tops out at 4d.

I was trying to find a way to visualize these probability tables a long time ago

https://ctg2pi.wordpress.com/2019/11/19/visualizing-probabilities-for-a-board-game/

An easier way to compute analytically is to throw the dice ‘one by one’: Start with the probabilities from one die and work out transition probabilities when throwing an additional die. In javascript code:

// probabilities for rolling a single die:

let res = [[3/6, 2/6, 1/6, 0]]

for(let i = 0; i<8; i++) {

//probabilities for rolling i+1 dice, computed from the distribution with i dice:

res[i+1] = [

res[i][0] * 3/6,

res[i][0] * 2/6 + res[i][1] * 5/6,

res[i][0] * 1/6 + res[i][1] * 1/6 + res[i][2] * 5/6,

res[i][2] * 1/6 + res[i][3]

]

console.log(round(res[i][0]),round(res[i][1]), round(res[i][2]), round(res[i][3]))

}

function round(n) {

return Math.round(n*1000)/1000

}

Result:

0.5 0.333 0.167 0

0.25 0.444 0.278 0.028

0.125 0.454 0.347 0.074

0.063 0.42 0.386 0.132

0.031 0.371 0.402 0.196

0.016 0.319 0.402 0.263

0.008 0.271 0.391 0.33

0.004 0.229 0.372 0.395

That’s a neat way to compute these recursively using the combinatorics—I don’t usually think about dynamic programming algorithms for something this simple. The really nice property of this approach is that it’s general enough to extend to resistance rolls. And it’ll scale beyond enumerating all possibilities.

I just do everything by simulation now because it’s brainless. I didn’t mean to suggest it’s a good way to solve this problem accurately!

OK, here’s the graph I really wanted to plot of cumulative chances of success with complication or better, clean success or better, or critical success, all as a function of dice pool size.

Here’s my very crude code. I will resort to hand-coding one-off graphs! I used Bouke van der Spoel’s nice recursive approach from above and modified the default ggplot2 output to be slightly better by Andrew’s criteria (though you can see the slight clipping on the right and left because I didn’t expand far enough).

library('ggplot2')

library('reshape')

res <- matrix(NA, nrow = 8, ncol = 4)

# base case for 1 die

res[1, 1:4] <- c(3/6, 2/6, 1/6, 0)

# recursive case for 2+ dice

for (n in 2:8) {

  res[n, 1:4] <- c(res[n - 1, 1] * 3/6,

                   res[n - 1, 1] * 2/6 + res[n - 1, 2] * 5/6,

                   res[n - 1, 1] * 1/6 + res[n - 1, 2] * 1/6 + res[n - 1, 3] * 5/6,

                   res[n - 1, 3] * 1/6 + res[n - 1, 4])

}

# bind in result for 0 dice on top

cumulative_df <- rbind(data.frame(succ = 1/4, clean = 1/36, crit = 0),

                       data.frame(succ = res[ , 2] + res[ , 3] + res[ , 4],

                                  clean = res[ , 3] + res[ , 4],

                                  crit = res[ , 4]))

# add labels for the dice

long_df <- cbind(dice = c(0:8, 0:8, 0:8), melt(cumulative_df))

plot <- ggplot(long_df, aes(x = dice, y = value, colour = variable)) +

  geom_hline(yintercept = 0) +

  geom_vline(xintercept = 0) +

  geom_point(size = 2) +

  geom_line(size = 1) +

  ylab("probability") +

  scale_x_continuous(breaks = 0:8, expand = c(0.01, 0.01)) +

  scale_y_continuous(breaks = c(0, 0.25, 0.5, 0.75, 1.0), limits = c(0, 1), expand = c(0.0, 0.01)) +

  theme(panel.grid.major = element_blank(),

        panel.grid.minor = element_blank(),

        panel.background = element_blank())

plot

Here's the table form, edited by hand from printing the cumulative_df variable.

I updated the body of the post for plotting resistance and cumulative resistance rolls with tables and code.

My handle on the Blades in the Dark Community is “carp”. Here’s my activity log, with links to posts. So far, I’ve posted how I set up Roll20 and reported on our session 0 and the crew in addition to these probability reports.

How do you like Roll20?

I’ve tried and failed twice to get any traction with two different groups….

I’ve played 2.5 hours/week with my childhood friends since 2014. I’ve played D&D 4e, D&D 5e, Blades in the Dark, and several homebrew games. Add to that GM-ing prep time, and I’m over 1500 hours. Yet I feel the same way about Roll20 as I feel about R. It’s where my community is and it does let me get the job done, but it’s hard to imagine a worse set of decisions being made around a piece of software. I’d switch to a decent alternative in a heartbeat. Every week, my NYC players, almost all of whom are serious software engineers and/or statisticians, wonder whether our time wouldn’t be better spent moving from the Stan project, from Amazon and from Facebook to build a better mousetrap. Too bad we’re all infrastructure folks, with not a front end dev among us.

The modality of the interface and the number of steps and panels to do anything is the worst part (your pointer can be on different layers and also on different functions, so it’s usually a click sequence to do even simple things). Or maybe it’s the fact that there are two ways to look things up—in the journal and in the compendium, but the one in the compendium opens a non-clickable version of a monster. On the other hand, the ability to use high-quality maps and tokens actually works better than drawing on a battlemap. The initiative tracker is good for D&D. And you can use some macros. Some things I’m only just discovering after 6 years of using it, such as Shift-double-click on a token opening its sheet. Their video is terrible, but can work OK if you only have 3 players, as I often do. Otherwise, you need to go to Google Hangouts or Skype or some alternative.

Very interesting!

I noticed that players tend to zero in on the graphics details and get easily distracted from narration. That’s lack of experience, I think, but it’s slowed things down for us.

It does seem that they could have done a better job on the nuts-and-bolts interface, but I’m not a developer.

Don’t know how to add pictures here, but here is a visualisation I’ve done

https://ctg2pi.wordpress.com/2020/07/21/another-dice-probabilities-chart/

This chart is designed for players to quickly evaluate their chances of each outcome.