In a paper to be published in the Journal of Financial Reporting, Luca Berchicci and Andy King shoot down an earlier article claiming that corporate sustainability reliably predicted stock returns. It turns out that this earlier research had lots of problems.

King writes to me:

Getting to the point of publication was an odyssey. At two other journals, we were told that we should not replicate and test previous work but instead fish for even better results and then theorize about those:

“I encourage the authors to consider using the estimates from figure 2 as the dependent variables analyzing which model choices help a researcher to more robustly understand the relation between CSR measures and stock returns. This will also allow the authors to build theory in the paper, which is currently completely absent…”

“In fact, there are some combinations of proxies/ model specifications that are to the left of Khan et al.’s estimate. I am curious as to what proxies/ combinations enhance the results?”

Also, the original authors seem to have attempted to confuse the issues we raise and salvage the standing of their paper (see attached: Understanding the Business Relevance of ESG Issues). We have written a rebuttal (also attached).

Here’s the relevant part of the response, by George Serafeim and Aaron Yoon:

Models estimated in Berchicci and King (2021) suggest that making different variable construction, sample period, and control variable choices can yield different results with regards to the relation between ESG scores and business performance. . . . However, not all models are created equal . . . For example, Khan, Serafeim and Yoon (2016) use a dichotomous instead of a continuous measure because of the weaknesses of ESG data and the crudeness of the KLD data, which is a series of binary variables. Creating a dichotomous variable (i.e., top quintile for example) could be well suited when trying to identify firms on a specific characteristic and the metric identifying that characteristic is likely to be noisy. A continuous measure assumes that for the whole sample researchers can be confident in the distance that each firm exhibits from each other. Therefore, the use of continuous measure is likely to lead to significantly weaker results, as in Berchicci and King (2021) . . .

Noooooooo! Dichotomizing your variable almost always has bad consequences for statistical efficiency. You might want to dichotomize to improve interpretability, but you then should be aware of the loss of efficiency of your estimates, and you should consider approaches to mitigate this loss.
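To put a rough number on that loss, here is a minimal simulation sketch. Everything in it is an assumption made for illustration: a normally distributed "true" score, an observed score equal to the truth plus noise, a small linear effect on the outcome, and a top-quintile cutoff. None of it uses the KLD data or the models from either paper. It compares how strongly the outcome correlates with the noisy continuous score versus with a top-quintile indicator built from that same noisy score.

```python
import math
import random


def pearson(a, b):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def simulate(n=20000, beta=0.3, noise_sd=1.0, seed=1):
    """Hypothetical setup: the outcome depends on a true score we only see with noise."""
    rng = random.Random(seed)
    true_score = [rng.gauss(0, 1) for _ in range(n)]
    observed = [t + rng.gauss(0, noise_sd) for t in true_score]  # noisy measurement
    outcome = [beta * t + rng.gauss(0, 1) for t in true_score]

    # Dichotomize the noisy measure: 1 for the top 20% of observed scores, else 0.
    cutoff = sorted(observed)[int(0.8 * n)]
    top_quintile = [1.0 if o >= cutoff else 0.0 for o in observed]

    return pearson(observed, outcome), pearson(top_quintile, outcome)


r_cont, r_dich = simulate()
# Squared ratio of correlations: a rough proxy for how much information survives.
relative_efficiency = (r_dich / r_cont) ** 2
print(f"continuous r = {r_cont:.3f}, dichotomized r = {r_dich:.3f}")
print(f"relative efficiency of the dichotomized measure = {relative_efficiency:.2f}")
```

Under these assumptions the measurement noise weakens both versions of the predictor, and cutting at the top quintile throws away additional information on top of that. Noise in the score is not, by itself, a reason to expect the dichotomized version to do better.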

Berchicci and King’s rebuttal is crisp:

The issue debated in Khan, Serafeim, and Yoon (2016) and Berchicci and King (2022) is whether guidance on materiality from the Sustainable Accounting Standards Board (SASB) can be used to select ESG measures that reliably predict stock returns. Khan, Serafeim, and Yoon (2016) (hereafter “KSY”) estimate that had investors possessed SASB materiality data, they could have selected stock portfolios that delivered vastly higher returns, an additional 300 to 600 basis points per year for a period of 20 years. Berchicci and King (2022) (hereafter “BK”) contend that there is no evidence that SASB guidance could have provided a reliable advantage and contend that KSY’s findings are a statistical artifact.

In their defense of KSY, Yoon and Serafeim (2022) ignore the evidence provided in Berchicci and King and leave its main points unrefuted. Rather than make their case directly, they try to buttress their claim with a selective review of research on materiality. Yet a closer look at this literature reveals that little of it is relevant to the debate. Of the 28 articles cited, only two evaluate the connection between SASB materiality guidance and stock price, and both are self-citations.

Berchicci and King continue:

Indeed, in other forums, Serafeim has made a contrasting argument, contending that KSY is a uniquely important study – a breakthrough that shifted decades of understanding (Porter, Serafeim, and Kramer, 2016). Surely, such an important study should be evaluated on its own merits.

That’s funny. It reminds me of the general point that in research we want our results simultaneously to be surprising and to make perfect sense. In this case, this put Yoon and Serafeim in a bind.

And more:

In BK, we evaluate whether KSY’s results are a fair representation of the true link between material sustainability and stock return. We evaluate over 400 ways that the relationship could be analyzed and reveal that 98% of the models result in estimates smaller than the one reported by KSY and that the median estimate was close to zero. We then show that KSY’s estimate is not robust to simple changes in their model . . . Next, we evaluate the cause of KSY’s strong estimate and uncover evidence that it is a statistical artifact. . . . We then show that their measure also lacks face validity because it judges as materially sustainable firms that were (and continue to be) leading emitters of toxic pollution and greenhouse gasses. In some years, this included a large majority of the firms in extractive industries (e.g. oil, coal, cement, etc.). . . . KSY do not address any of these criticisms and instead rely on a belief that their measure and model are the only ones that should be considered. . . .
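The many-models idea in that passage can be illustrated with a toy version. This is a sketch under stated assumptions, not Berchicci and King's actual procedure: the panel is simulated with no true relation between score and return, the years and firm counts are arbitrary, and the only analytic choices varied are a hypothetical score cutoff and sample window. The point is to show how wide the range of estimates can be when the true effect is zero.

```python
import random
import statistics
from itertools import product


def make_panel(n_firms=200, years=range(1993, 2013), seed=7):
    """Simulated (year, score, return) rows; score and return are independent by construction."""
    rng = random.Random(seed)
    return [(year, rng.gauss(0, 1), rng.gauss(0, 1))
            for year in years for _ in range(n_firms)]


def top_minus_rest(rows, quantile):
    """Mean return of firms at or above the score cutoff minus mean return of the rest."""
    scores = sorted(score for _, score, _ in rows)
    cutoff = scores[int(quantile * len(scores))]
    top = [ret for _, score, ret in rows if score >= cutoff]
    rest = [ret for _, score, ret in rows if score < cutoff]
    return statistics.fmean(top) - statistics.fmean(rest)


panel = make_panel()

# Hypothetical analytic choices: where to cut the score, and which years to include.
quantiles = [0.5, 0.6, 0.7, 0.8, 0.9]
start_years = [1993, 1995, 1997, 1999]
end_years = [2006, 2008, 2010, 2012]

estimates = []
for q, start, end in product(quantiles, start_years, end_years):
    window = [row for row in panel if start <= row[0] <= end]
    estimates.append(top_minus_rest(window, q))

estimates.sort()
median_estimate = statistics.median(estimates)
largest_estimate = estimates[-1]
print(f"{len(estimates)} specifications")
print(f"median estimate:  {median_estimate:+.3f}")
print(f"largest estimate: {largest_estimate:+.3f}")
```

A researcher who reported only the largest estimate from such a set would be reporting something the other specifications do not support, which is why the full distribution of estimates is the more informative summary.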

Where do they sit on the ladder?

It’s good to see this criticism out there, and as usual it’s frustrating to see such a stubborn response by the original authors. A few years ago we presented a ladder of responses to criticism, from the most responsible to the most destructive:

1. Look into the issue and, if you find there really was an error, fix it publicly and thank the person who told you about it.

2. Look into the issue and, if you find there really was an error, quietly fix it without acknowledging you’ve ever made a mistake.

3. Look into the issue and, if you find there really was an error, don’t ever acknowledge or fix it, but be careful to avoid this error in your future work.

4. Avoid looking into the question, ignore the possible error, act as if it had never happened, and keep making the same mistake over and over.

5. If forced to acknowledge the potential error, actively minimize its importance, perhaps throwing in an “everybody does it” defense.

6. Attempt to patch the error by misrepresenting what you’ve written, introducing additional errors in an attempt to protect your original claim.

7. Attack the messenger: attempt to smear the people who pointed out the error in your work, lie about them, and enlist your friends in the attack.

In this case, the authors of the original article are stuck somewhere around rung 4. Not the worst possible reaction (they've avoided attacking the messenger, and they don't seem to have introduced any new errors), but they haven't reached the all-important step of recognizing their mistake. Not good for them going forward. How can you make serious research progress if you can't learn from what you've done wrong in the past? You're building a house on a foundation of sand.

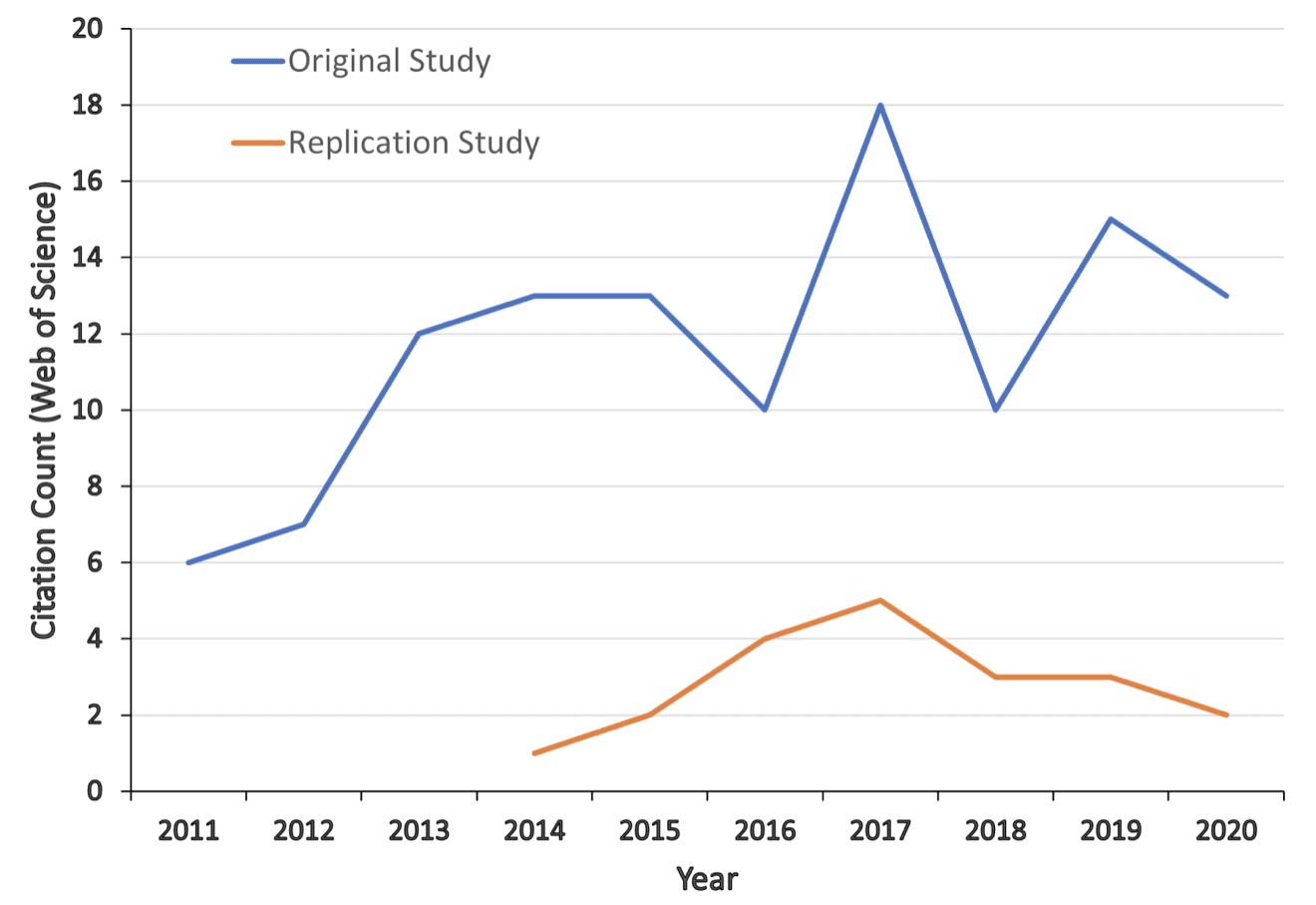

P.S. According to Google, the original article, “Corporate Sustainability: First Evidence on Materiality,” has been cited 861 times. How is it that such a flawed paper has so many citations? Part of this might be the instant credibility conveyed by the Harvard affiliations of the authors, and part of this might be the doing-well-by-doing-good happy-talk finding that “investments in sustainability issues are shareholder-value enhancing.” Kinda like that fishy claim about unionization and stock prices or the claims of huge economic benefits from early childhood stimulation. Forking paths allow you to get the message you want from the data, and this is a message that many people want to hear.