David Rea and Tony Burton write:

The Heckman Curve describes the rate of return to public investments in human capital for the disadvantaged as rapidly diminishing with age. Investments early in the life course are characterised as providing significantly higher rates of return compared to investments targeted at young people and adults. This paper uses the Washington State Institute for Public Policy dataset of program benefit cost ratios to assess if there is a Heckman Curve relationship between program rates of return and recipient age. The data does not support the claim that social policy programs targeted early in the life course have the largest returns, or that the benefits of adult programs are less than the cost of intervention.

Here’s the conceptual version of the curve, from a paper published by economist Heckman in 2006:

This graph looks pretty authoritative but of course it’s not directly data-based.

As Rea and Burton explain, the curve makes some sense:

Underpinning the Heckman Curve is a comprehensive theory of skills that encompass all forms of human capability including physical and mental health . . .

• skills represent human capabilities that are able to generate outcomes for the individual and society;

• skills are multiple in nature and cover not only intelligence, but also non cognitive skills, and health (Heckman and Corbin, 2016);

• non cognitive skills or behavioural attributes such as conscientiousness, openness to experience, extraversion, agreeableness and emotional stability are particularly influential on a range of outcomes, and many of these are acquired in early childhood;

• early skill formation provides a platform for further subsequent skill accumulation . . .

• families and individuals invest in the costly process of building skills; and

• disadvantaged families do not invest sufficiently in their children because of information problems rather than limited economic resources or capital constraints (Heckman, 2007; Cunha et al., 2010; Heckman and Mosso, 2015).

Early intervention creates higher returns because of a longer payoff over which to generate returns.

But the evidence is not so clear. Rea and Burton write:

The original papers that introduced the Heckman Curve cited evidence on the relative return of human capital interventions across early childhood education, schooling, programs for at-risk youth, university and active employment and training programs (Heckman, 1999).

I’m concerned about these all being massive overestimates because of the statistical significance filter (see for example section 2.1 here or my earlier post here). The researchers have every motivation to exaggerate the effects of these interventions, and they’re using statistical methods that produce exaggerated estimates. Bad combination.
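To see how large this exaggeration can be, here is a minimal simulation sketch. The true effect, standard error, and number of studies are made-up values chosen for illustration, not estimates from any of the studies discussed, and the function name is hypothetical.

```python
import random
import statistics


def simulate_significance_filter(true_effect=0.1, std_error=0.2,
                                 n_studies=10_000, seed=1):
    """Simulate noisy studies of one true effect (all values hypothetical)."""
    rng = random.Random(seed)
    # Each study reports the true effect plus normally distributed noise.
    estimates = [rng.gauss(true_effect, std_error) for _ in range(n_studies)]
    # Keep only estimates that are "significant" in the expected direction.
    significant = [e for e in estimates if e / std_error > 1.96]
    return (statistics.mean(estimates),
            statistics.mean(significant),
            len(significant) / n_studies)


mean_all, mean_significant, share_significant = simulate_significance_filter()
print(f"mean of all estimates:         {mean_all:.3f}")
print(f"mean of significant estimates: {mean_significant:.3f}")
print(f"share reaching significance:   {share_significant:.3f}")
```

Any estimate that passes the filter here must exceed 1.96 × 0.2 ≈ 0.39, so the published average is at least about four times the assumed true effect of 0.1, even though the full set of estimates is unbiased.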

Rea and Burton continue:

A more recent review by Heckman and colleagues is contained in an OECD report Fostering and Measuring Skills: Improving Cognitive and Non-Cognitive Skills to Promote Lifetime Success (Kautz et al., 2014). . . . Overall 27 different interventions were reviewed . . . twelve had benefit cost ratios reported . . . Consistent with the Heckman Curve, programs targeted to children under five have an average benefit cost ratio of around 7, while those targeted at older ages have an average benefit cost ratio of just under 2.

But:

This result is however heavily influenced by the inclusion of the Perry Preschool programme and the Abecedarian Project. These studies are somewhat controversial in the wider literature . . . Many researchers argue that the Perry Preschool programme and the Abecedarian Project do not provide a reliable guide to the likely impacts of early childhood education in a modern context . . .

Also the statistical significance filter. A defender of those studies might argue that these biases don’t matter because they could be occurring for all studies, not just early childhood interventions. But these biases can be huge, and in general it’s a mistake to ignore huge biases in the vague hope that they may be canceling out.

And:

The data on programs targeted at older ages do not appear to be entirely consistent with the Heckman Curve. In particular the National Guard Challenge program and the Canadian Self-Sufficiency Project provide examples of interventions targeted at older age groups which have returns that are larger than the cost of funds.

Overall the programs in the OECD report represent only a small sample of the human capital interventions with well measured program returns . . . many rigorously studied and well known interventions are not included.

So Rea and Burton decide to perform a meta-analysis:

In order to assess the Heckman Curve we analyse a large dataset of program benefit cost ratios developed by the Washington State Institute for Public Policy.

Since the 1980s the Washington State Institute for Public Policy has focused on evidence-based policies and programs with the aim of providing state policymakers with advice about how to make best use of taxpayer funds. The Institute’s database covers programs in a wide range of areas including child welfare, mental health, juvenile and adult justice, substance abuse, healthcare, higher education and the labour market. . . .

The August 2017 update provides estimates of the benefit cost ratios for 314 interventions. . . . The programs also span the life course with 10% of the interventions being aimed at children 5 years and under.

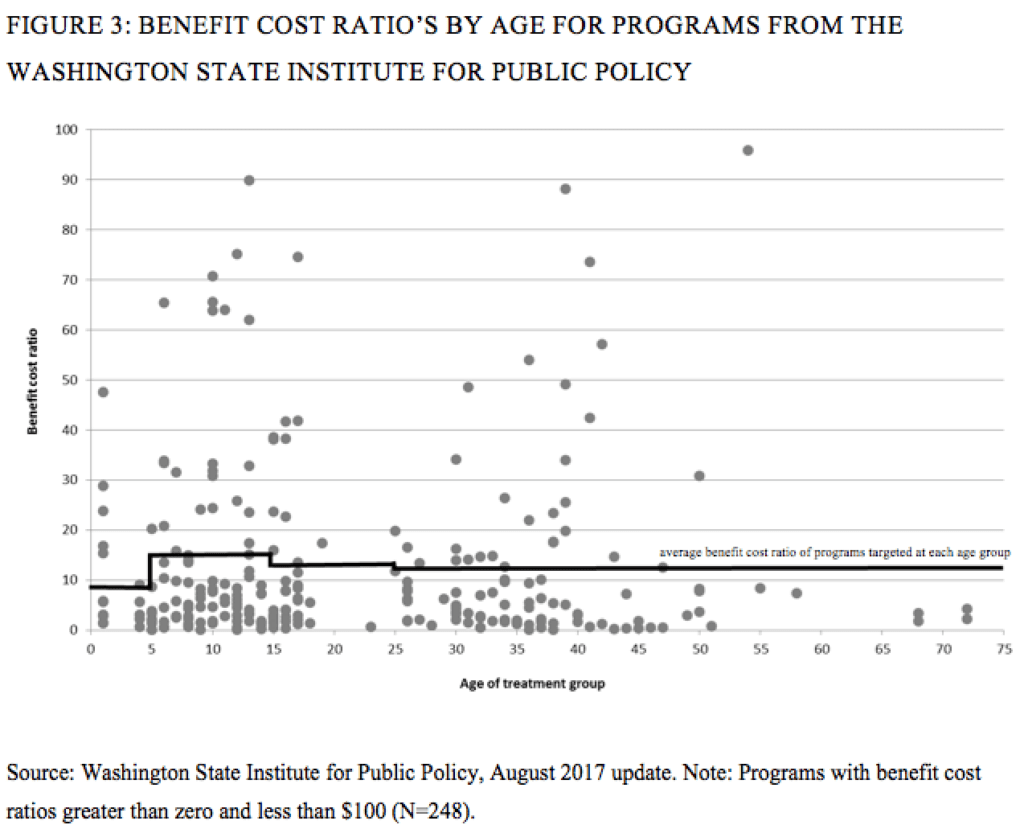

And here’s what they find:

Wow, that’s one ugly graph! Can’t you do better than that? I also don’t really know what to do with these numbers. Benefit-cost ratios of 90! That’s the kind of thing you see with, what, a plan to hire more IRS auditors? I guess what I’m saying is that I don’t know which of these dots I can really trust, which is a problem with a lot of meta-analyses (see for example here).

To put it another way: Given what I see in Rea and Burton’s paper, I’m prepared to agree with their claim that the data don’t support the diminishing-returns “Heckman curve”: The graph from that 2006 paper, reproduced at the top of this post, is just a story that’s not backed up by what is known. At the same time, I don’t know how seriously to take the above scatterplot, as many or even most of the dots there could be terrible estimates. I just don’t know.

In their conclusion, Rea and Burton say that their results do not “call into question the more general theory of human capital and skills advanced by Heckman and colleagues.” They express the view that:

Heckman’s insights about the nature of human capital are essentially correct. Early child development is a critical stage of human development, partly because it provides a foundation for the future acquisition of health, cognitive and non-cognitive skills. Moreover the impact of an effective intervention in childhood has a longer period of time over which any benefits can accumulate.

Why, then, do the diminishing returns of interventions not show up in the data? Rea and Burton write:

The importance of early child development and the nature of human capital are not the only factors that influence the rate of return for any particular intervention. Overall the extent to which a social policy investment gives a good rate of return depends on the assumed discount rate, the cost of the intervention, the intervention’s ability to impact on outcomes, the time profile of impacts over the life course, and the value of the impacts.

Some interventions may be low cost which will make even modest impacts cost effective.

The extent of targeting and the deadweight loss of the intervention are also important. Some interventions may be well targeted to those who need the intervention and hence offer a good rate of return. Other interventions may be less well targeted and require investment in those who do not require the intervention. A potential example of this might be interventions aimed at reducing youth offending. While early prevention programs may be effective at reducing offending, they are not necessarily more cost effective than later interventions if they require considerable investment in those who are not at risk.

Another consideration is the proximity of an intervention to the time where there are the largest potential benefits. . . .

Another factor is that the technology or active ingredients of interventions differ, and it is not clear that those targeted to younger ages will always be more effective. . . .

In general there are many circumstances where interventions to deliver ‘cures’ can be as cost effective as ‘prevention’. Many aspects of life have a degree of unpredictability and interventions targeted at those who experience an adverse event (such as healthcare in response to a car accident) can plausibly be as cost effective as prevention efforts.

These are all interesting points.
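As a rough illustration of how the discount rate, cost, and time profile interact, here is a sketch that compares two hypothetical programs. Every number in it is invented for the example, and the function names are my own, not anything from Rea and Burton or the Washington State database.

```python
def present_value(annual_benefit, first_year, n_years, discount_rate):
    """Discounted sum of a constant annual benefit paid for n_years,
    with the first payment arriving first_year years after the program."""
    return sum(annual_benefit / (1.0 + discount_rate) ** year
               for year in range(first_year, first_year + n_years))


def benefit_cost_ratio(cost, annual_benefit, first_year, n_years, discount_rate):
    """Present value of benefits divided by the up-front cost."""
    return present_value(annual_benefit, first_year, n_years, discount_rate) / cost


# Hypothetical early program: expensive, benefits start 15 years later, last 40 years.
early = dict(cost=10_000, annual_benefit=1_000, first_year=15, n_years=40)
# Hypothetical adult program: cheap, smaller benefits start next year, last 10 years.
adult = dict(cost=2_000, annual_benefit=500, first_year=1, n_years=10)

ratios = {}
for rate in (0.0, 0.03, 0.07):
    ratios[rate] = (benefit_cost_ratio(discount_rate=rate, **early),
                    benefit_cost_ratio(discount_rate=rate, **adult))
    print(f"discount rate {rate:.0%}: early {ratios[rate][0]:.2f}, "
          f"adult {ratios[rate][1]:.2f}")
```

With these made-up inputs the early program has the higher ratio at a zero discount rate and the lower one at 3% and 7%, so a longer payoff period does not by itself guarantee a higher return.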

P.S. I sent Rea some of these comments, and he wrote:

I had previously read your paper ‘The failure of the null hypothesis’, and remember being struck by the para:

The current system of scientific publication encourages the publication of speculative papers making dramatic claims based on small, noisy experiments. Why is this? To start with, the most prestigious general-interest journals—Science, Nature, and PNAS—require papers to be short, and they strongly favor claims of originality and grand importance….

I had thought at the time that this applied to the original Heckman paper in Science.

I think we agree with your point about not being able to draw any positive conclusions from our data. The paper is meant to be more in the spirit of ‘here is an important claim that has been highly influential in public policy, but when we look at what we believe is a carefully constructed dataset, we don’t see any support for the claim’. We probably should frame it more about replication and an invitation for other researchers to try and do something similar using other datasets.

Your point about the underlying data drawing on effect sizes that are likely biased is something we need to reflect in the paper. But in defense of the approach, my assumption is that well conducted meta analysis (which Washington State Institute for Public Policy use to calculate their overall impacts) should moderate the extent of the bias. Searching for unpublished research, and including all robust studies irrespective of the magnitude and significance of the impact, and weighting by each study’s precision, should overcome some of the problems? In their meta analysis, Washington State also reduce a study’s contribution to the overall effect size if there is evidence of a conflict of interest (the researcher was also the program developer).

On the issue of the large effect sizes from the early childhood education experiments (Perry PreSchool and Abecedarian Project), the recent meta analysis of high quality studies by McCoy et al. (2017) was helpful for us.

Generally the later studies have shown smaller impacts (possibly because control groups are now not so deprived of other services). Here is one of their lovely forest plots on grade retention. I am just about to go and see if they did any analysis of publication bias.
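The precision weighting Rea mentions can be sketched with a generic fixed-effect (inverse-variance) pooled estimate. This is a textbook calculation with invented inputs, not a reproduction of the Washington State Institute for Public Policy’s actual procedure, which is not described here in enough detail to replicate.

```python
def pooled_effect(estimates, std_errors):
    """Inverse-variance weighted average and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    return pooled, (1.0 / total_weight) ** 0.5


# Hypothetical inputs: one small, noisy study with a large estimate
# and two larger, more precise studies with small estimates.
estimates = [0.80, 0.10, 0.15]
std_errors = [0.40, 0.05, 0.05]

pooled, pooled_se = pooled_effect(estimates, std_errors)
simple_mean = sum(estimates) / len(estimates)
print(f"unweighted mean: {simple_mean:.3f}")
print(f"pooled estimate: {pooled:.3f} (se {pooled_se:.3f})")
```

Weighting pulls the summary toward the precise studies and away from the noisy outlier. It does not remove a bias that is shared by every study that made it into the pool, which is the concern raised in the post.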

Those benefit to cost ratio numbers are highly suspect to say the least. These numbers make me not trust the work of this institute. They could all turn out to be legitimate numbers, but it’s hard to believe.

I also wish they included data points with negative benefits and benefit cost ratio higher than 100(!) in the figure.

Benefit cost ratios are not measured in dollars, so $100 is sloppy reporting. Anybody can make that mistake, so I don’t think it materially affects my distrust of these results. I would also point out that the norms of publication mean that anyone who claims that Heckman was wrong would face a hurdle getting published in a good journal; hence, they may go to great lengths to not directly contradict him. The lengthy list of unaccounted-for factors that would affect benefit/cost ratios is realistic and helpful, but it kept making me think “forking paths, noisy measurements, omitted factors, etc. etc.” With all those caveats, it’s hard to know what to make of the findings, if anything at all.

It might be interesting to see how the ratios might change over time – the authors state that later studies show smaller impacts (for a subset of the interventions, I believe) but there are a number of factors at work here. The statistical significance filter may dictate more exaggerated claims over time (speculative on my part, but it does seem that the pressure to get people’s attention might work that way). At the same time, if we are learning about what works and what doesn’t, we would expect to see the benefit/cost ratio growing over time. However, diminishing marginal returns might mean the low-hanging fruit have been picked, so the ratios should decrease over time. Then there is the potential effect of improved methods and understanding of good empirical work – which should decrease any upward bias in these ratios.

Of course, the hope of teasing out these myriad time trends is daunting, so I’d be surprised to find anything consistent. Seems like an area to make careers by studying such data over and over again. Harking back to yesterday’s discussion, it would be nice to have all the data and code made public for the endless stream of studies that might be coming. And it is important to know whether these interventions are effective and which might be most effective. But I am not optimistic that we will be able to confirm the Heckman curve or find convincing evidence inconsistent with it. Or – more likely – we will see plenty of evidence both in support of, and inconsistent with, the Heckman curve.

How about some simple reality checks?

If it were actually true that preschool environment is much more important to child development than later schooling (including college), then we would expect to see:

1. A strong aversion to having preschool children cared for by low-quality nannies and daycare facilities. Parents would be terrified of having less-educated nannies care for their children.

2. A strong preference for having mothers take care of their own preschool children, especially among high-income couples where the mothers tend to be more educated. Having an educated mother return to work quickly after birth would be considered borderline child abuse.

3. Far more parental attention directed to preschool care than to college admissions. Famous actresses would offer $500,000 bribes to get the best nannies for their children, but would be unconcerned about what colleges the kids attended.

But we don’t see any of these things. According to Heckman, preschool care is phenomenally important, but nobody acts like it is.

Hmmmmmmm.

Terry:

I don’t buy your reasoning, for the following reasons:

1. I think there is a very strong aversion to having preschool children cared for by low-quality nannies and daycare facilities. There are also affordability constraints. School is free; preschool in most of the U.S. is not, and people make do with what they can.

2. I don’t see why you are so sure that being cared for 24-7 at home by a mother with high education is better for a child than spending 4 or 8 hours, 5 days a week at preschool.

3. I’m not sure that we can learn so much from these extreme cases. Rich people spend their discretionary income on all sorts of things. You could just as well say that Americans care more about boats than food, given that some rich people spend millions of dollars on their yachts.

Finally, you write that “nobody acts like” preschool care is phenomenally important. I don’t know about “phenomenally” but I think that lots of people think very hard about their own children’s preschool care, and lots of policy analysts think very hard about preschool care of others. Through some accidents of history, the United States does not have a universal preschool program in the way that France does, but I don’t think this means that Americans don’t care about it. There are lots of aspects of any society that are not under people’s direct control but can still be important to them.

It’s funny, I guess maybe Terry isn’t an upper middle class parent… but as he was writing his checklist I was saying

1) Check

2) Check

3) Check, or at least plenty of attention to both, the stuff about extreme bribes isn’t necessary for high quality preschool, but I’ve heard of bribes offered to local preschools by some parents.

so, I don’t buy it either.

Andrew and Daniel:

You make some good points. I’m not completely sure I don’t have a point too, although, in trying to clarify my point, I’m having a hard time making it compelling. That’s a bad sign. I’ll leave it at that.

+1 in my experience, there are lots of poor families who can’t afford either stay at home parents or high quality day care, but upper middle class and up you see all of those things, and lots of pressure for moms to be at home.

You are assuming college is about acquiring human capital. I would argue that a substantial part of the returns to college come from signalling.

Andrew’s points above of course are spot on– just a bit further: Are famous actresses paying bribes because prestigious education is important, or because it raises status? Heckman doesn’t argue about status– early childhood investments are the foundation of development.

It’s a general problem that ideas become fixed in social sciences with minimal and often highly unpersuasive evidence behind them. I ran into this recently when reading articles about prostitution. An argument being made is that legalization actually increases human trafficking. Problem: there is one paper from 2012 cited in every case. Any other references are to this paper through secondary pathways. I saw one to a Harvard ‘study’ which was a blog post noting the existence of the 2012 paper. Problem: the paper doesn’t say exactly what they say it says; it says on average they found this result in certain advanced countries, and then they ignore the points that make this possible – like the EU ease of travel for EU members makes Romanian prostitutes coming into Germany a different category than the US. But mostly the paper uses at best estimate ranges whose reliability is superficially stated as sometimes exceeding 100%, like a range of 9,000 that extends to over 18,000, and which are themselves of uncertain worth in any case. But this is the political position so it is the ‘data’ or ‘science’ position.

I particularly note that this is the political position which argues that somehow we can dissuade men from buying sex (and women from selling sex), something that no society has ever managed to do. It would seem that if you’re going to demand such an unlikely behavioral change, you’d have better data to support the need.

I don’t want to suggest I have any special interest in this, but by casual observation I notice that almost every time a news organization runs a story with statistics on human trafficking, they end up walking the numbers back as poorly supported a few days later after sex-work advocates (or others) complain.

Thank you for introducing this piece of research. I don’t think I would have come across it if it weren’t for your post.

I agree with most of the discussion in this post; however, the argument made by the title doesn’t seem to be established by what Rea and Burton show in their paper. It is obvious that the Heckman Curve is not a scatter plot of the data; it is the shape of the parametric function that describes the production of human capital, based on estimates from a series of papers from the work of Heckman and his coauthors. The argument underlying the curve is conditional on many factors: the quality of programs, the care given by parents and teachers, interactions with peers, etc. This is essentially what Rea and Burton describe in the conclusion. On the other hand, the data that, according to the title, isn’t supporting the Heckman Curve, makes an unconditional argument. Or maybe it’s conditional on something as well, but it’s probably not the set of information that Heckman’s models are conditional upon.

It is probably not a surprise that program quality matters, and that programs differ in their quality. The lack of diminishing returns could very well be the result of well-designed and well-executed programs for children older than 5. The calculation of returns on these programs is definitely a subject of debate: what does the long-run return of human capital even mean? The idea seems as philosophical as it is economic. However, Rea and Burton’s results don’t seem to be inconsistent with Heckman’s argument that the conditional effect is larger in early childhood.

I believe that Heckman mainly looks at income as the “long run return.”

This is the problem I see. Heckman is very devoted to the cause of early childhood education; we know that from many of his articles, a number of which have been discussed here. Basically the argument is that if you do what you need to do to help kids be successful in their early years in school, that continues to have ramifications through middle school, high school, and post high school life. Most of his work is focused on the most disadvantaged children, and sometimes it’s about things as simple as reading to them or as complex as changing parenting behavior (which is a key element of Perry Preschool and the Jamaican studies).

With the Heckman curve he’s making the argument for investing in this. Which is fine for the most part except for the implication that we should accept a tradeoff where that early spending replaces spending for older people. The argument is that helping a 5 year old learn to read is less expensive than helping a 45 year old or even a 15 year old. Maybe it is, I don’t know the accounting of it, but I can imagine that it is expensive to have kids be in school and not learn to read and then have to repeat the whole thing in remedial education in college or in an adult literacy program. But that turns into an argument for writing those adults off. So the data in the graph seems to say: don’t write those older people off. Also because we are just seeing the ratio not the absolute amounts, we don’t really know how much the cost is for teaching a 45 year old versus teaching a 5 year old.

What always strikes me as interesting here is that the most successful early childhood programs often are effectively also interventions with the parents. That’s what happened with the health care visitors in Jamaica and that’s what happened in Head Start. Even today I’ve suggested that we try to get every Bronx parent who didn’t graduate from high school into a GED program, both because that would open doors for them but also because it would change their ability to help with homework. I have no idea if that would be cost effective but I know that children of people with a given level of education most often get to at least the same level of education.

A thing I just thought of that no-one else seems to have mentioned or noticed:

The estimate of how effective an early intervention is relies on the fact that it tends to amplify the effects of later interventions. A child who receives pre-school is better able to utilize the elementary school, a child who does well in elementary school gets through high school, a person who does well in high school has opportunities for college, college provides opportunities for better initial jobs, and better careers, etc.

Now, if for example you cut funding for high school or elementary school and put it into pre-school… there is potentially less benefit to amplify. The child is ready to do well in elementary school, but the teachers are overwhelmed or whatever…

I think the ultimate point I’d like to make is that if you want to optimize the use of funds, you have to do it as a whole vector of funds for each program. “the effectiveness of early interventions” is not a constant thing, it’s a thing that’s dependent on other interventions at later ages.
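One way to make this dependence concrete is a toy outcome function in which early and later spending are complements. The Cobb-Douglas form, the exponents, and the spending levels below are purely illustrative assumptions, not a model taken from Heckman or from Rea and Burton.

```python
def outcome(early, later, alpha=0.5, beta=0.5):
    """Hypothetical outcome in which early and later spending are complements."""
    return early ** alpha * later ** beta


def marginal_return_to_early(early, later, step=1e-6):
    """Finite-difference estimate of the extra outcome per extra unit of early spending."""
    return (outcome(early + step, later) - outcome(early, later)) / step


# Same early spending, evaluated with full and with reduced later spending.
with_full_later = marginal_return_to_early(early=1.0, later=1.0)
with_cut_later = marginal_return_to_early(early=1.0, later=0.25)
print(f"marginal return to early spending, later = 1.00: {with_full_later:.3f}")
print(f"marginal return to early spending, later = 0.25: {with_cut_later:.3f}")
```

Under this assumed form, cutting later spending to a quarter halves the marginal return to early spending, so the return to an early intervention is a property of the whole funding vector and not of the early program alone.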

Yes, that’s right: the idea would be that because they are better prepared they are better able to learn. Like any nonlinear process, the impact of something like spending a year doing music in school varies depending on the starting point. This was discussed here previously: https://statmodeling.stat.columbia.edu/2016/11/09/how-effective-or-counterproductive-is-universal-child-care/ Also, all this work is difficult because of the need for incredibly long follow-up. In the Jamaica study, one of the issues I have is that a meaningful number of the subjects were still in school at the time the data for the article were collected. https://statmodeling.stat.columbia.edu/2015/01/12/whats-misleading-phrase-statistical-significance-practical-significance/

Of course, children don’t only learn in school. Preparation in early years could, theoretically, better prepare children as they get older to learn across a wide variety of contexts.

That doesn’t negate your point, but I would suggest it diminishes the impact you might expect to see from diminishing investment in programs for older kids relative to investing in programs for younger kids. Investing in schooling (or other programs) for younger kids would span out and grow in many contexts: diminishing investment in schooling for older kids would diminish the compounding returns in only one of those contexts.

… or I should say which span out and grow from only one of those contexts (obviously, the same phenomenon of expanding contexts for return would also apply to investment in programs for older kids as well).

Oops, Heckman’s paper is about the marginal rate of return. But Rea and Burton only try to measure the averages, which is irrelevant.

Jk:

Different. Not irrelevant.

If the point is to evaluate Heckman’s claims, then only marginal matters. More generally, is there any case where average rather than marginal matters for policy evaluation or recommendations?

Jk:

I agree that marginal is often what’s important for policy. But in evaluation of evidence typically the distinction is not so clear. Almost always when a policy is evaluated it is some mix of aggregate and marginal.

No, not in economics. Replicating a mistake by appealing to common practice is an odd take from you.

This evokes the infamous Neuhauser & Lewicki, “What do we gain from the sixth stool guaiac?” NEJM. 1975 paper. They find the average cost per detection was around $2400 if six tests were done, and thought that average was appealing. But the marginal cost per detection from that 6th test was in fact $47,000,000.

Fantastic. I’ve never come across this article before. Thanks.

I haven’t looked up the article yet, but are you saying that they found the average of 6 positive things was $2400 but the 6th one alone was $47 million? You realize that’s not logically possible, right?

All right from reading just the abstract (I am not going to buy this article) I am guessing they found that if you do 5 in a row per person you spend $X and detect N cancers per year, and if you do 6 in a row per person you spend $X+47M and detect N+1 cancers per year… is that about the sum of it?
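The reading guessed at above, where the average is taken over all detections and the marginal figure is the extra cost divided by the extra detections from one more test, can be checked with a small sketch. The numbers are invented for illustration (100 true cases, each test catching 90% of the cases still undetected, 100 cost units per round of testing) and are not taken from the Neuhauser and Lewicki paper.

```python
def average_and_marginal_costs(cumulative_cost, cumulative_detected):
    """Return (average, marginal) cost per detection after each successive test."""
    rows = []
    prev_cost, prev_detected = 0.0, 0.0
    for cost, detected in zip(cumulative_cost, cumulative_detected):
        average = cost / detected
        marginal = (cost - prev_cost) / (detected - prev_detected)
        rows.append((average, marginal))
        prev_cost, prev_detected = cost, detected
    return rows


# Hypothetical screening program with six sequential tests.
n_tests = 6
cumulative_cost = [100.0 * k for k in range(1, n_tests + 1)]
cumulative_detected = [100.0 * (1.0 - 0.1 ** k) for k in range(1, n_tests + 1)]

rows = average_and_marginal_costs(cumulative_cost, cumulative_detected)
for k, (average, marginal) in enumerate(rows, start=1):
    print(f"test {k}: average cost {average:12.2f}, marginal cost {marginal:12.2f}")
```

Because almost every case is found by the first few tests, the average cost per detection stays low while the marginal cost of the sixth test is several orders of magnitude higher, so the two figures are not logically inconsistent.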

I don’t have access to this either, but it claims the article is seriously flawed.

https://www.sciencedirect.com/science/article/abs/pii/016762969090004M

Kaye Brown and Colin Burrows, The sixth stool guaiac test: $47 Million that never was. J Health Econ vol 9 issue 4, 1990

Not to question your points about the statistical issues at hand…

I do think it’s worth underscoring that an important point not be lost in this discussion (I’m not suggesting that you’ve lost it, I think you acknowledge it directly):

There is a basic theory at work here – that investment in children’s development will have compounding returns. Our ability to measure and prove that compounding effect will necessarily always be limited by the impossible task of controlling for the myriad variables in play for measuring cause and effect in educational or developmental research (the Hawthorne effect alone presents an overwhelming obstacle).

Along with evaluating the numbers that examine effect, the basic theory in itself should always be a primary consideration. What I would hope is that no one confuse the inadequacies in numerical evaluation of that effect with evidence (or at least proof) that the theory in itself is falsified.

I’m curious if any readers (particularly those who might think that Heckman’s research creates a compounding growth in benefit whereas none exists in reality) might present a plausible theory as to why investment in education wouldn’t have compounding returns, with a logical conclusion that the earlier the investment, the more dramatic the returns relative to later investment. Of course, that might be like asking for proof of a negative?

+1 I think there is a body of work that says that the direct impact of high quality preschool on academics “wears off” by late elementary school, but that the impact on other things like early pregnancy and incarceration is still evident in adulthood. In particular Heckman really focuses on very high need, low income children. The massive impact on salary is $18k per year versus ~$27k per year, both still low by middle class standards but the latter in the US above the poverty level for a family and maybe meaning you don’t have housing or food insecurity most months. Before Head Start, well off and many middle class families sent their children to nursery school and poor children got their first experience of school at kindergarten or first grade. It’s still true today that without subsidized programs low income families would not have access to quality childcare programs.

Elin:

I think there are a lot of reasons to support universal preschool or something like it, even if the effects that Heckman et al. claim are not there.

Joshua: yes, there are myriad variables at play, but it is widely known that positive impacts from early childhood education and care (ECEC) are very strongly associated with facilitating parents’ employment. That is to say that ECEC has many fundamental differences to education of 5+ year-old children, the care aspect being key. This is a different perspective than assuming that ECEC is just the same as other kinds of education, the only difference being that it starts early in life.

You seem to equate education with investing in children’s development. Early development is crucial and some subsets of children benefit greatly from ECEC for their cognitive and non-cognitive development. That does not mean that children usually need ECEC for their normal or even some sort of improved development in that age.

I have never quite got my head around the conceptual basis of Heckman’s claim. Presumably it’s that – ON AVERAGE – interventions earlier in life are more cost-effective than those later in life (with a declining level of cost-effectiveness commensurate with age). Is that about right?

If so, I’m really not sure where this leads a decision-maker, concerned (hopefully) with the cost-effectiveness of the next intervention option relative to (i) what’s already in place; AND (ii) all other available intervention options wanting a chance (including with all the forward-looking uncertainty as to the level and profile of both costs and benefits).

In that real-world decision-making setting, including with local context and political economy considerations, how can ANYTHING AT ALL be generalised about the most valuable next intervention? And what about equity considerations and procedural fairness, to give legitimate consideration to the interests of ALL age groups? There can be no pre-determination (assuming good institutional arrangements) that any population sub-group could or should have a de facto priority over others, absent the assessment work required to determine what next intervention choice would, all things considered, be best.

It also seems to me that the Heckman proposition accords quite closely with the popular claim that ‘prevention is better than cure’ (i.e. a preference to invest earlier in ‘prevention’ rather than later in ‘treatment’). But this also strikes me as folly. Consider these definitions of prevention and treatment:

– Prevention … an action now with the expectation or hope that it avoids or reduces some undesirable outcomes from now into the future; and

– Treatment … an action now with the expectation or hope that it avoids or reduces some undesirable outcomes from now into the future.

I’m being provocative to use the same words but I think these definitions are absolutely robust (but welcome challenge).

So, while the Rea and Burton paper looks at Heckman’s proposition using empirical evidence, I just can’t see from first principles that it holds much water. (Great comments above, by the way, thanks all for your interesting and valuable contributions.)