David “Xbox poll” Rothschild and I wrote an article for Slate on how political prediction markets can get things wrong. The short story is that in settings where direct information is not easily available (for example, in elections where polls are not viewed as trustworthy forecasts, whether because of problems in polling or anticipated volatility in attitudes), savvy observers will deduce predictive probabilities from the prices of prediction markets. This can keep prediction market prices artificially stable, as people are essentially updating them from the market prices themselves.

Long-term, or even medium-term, this should sort itself out: once market participants become aware of this bias (in part from reading our article), they should pretty much correct this problem. Realizing that prediction market prices are only provisional, noisy signals, bettors should start reacting more to the news. In essence, I think market participants are going through three steps:

1. Naive over-reaction to news, based on the belief that the latest poll, whatever it is, represents a good forecast of the election.

2. Naive under-reaction to news, based on the belief that the prediction market prices represent best information (“market fundamentalism”).

3. Moderate reaction to news, acknowledging that polls and prices both are noisy signals.

Before we decided to write that Slate article, I’d drafted a blog post which I think could be useful in that I went into more detail on why I don’t think we can simply take the market prices as correct.

One challenge here is that you can just about never prove that the markets were wrong, at least not just based on betting odds. After all, an event with 4-1 odds against should still occur 20% of the time. Recall that we were even getting people arguing that those Leicester City odds of 5,000-1 were correct, which really does seem like a bit of market fundamentalism.
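The arithmetic behind those figures is easy to check. Here is a minimal sketch, assuming fractional odds quoted as "N-1 against" and ignoring any bookmaker margin; the function name `implied_probability` is just an illustrative choice:

```python
from fractions import Fraction


def implied_probability(odds_against: int, stake: int = 1) -> Fraction:
    """Probability implied by odds of `odds_against`-to-`stake` against an event.

    Assumes fair odds with no bookmaker margin, which real markets do not offer.
    """
    return Fraction(stake, odds_against + stake)


# The three odds mentioned in the post.
for odds in (4, 5, 5000):
    p = implied_probability(odds)
    print(f"{odds}-1 against -> {p} (about {float(p):.4%})")
```

So 4-1 against corresponds to 1/5, 5-1 against to 1/6, and 5,000-1 against to 1/5001.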

OK, so here’s what I wrote the other day:

We recently talked about how the polls got it wrong in predicting Brexit. But, really, that’s not such a surprise: we all know that polls have lots of problems. And, in fact, the Yougov poll wasn’t so far off at all (see P.P.P.S. in above-linked post, also recognizing that I am an interested party in that Yougov supports some of our work on Stan).

Just as striking, and also much discussed, is that the prediction markets were off too. Indeed, the prediction markets were more off than the polls: even when polling was showing consistent support for Leave, the markets were holding on to Remain.

This is interesting because in previous elections I’ve argued that the prediction markets were chasing the polls. But here, as with Donald Trump’s candidacy in the primary election, the problem was the reverse: prediction markets were discounting the polls in a way which, retrospectively, looks like an error.

How to think about this? One could follow psychologist Dan Goldstein who, under the heading, “Prediction markets not as bad as they appear,” argued that prediction markets are approximately calibrated in the aggregate, and thus you can’t draw much of a conclusion from the fact that, in one particular case, the markets were giving 5-1 odds to an event (Brexit) that actually ended up happening. After all, there are lots of bets out there, and 1/6 of all 5:1 shots should come in.

And, indeed, if the only pieces of information available were: (a) the market odds against Brexit winning the vote were 5:1, and (b) Brexit won the vote; then, yes, I’d agree that nothing more could be said. But we actually do have more information.

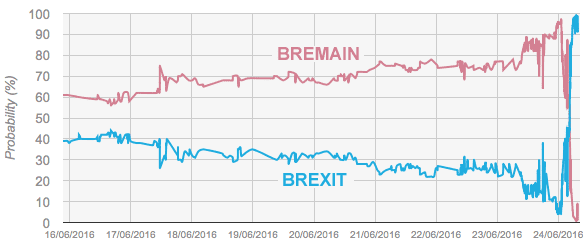

Let’s start with this graph from Emile Servan-Schreiber, from a post linked to by Goldstein. The graph shows one particular prediction market for the week leading up to the vote:

It’s my impression that the odds offered by other markets looked similar. I’d really like to see the graph over the past several months, but I wasn’t quite sure where to find it, so we’ll go with the one-week time series.

One thing that strikes me is how stable these odds are. I’m wondering if one thing that went on was a feedback mechanism in which the betting odds reify themselves.

It goes like this: the polls are in different places, and we all know not to trust the polls, which have notoriously failed in various British elections. But we do watch the prediction markets, which all sorts of experts have assured us capture the wisdom of crowds.

So, serious people who care about the election watch the prediction markets. The markets say 5:1 against Leave. Then there’s other info, the latest poll, and so forth. How to think about this information? Informed people look to the markets. What do the markets say? 5:1. OK, then that’s the odds.

This is not an airtight argument or a closed loop. Of course, real information does intrude upon this picture. But my argument is that prediction markets can stay stable for too long.

In the past, traders followed the polls too closely and sent the prediction markets up and down. But now the opposite is happening. Traders are treating market odds as correct probabilities and not updating enough based on outside information. Belief in the correctness of prediction markets causes them to be too stable.

We saw this with the Trump nomination, and we saw it with Brexit. Initial odds are reasonable, based on whatever information people have. But then when new information comes in, it gets discounted. People are using the current prediction odds as an anchor.
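The anchoring story can be illustrated with a toy model. This is only a sketch under invented assumptions, not a model fitted to any market: each period the price is a weighted average of the previous price and an outside signal, and `anchor_weight` (a hypothetical parameter) is how much traders trust the existing price.

```python
def price_path(initial_price, signal, anchor_weight, steps):
    """Toy update rule: each period the price moves a fraction
    (1 - anchor_weight) of the way from its current value toward `signal`."""
    prices = [initial_price]
    for _ in range(steps):
        prices.append(anchor_weight * prices[-1] + (1 - anchor_weight) * signal)
    return prices


# Hypothetical numbers: the market starts near 5:1 against (about 0.17)
# while outside information (say, the polls) points to roughly a coin flip.
anchored = price_path(0.17, 0.5, anchor_weight=0.95, steps=7)
responsive = price_path(0.17, 0.5, anchor_weight=0.5, steps=7)

print("anchored:  ", [round(p, 2) for p in anchored])
print("responsive:", [round(p, 2) for p in responsive])
```

With a heavy anchor the price is still far from the signal after a week of updates, while with a lighter anchor it gets there within a few steps. The model says nothing about which weight is right; it only shows how trust in the current price produces stability.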

Related to this point is this remark from David Rothschild:

I [Rothschild] am very intrigued by this interplay of polls, prediction markets, and financial markets. We generally accept polls as exogenous, and assume the markets are reacting to the polls and other information. But, with growth of poll-based forecasting and more robust analytics on the polling, before release, there is the possibility that polls (or, at least what is reported from polls) are influenced by the markets. Markets were assuming that there were two things at play: (1) social-desirability bias to over-report leaving (which we saw in Scotland in 2014), and (2) uncertain voters would break stay (which seemed to happen in the polling in the last few days). And, while there was a lot of concern about the turnout of stay voters (due to stay voters being younger), the unfortunate assassination of Jo Cox seemed to have assuaged the markets (either by rousing the stay supporters to vote or tempering the leave supporters out of voting). Further, the financial markets were, seemingly, even more bullish than the prediction markets in the last few days and hours before the tallies were complete.

One of these markets, PredictIt, which is incorporated into the PredictWise probabilities, is very illiquid, with pretty large fees placed on money going into and coming out of the market. Even if the market participants were completely rational and fully incorporated the information from news into their bets, these fees would make PredictIt less responsive to news than other markets. Just as an example of the kind of problems this illiquidity can cause, earlier this year PredictIt had primary candidate markets where the probabilities of each of the candidates winning the election didn’t sum to one. When they changed the rules so that you can buy multiple candidates in one bet, this problem fixed itself fairly quickly. I’d be interested to see whether PredictIt is worse than Betfair in terms of the muted response to news.

One of the markets that makes up the PredictWise score, PredictIt, is very illiquid, with large fees placed on money entering or leaving the market and on any returns within the market. Even if people were completely rational and fully incorporated news into their bets, these fees would lead to a delayed response from PredictIt to news. As an example of how bad the illiquidity problem at PredictIt is, earlier this year the “probabilities” of all Democratic primary candidates winning the election summed to about 1.5 instead of 1. When the market structure was changed so that betting on multiple candidates became less costly in terms of fees, the “probabilities” quickly came back to pretty close alignment with the laws of probability. I’d be interested to see if PredictIt responds more slowly to news than Betfair.
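To make the "summed to about 1.5" point concrete, here is a small sketch with made-up candidate prices (the names and numbers are hypothetical, not actual PredictIt quotes). Dividing each price by the total is one common way to turn such prices into numbers that behave like probabilities, though it assumes the excess is spread proportionally across candidates.

```python
def normalize(prices):
    """Rescale contract prices so they sum to 1 (proportional adjustment)."""
    total = sum(prices.values())
    return {name: price / total for name, price in prices.items()}


# Hypothetical prices for mutually exclusive outcomes; they sum to 1.5.
raw_prices = {"Candidate A": 0.90, "Candidate B": 0.45, "Candidate C": 0.15}
normalized = normalize(raw_prices)

print("raw total:", round(sum(raw_prices.values()), 2))
for name, p in normalized.items():
    print(name, round(p, 2))
```

In a market with low fees, a raw total well above 1 would let someone sell all the contracts and lock in a gain, which is why the commenter ties the discrepancy to fees and illiquidity.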

My impression of people who participate in prediction markets is that they would tend to support Remain and not support Trump. This is not supported fact, just my general impressions of the demographics of these groups. If true, perhaps the prediction markets fail in these cases because the participants are not representative of the population.

Assuming that these participants benefit from Remain and non-Trump outcomes, we should probably see these people hedge using these markets. This would over-estimate the (real-world) chances of the Exit and Trump scenarios.

Assuming that these folks have a financial interest in a Remain and non-Trump outcome, we should expect these participants to use the market to hedge their risks. This would actually lead to an observed probability that overstates the chances of Exit and a Trump election. This would be akin to how put options usually overstate the chances of a stock market crash.

Perhaps. Do you know of any sources that provide evidence that prediction markets are used to hedge financial risks? My limited exposure to people who participate in such markets is that they use it for speculative, not hedging, purposes, and thus they tend to overweight their biases not their risks.

As to hedging the risk of Brexit, for example, a participant would first need an accurate estimate of how much they would lose from Brexit before using a prediction market to hedge. No one I know in these markets does such a thing, but I could imagine a large corporation at least having such an estimate. Whether they would trust a prediction market to hedge that risk, I have no idea. Thus, my interest in whether you know of any references.

I can’t find it on a quick search, but I thought you had written before in the blog (and in research?) on the general point that polls are far too volatile in general. My take-away (recollection only, so maybe I am imagining it, but I’ve internalized it anyway!) is that one should very heavily discount momentary (even if “momentary” is “this month”) news, fads, scandals, etc relative to far more slow moving voting preferences.

Is my memory wrong? Even if not I can sort of see how this current concern is different, but it still seems broadly at odds.

Sorry, was not meant to be a reply but a head comment/question.

Bxg:

Yes, I have written that; for example, I wrote “Every latest shift in the polls is news. But it shouldn’t be,” recently in the Monkey Cage. That’s related to my point 1 at the top of the above post. One should not over-react to news. But I think that recently, with Brexit, we were seeing point 2: people were under-reacting to news.

“One challenge here is that you can just about never prove that the markets were wrong, at least not just based on betting odds. After all, an event with 4-1 odds against, should still occur 20% of the time.”

I understand it’s not possible for one particular event but taking several events it should be possible to know if markets are good predictors or not. What does the literature say about it?

Wolfers and Zitzewitz have research on this: http://www.nber.org/papers/w10504
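The aggregate check raised in the question above can be sketched in a few lines: group many contracts by price and compare each group's average price with how often the events actually happened. The data below are invented purely for illustration, and `calibration_table` is a hypothetical helper, not code from the cited paper.

```python
from collections import defaultdict


def calibration_table(records, n_bins=5):
    """Bin (price, happened) pairs by price and return, for each non-empty bin,
    (bin index, count, mean price, observed frequency)."""
    bins = defaultdict(list)
    for price, happened in records:
        index = min(int(price * n_bins), n_bins - 1)  # a price of 1.0 goes in the top bin
        bins[index].append((price, happened))
    table = []
    for index in sorted(bins):
        rows = bins[index]
        mean_price = sum(p for p, _ in rows) / len(rows)
        frequency = sum(1 for _, h in rows if h) / len(rows)
        table.append((index, len(rows), mean_price, frequency))
    return table


# Invented data: six contracts priced at 1/6 (5:1 against), one of which came in,
# and four contracts priced at 0.75, three of which came in.
records = [(1 / 6, True)] + [(1 / 6, False)] * 5 + [(0.75, True)] * 3 + [(0.75, False)]
table = calibration_table(records)
for index, count, mean_price, frequency in table:
    print(f"bin {index}: n={count}, mean price={mean_price:.3f}, observed={frequency:.3f}")
```

A market is well calibrated in this sense when mean price and observed frequency line up in every bin. With real data each bin would need many events before the comparison means much, and good aggregate calibration still would not show that the odds for any single event were right.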

How do you treat the following? I have been collecting stories about Brexit and nearly all seek to excuse the vote as though it was an entirely irrational result or is an assertion of “Empire” by old white people or is the common slob angry at the upper class globalist or is the literally ignorant who voted leave but really wanted remain to win (sort of an intended impotence argument) and so on, despite the fact the pre-vote polls showed nearly half voting to leave. Talk about a feedback loop: it survives the vote! I’ve seen a few pieces that talk about estimates of the large number of MPs who voted to leave, as well as academics, etc. but this seems to be a case where the pre and post discussion is absolutely dominated by those who wanted a particular result. And while that may be racism or “Empire” or anger or whatever, it also may be other things, like disbelief it will really hurt Britain over time and belief it may help Britain (or as I’ve seen argued, a sense that growth in power of Brussels has a cost), as reflected in the actual vote that in the aggregate was a coin flip about whether staying or leaving was the best choice.

For me, as I noted in an earlier post of yours on this topic, the a-ha moment was The Economist saying they adjusted their model, which accurately showed leave winning, because they couldn’t believe the result (so they simply reduced the leave vote). If that’s not an example of bias clouding judgement, what is?

If we had prediction markets not only for the binary outcome but on the spread as well, they would be much more agile and would help to move the binary outcome markets as well.

Betfair had markets on various “over/under” spread bets, and markets on various turnout levels as well. Also by the day of the vote, the Betfair prices rarely deviated much from the probabilities implied by sterling spot.

I’ve always found it interesting when people say prediction markets are “wrong”. That’s nonsensical. In a liquid market with diverse participants, prediction markets are simply the reflection of the public’s beliefs about the probability of certain outcomes. The public can be wrong, yes, but the market can’t. It’s just a vehicle for translating aggregate public opinion to probability.

Is it a “corporations are people” type of argument? If so, it is trivial. But a prediction market is a specific mechanism for accumulating the “public’s belief”, which can work well or less well. Also, the “public’s belief” in the abstract is a poorly defined concept. Who believes, in particular? If beliefs differ, how do we weigh them? What about people who have no opinion? And on, and on.

D.O.:

Yes. When we say that the market is giving wrong odds, we are implicitly saying that the bettors in the market are giving wrong odds.

In the short run you can “never prove that the markets [bets] were wrong” https://twitter.com/d_spiegel/status/746037753742245893

I commented on this issue briefly here in the aftermath of the vote: https://medium.com/kiln-s-cauldron/brexit-and-the-forecasting-business-part-1-shorter-term-issues-9e04bad3c010#.bi3yq84t8

Yes, holding on to original assumptions for too long is part of the problem, but the real problem is that the people in prediction markets were largely not people in the key voting areas. They had no knowledge to bring. As such the markets just reflected the polls – and the polls were no good, for a variety of reasons. (I cover that in my Medium piece.)

However, what is important is to realise that this generalises to a lot of events where prediction markets are used. They are simply aggregators of second hand information – and thus no wiser than that information. Knowing when a market actually contains extra information is not easy, but it is key to knowing whether or not it’s worth paying any extra attention to.

The author should simply stick with his observation that the markets aren’t wrong as far as we know. If something is trading at 25%, it should result roughly 25% of the time.

Lastly, the markets aren’t predicting who will win or lose. It’s a marketplace for speculators to place bets. Think New York Stock Exchange. For example, I might bet on “X” winning even if I think “X” will lose. Why? Because I might think the odds of “X” winning are higher than where the odds are trading. For example, 30% vs. 20%. That’s a mismatch in spread that arbitrage traders will make all day.
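The 30% vs. 20% example works out as follows. This is a minimal sketch assuming a contract that pays 1 if the event happens and 0 otherwise, with no fees; `expected_profit` is an illustrative name.

```python
def expected_profit(price, believed_probability, payout=1.0):
    """Expected profit per contract, under the bettor's own belief, from buying
    at `price` a contract that pays `payout` if the event occurs (fees ignored)."""
    return believed_probability * payout - price


# Market price 0.20, bettor's belief 0.30, as in the comment above.
profit = expected_profit(price=0.20, believed_probability=0.30)
print(f"expected profit per contract: {profit:.2f}")
print(f"expected return on stake: {profit / 0.20:.0%}")
```

Note that this is a bet with positive expected value only if the 30% belief is right, and the bettor still loses the stake most of the time, so it is not a riskless arbitrage in the usual sense.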

If the markets were this blatantly wrong, they would correct almost immediately – assuming there is liquidity. It’s easy money that fools could capitalize on. Markets ain’t nearly as dumb as this author suggests – whether it be in stocks, bonds or in prediction markets.

Mark:

You are demonstrating what is sometimes called “market fundamentalism,” and this attitude of yours illustrates how markets can go wrong! As the Brexit time series illustrates, the markets do not correct almost immediately, in part, we suspect, because of bettors like yourself, who are so sure the markets can’t be wrong. Finally, markets themselves aren’t “dumb” or “smart”; they represent the aggregate decisions of bettors, who can indeed be more or less well informed.

But you have not even begun to prove the markets were “wrong”. Your entire premise is based on a hunch without any backing whatsoever.

For all we know the accurate odds were 5% instead of 20%. That is the point.

To add the obvious, watch the race tracks tonight. 5-1 odds will win all the time. And odds will react to news in all different ways – including the timing on news.

That doesn’t begin to prove the odds or the markets were “wrong”. History has proven them to be remarkably hard to beat in fact. Other markets are no different assuming they are liquid with lots of bettors.

Was it not widely reported that there were many more bets on Leave than on Remain? Is it not possible that it was simply a few rich folks making big bets one way which “drowned out” the other signal? Or is that not how these odds work?