Jonathan Sterne sent me this opinion piece by Stephan Lewandowsky and Dorothy Bishop, two psychology researchers who express concern that the movement for science and data transparency has been abused.

It would be easy for me to dismiss them and take a hard-line pro-transparency position—and I do take a hard-line pro-transparency position—but, as they remind us, there is a long history of the political process being used to disparage scientific research that might get in the way of people making money. Most notoriously, think of the cigarette industry for many years attacking research on smoking, or more recently the NFL and concussions.

Rather than saying we want transparency but we don’t want interference with research, I’d rather say we want transparency and we don’t want interference with research.

Here are some quotes from their article that I like:

Good researchers do not turn away when confronted by alternative views.

The progress of research demands transparency.

We strongly support open data, and scientists should not regard all requests for data as harassment.

The status of data availability should be enshrined in the publication record along with details about what information has been withheld and why.

Blogs and social media enable rapid correction of science by scientists.

Scientific publications should be seen as ‘living documents’, with corrigenda an accepted — if unwelcome — part of scientific progress.

Freedom-of-information (FOI) requests have revealed conflicts of interest, including undisclosed funding of scientists by corporate interests such as pharmaceutical companies and utilities.

Researchers should scrupulously disclose all sources of funding.

Journals and institutions can also publish threats of litigation, and use sunlight as a disinfectant.

Issues such as reproducibility and conflicts of interest have legitimately attracted much scrutiny and have stimulated corrective action. As a result, the field is being invigorated by initiatives such as study pre-registration and open data.

Here are some quotes I don’t like:

Research is already moving towards study ‘pre-registration’ (researchers publishing their intended method and analysis plans before starting) as a way to avoid bias, and the same strictures should apply to critics during reanalysis.

All who participate in post-publication review should identify themselves.

I disagree with the first statement. Pre-registration is fine for what it is, but I certainly don’t think it should be a requirement or stricture.

And I disagree with the second statement. If a criticism is relevant, who cares if it’s made anonymously? For example, there seem to be several erroneous calculations of test statistics and p-values in the work of social psychologist Amy Cuddy. Once someone points these out, they can be assessed independently. On the other hand, it could make sense for pre-publication reviewers to be identified. The problem is that pre-publication reviews are secret. So if someone makes a pre-publication criticism or endorsement of a paper, it can’t be checked. It wouldn’t be bad at all for such reviewers to have to stand by their statements.

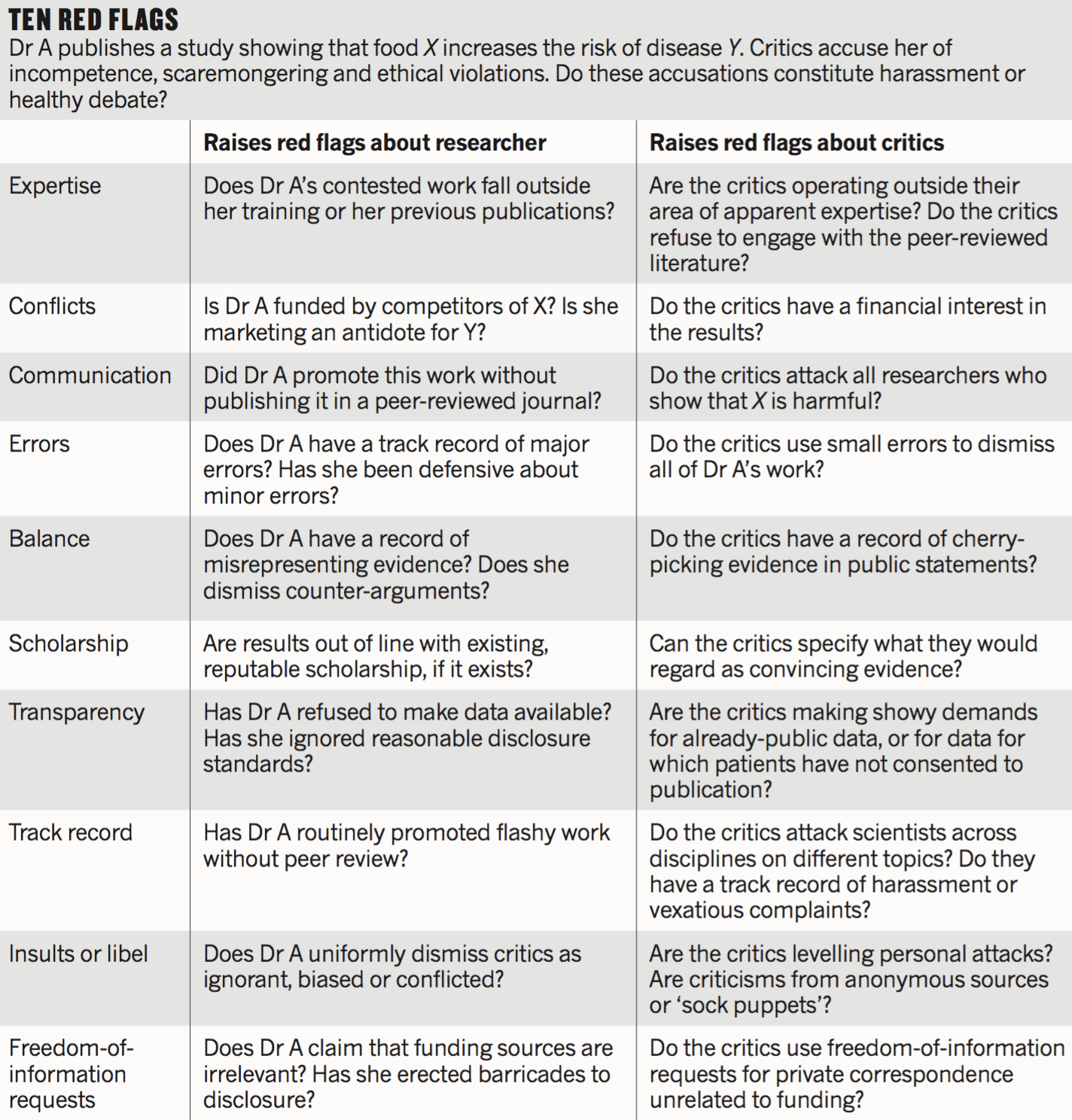

And here’s Lewandowsky and Bishop’s summary chart:

This mostly seems reasonable although I might make some small changes. For example, I don’t like the idea that it’s a red flag if Dr A promotes work that has not been peer-reviewed (a concern that is listed twice in the above table). If you do important work, by all means promote it right away, don’t wait on the often-slow peer-review process! We do our research because it’s important, and it seems completely reasonable to share it with the world.

What’s important to me is not peer review (see recent discussion) but transparency. If you have a preprint on Arxiv with all the details of your experiment, that’s great: promote your heart out and let any interested parties read the article. On the other hand, if you’re not gonna tell people what you actually did, I don’t see why we should be reading your press releases. That was my problem with that recent gay gene hype: I don’t care that this silly n=47 paper wasn’t peer reviewed; I care that there was no document anywhere saying what the researchers actually did (nor was there a release of the data).

What the researcher said regarding a critical journalist was:

I would have appreciated the chance to explain the analytical procedure in much more detail than was possible during my 10-minute talk but he didn’t give me the option.

There was time for a press release with an interview and a publicity photo, but no time for writing up the method or posting the data. That’s a flag that it’s safe to wait a bit before writing about the study.

The “peer-reviewed journal” thing is just a red herring. I completely disagree with the idea that we should raise a red flag when a researcher promotes work before publication. I for one do not want to wait on reviewers to share my work.

Also, one more thing. The above table includes this “red flag”: “Are the critics levelling personal attacks? Are criticisms from anonymous sources or ‘sock puppets’?” That’s fine, but then let’s also raise a red flag when researchers engage in this same behavior. Remember John Lott? His survey may have been real, but his use of sock puppets to defend his own work does give him a credibility problem.

In any case, I think Lewandowsky and Bishop do a service by laying out these issues.

Full disclosure: Some of my research is funded by the pharmaceutical company Novartis.

We may not compel pre-registration but it may be a good move to have papers declare prominently at the top in a standardized format:

“This work was pre-registered” or “This work was NOT pre-registered”.

This work was pre-registered*

“Will transparency damage science?” – No.

*http://statmodeling.stat.columbia.edu/2016/05/02/on-deck-this-week-50/

https://en.wikipedia.org/wiki/Betteridge's_law_of_headlines

The inside vs outside area of expertise issue is basically just a way for people to dismiss other people. Entire fields of research can go off into the weeds and only outsiders can detect it (hello Power Pose)…

Take criticism at face value independent of who it comes from. Let the criticism stand on its content.

I do not think the issue is just that. There are several people operating outside their field, who are really obnoxious, including engineers who think they can disprove the theory of evolution and physicists who think they revolutionized our understanding of cancer. And don’t forget the cranks swamping mathematicians claiming to have found flaws in classical theorems. I remember one person who submitted proof after proof for the real numbers being countable.

A statistician is in the comfortable situation that he is rarely outside his field of expertise as long as you look at any sort of empirical science. I don’t think that the people criticizing the power pose things were operating outside their field.

It would be nice to have the time to evaluate every argument on its merits, but some sort of filtering is usually required.

Erik: But this is a downside that resulted from my making a comment regarding a comment by the same Jonathan Sterne.

Personal names removed.

Sent: Wednesday, June 27, 2012 3:45:32 AM

Subject: Cochrane SMG discussion list and recent email exchange

Dear Dr O’Rourke,

We have taken the decision to suspend you from the Cochrane Statistical Methods Group discussion list, SMGlist.

We have previously warned you about sending confrontational emails to the list. Your most recent posts to the list about noninferiority trials were not politely worded, and we have received several off-list adverse comments from long-standing list members. We are concerned that the tone of such exchanges detracts from the usual collaborative spirit and may deter younger list members from contributing and may lead to resignations from the email list. As you have not modified the tone of your posts since previous warning, we feel we had no option but to suspend you from the list.

The SMG co-convenors,

My emails to get Jonathan’s thoughts regarding this banning (or the methodological concerns I raised) were never returned.

(Now we have met and interacted many times before this incident.)

Well, to judge one would need to read these “not politely worded” emails he refers to……

By removing the co-convenors’ names it might have seemed Jonathan sent the email; he was not one of the co-convenors.

Here was my email (I think it would be unfair for me to post Jonathan’s here):

“With all due respect, I believe I need to be very direct if not impolite about this.

I think this is ludicrous – “no reason to view results from noninferiority trials differently”

In noninferiority trials (as in all indirect comparisons) Rx versus Placebo estimates require one to make up data (exactly as it occurred in earlier historical Placebo controlled trials) but rather than being explicit about that making up of the data (e.g. calling it an informative prior) vague assumptions are stated that would justify the making up as essentially risk free. Unfortunately there is no good way to check those assumptions and they are horribly non-robust.

Exactly how much less worse noninferiority studies are than observational studies is a good question – the earlier multiple bias analysis literature, especially R Wolpert did address this but it seems to have been forgotten. Also Stephen Senn has written a somewhat humorous paper for drug regulators (with huge historical trials the made up SE will almost be zero)

– hopefully they wont miss the point.

Cheers

Keith”

Standards of politeness are very high in that group.

I am reminded of the old usenet group sci.stat.consult, where we saw what unmoderated statisticians can say to each other under aliases.

The Politeness-vs-Correctness tradeoff?

Rahul: Not sure that’s the trade-off here.

There is this possibility – “ritual human sacrifice serves to cement power structures—that is, it signifies who sits at the top of the social hierarchy” http://www.scientificamerican.com/article/how-human-sacrifice-propped-up-the-social-order/

(Of course, the evidence for that claim is, I believe fairly lacking.)

In any case, I hope Mister Jonathan Sterne (or his long-standing list members) never venture to post on the Linux Kernel Mailing List or else they might get traumatized and scarred for life.

https://lkml.org/lkml/2012/12/23/75

“Mauro, SHUT THE FUCK UP!

It’s a bug alright – in the kernel. How long have you been a maintainer? And you *still* haven’t learnt the first rule of kernel maintenance?

If a change results in user programs breaking, it’s a bug in the kernel. We never EVER blame the user programs. How hard can this be to understand?

To make matters worse, commit f0ed2ce840b3 is clearly total and utter CRAP even if it didn’t break applications.

………..

Shut up, Mauro. And I don’t _ever_ want to hear that kind of obvious garbage and idiocy from a kernel maintainer again. Seriously.

……..

Seriously. How hard is this rule to understand? We particularly don’t break user space with TOTAL CRAP. I’m angry, because your whole email was so _horribly_ wrong, and the patch that broke things was so obviously crap. The whole patch is incredibly broken shit. It adds an insane error code (ENOENT), and then because it’s so insane, it adds a few places to fix it up (“ret == -ENOENT ? -EINVAL : ret”).

…..

The fact that you then try to make *excuses* for breaking user space, and blaming some external program that *used* to work, is just shameful. It’s not how we work.

Fix your f*cking “compliance tool”, because it is obviously broken.

And fix your approach to kernel programming.” —Linus Torvalds on the Linux Kernel Mailing List

But most of these cases are actually filtering on the content, not the person.

Sure, it’s an “Engineer” who claims to “disprove the theory of evolution”, but the reason we can ignore it is not because it’s an Engineer. It’s because the theory of evolution is pretty strongly established, so a claim of “disproving” it will have to withstand extreme scrutiny to be credible, and most of these claims are laughable on their face.

Cranks swamping mathematicians with proofs of the countability of the real line can be ignored unless they provide a specific reason why existing proofs of the uncountability are wrong.

It’s not the person, it’s the message. In cases where a particular person has proven themselves to be a crank in the past, sure, ignore them. But ignoring all people outside a small class? That’s a recipe for non-science.

@Daniel

You write:

“Take criticism at face value independent of who it comes from. Let the criticism stand on its content.”

Isn’t that akin to saying: Discard your priors. Just look only at this current data independently? Isn’t that almost perfectly antithetical to your typical Bayesian stand?

Why should we ignore whatever we can impute from the criticizer’s past record of correctness or quality?

As I said above “In cases where a particular person has proven themselves to be a crank in the past, sure, ignore them. But ignoring all people outside a small class? That’s a recipe for non-science.”

So prior information about the individual is certainly useful, but information like “he’s an Engineer, ignore what he says about Biology” is imputing much more from the prior information than is justified.

Also, it ignores the likelihood !!! that is, your prior may say “gee engineers rarely know much about biology” but then failing to actually look at the data (ie. the content of the argument they provide) is decidedly NOT Bayesian unless your prior on their relevance is close to a delta function at 0.

It’s actually an example of the illogic of Frequentist inference!

How often do engineers have valuable insights into biology? Less than 5% of the time. Therefore we reject the hypothesis that this is a valuable insight (p < 0.05), and don't bother even reading it.

But you could get a similar inference from a fully Bayesian hierarchical model with a profession level and an individual level.

Once you have encountered enough engineers saying crap (just an example), the posterior probability of the next engineer being worth listening to is also vanishingly small.

Your frequentist method also seems incorrect. Would it not be more correct to argue that given the argument being valid (null hypothesis) the probability of it having been made by an engineer or a more extreme profession (hah) is smaller than 0.05, thus rejecting your null hypothesis?

Erik: once you encounter enough engineers saying crap…

So, first off let’s remember that one engineer saying crap over and over N times is not the same as N engineers all saying crap every time…

So, if you take a random sample of N engineers and they ALL say something totally wrong about biology, how big does N have to be before you can really treat the frequency of engineers knowing something useful about biology as “vanishingly small”?

Let’s just call “vanishingly small” frequency f=0.01 in a binomial model. Using a Bayesian model in which our prior is beta(.5,.5), at what point does f > 0.01 have probability < 0.01? (English translation: we're 99% sure that less than 1% of engineers have anything useful to say on biology.)

The calculation in R:

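# Posterior P(f > 0.01) after all N sampled engineers turn out wrong, starting from a beta(.5,.5) prior
engrfun <- function(N) pbeta(0.01, 0.5, N + 0.5, lower.tail = FALSE)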

> engrfun(330)

[1] 0.009981177

So by all means, if you use an RNG to randomly sample 330 engineers from the rolls of people who graduated from universities with engineering degrees, and EVERY SINGLE ONE has wacked-out ideas about biology… then yeah, you can treat them as an information-free source on biological questions.
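For anyone who wants to see what the R one-liner above is computing, here is a sketch of the conjugate update behind it. It assumes, as the comment does, a binomial model with a Beta(0.5, 0.5) prior on f and a random sample in which none of the N engineers says anything useful; the symbol k (the number of useful engineers in the sample) is introduced here only for illustration.

```latex
\begin{aligned}
f &\sim \mathrm{Beta}(0.5,\ 0.5), \qquad k \mid f \sim \mathrm{Binomial}(N, f), \\
f \mid k = 0 &\sim \mathrm{Beta}(0.5 + 0,\ 0.5 + N) = \mathrm{Beta}(0.5,\ N + 0.5), \\
\Pr(f > 0.01 \mid k = 0) &= 1 - I_{0.01}(0.5,\ N + 0.5)
\end{aligned}
```

Here I_x(a, b) is the regularized incomplete beta function, which is what pbeta evaluates; lower.tail=FALSE returns the upper-tail probability directly. The required N is then the smallest sample size at which this upper tail drops below 0.01.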

I think the typical calculation is closer to "I heard these 3 or 4 engineers trolling over and over at the climate science blog and so now I treat all engineers as cranks on climate issues" or whatever, substitute your favorite in/out groups.

Excising the “true if and only if peer reviewed” meme from academics might be a tough slog.

Yes!! All great points. One nitpick: “If a criticism is relevant, who cares if it’s made anonymously.” OK, I would say we should use *pseudonyms*, but not total anonymity. The distinction is important because we want people to care about their reputation, even if it’s a pseudonym. This protects the person’s identity but still attaches a track record and reputation to the claims being made. Just like on this blog. Commenters have a history, and you know who is usually very insightful, not so insightful, possible troll, etc. I don’t know who “Rahul” is, but I know he makes good comments and I pay more attention than if the comment was signed “Anonymous”.

I’m worried that otherwise noise will swamp signal. But maybe I’m wrong and my fears are overblown.

I actually like that one Anonymous, but not the other ones. I like some of the Jonathans, but I can never remember which ones. Daniel Lakeland is a troll.

I worked briefly in News, and late nights on the assignment desk we would get a lot of faxes (yeah, I know, faxes…). 2 out of 3 would be real press releases or tips, and 1 out of 3 would be from crazy conspiracy theorists. Maybe 1 out of 100 times it would take me more than 10 seconds to know which was which. Sure, some were dead giveaways because they mixed satanic symbols with semi-pornographic photos or wildly-racist cartoons. But others… you just sort of figured out this wasn’t Edward Snowden, it was just a guy who hadn’t been off his mother’s couch in 27 years.

Conclusion: reputations associated with real people can be helpful sometimes, but reputation is not a necessary condition for judging the quality of a thought. Criticism will always be a noisy process. The balance is between the costs and benefits of attempting to ban anonymity in the critical pre- and post- review process (benefits: reputation filtering; costs: potentially silencing useful criticism) versus embracing it (benefits: more willingness to engage in criticism; costs: potentially decreases average criticism quality). Pseudo-anonymous-poster shock conclusion: I lean towards letting people disseminate their criticism in whatever form they choose.

https://s-media-cache-ak0.pinimg.com/236x/c6/22/62/c6226219cf75186c90c7e025c42e1eef.jpg

This photo shows what happens when you move to LA LA land, the land of sunshine, lawns, and leafblowers, and the resultant airborne pollen.

So sad. At first I thought you had reduced yourself to visual-sock-puppeting. But if you look at the nose… Good G-d LA has been hard on you.

The Lake Land Troll in his natural habitat and all his glory:

http://www.gettyimages.com/detail/photo/wooden-troll-at-hornindal-lake-high-res-stock-photography/103578323

At least you’ve kept your smile! You should smile more. You have a pretty smile. Oh, sorry – trolling the wrong thread: http://statmodeling.stat.columbia.edu/2016/05/18/bias-against-women-in-academia/

Is this the same Stephan Lewandowsky who took a botched online “survey” of 1145 participants, and based on the fact that out of 10 of the participants who believed the moon landing was faked 3 also happened to be skeptical of climate change claims, concluded that people who “reject climate science” are hopeless conspiracy theorists? (“NASA Faked the Moon Landing – Therefore (Climate) Science is a Hoax”, Lewandowsky et al. (2013)). He’s the kind of psychological researcher that you’ve been warning us against. Hopefully this is just someone with the same name.

No, I don’t think so. I think that the conclusion was that conspiracy ideation was a predictor for science denial, not that the rejection of science was a predictor for conspiracy ideation.

Other than the fact that it was not botched, yes. It was an excellent example of what kooks could do. They actually managed to get the study retracted. Possibly by legal threats, I don’t remember?

The brouhaha did have the advantage that the resulting uproar turned into a second paper. I was rather envious: Lewandowsky had managed to generate a self-sustaining data collection method.

Some of Lewandowsky’s research tends to point out the irrationalities, etc., of climate deniers and, strangely enough, they don’t always appreciate this.

The Moon Landing paper was not retracted. I think you’re thinking of “Recursive Fury”.

Yes, that was the one with the survey of climate denier blogs, was it not? I always have trouble remembering which of the three is which and I don’t have copies handy to check at the moment.

Yes, it was a survey of blog comments.

Yes, it was botched. First, it was a convenience sample online. Second, it was supposed to include skeptical sites but in fact did not. (As a result, it only appeared on vociferously anti-skeptical sites, and thus had some number of jokers pretending to be skeptics.) Third, as I said, he made a conclusion based heavily on 3 participants out of 1145.

There were no skeptical threats or lawsuits. He did junk science to reach a foregone conclusion. The second paper was as much junk as the first — and was retracted straight up. The fact that you use the term “climate denier” indicates how closely you looked at his techniques.

Again, this is the kind of research that Andrew has been harping on for months now: convenience-sampled, not-sweating-the-details, psychology thrown into a Structural Equation Model and called proof of the views that the psychologist had going in.

This has been hashed out many times before and my opinion differs from yours.

I guess we will have to differ.

Would be nice if you could at least correct your claim that it ‘concluded that people who “reject climate science” are hopeless conspiracy theorists’, because I do not think it is correct. Would at least indicate that you did look closely at the paper you’ve decided is the kind of paper Andrew has been harping on for months now.

There was a good discussion of this on

http://blogs.nottingham.ac.uk/makingsciencepublic/2016/03/30/transparency-lewandowsky-bishop-socialscience/

starting with a post by Warren Pearce, Sarah Hartley & Brigitte Nerlich. TL;DR: they hated it. As Eli pointed out to them, things are not simple.

I like this quote “… it should be to increase public understanding of and therefore trust in the social process through which those facts are scientifically determined. Science does not offer the final word, and its public authority should not be based on the myth that it does”

Most social processes should not be trusted and science tries very hard to fix just that!

Dorothy Bishop has made some related points here: http://rsos.royalsocietypublishing.org/content/3/4/160109 in response to our open science initiative.

Somewhat off topic, maybe everyone has seen this, but this widely praised (on Twitter) 2014 compendium of advice on writing code for social science research is … revealing.

http://web.stanford.edu/~gentzkow/research/CodeAndData.pdf

“Each step [in a regression study] is typically run *hundreds of times* as the analysis is developed and refined.” (P. 7) Almost like steps on, I don’t know, a path of some kind.

I’ve scanned the document and it is really difficult to figure out what that means. The line is repeated quite often.

Half the time it reads like the authors are out for a nice stroll down a garden path and the other half of the time it sounds like they are discussing code development.

I’m pretty sure on some projects I’ve run code several hundred times while debugging or because I need to feed that part of an analysis into a new module and so on.

Still, it would have been nice of them to be a bit clearer.

What bothers me about Lewandowsky and Bishop’s red flags is the ad hominem focus – the concern with the motives of the people involved, in determining whether information should be shared. Such flags can’t be taken into account when transparency is routine. Applicant-by-applicant sharing would license obvious injustices, such as denying access to a known critic of a particular line of research, on the grounds of prior bias or bad manners (indeed, if such is not their precise intention, it is hard to understand Lewandowsky and Bishop’s scheme at all; this is contrary, however, to much of what Bishop has written on the subject elsewhere). Perhaps even worse, it makes sharing into a labored, quasi-judicial, process.

Exactly such a labored quasi-judicial process has been playing out over several years in the UK, concerning data from the PACE trials on chronic fatigue syndrome. If you’re unfamiliar with that controversy, read Richard Smith’s intervention in the BMJ (link below). Lewandowsky and Bishop’s proposal is framed in general terms, but by virtue both of its timing and of the authors’ professional links, it is right in the middle of the PACE mess.

Eli Rabett is of course correct that there be real Tygers. But the answer to that surely lies in clarity about the zone in which routine transparency is expected, with that zone stopping well before email archives.

http://blogs.bmj.com/bmj/2015/12/16/richard-smith-qmul-and-kings-college-should-release-data-from-the-pace-trial/
