Brian Nosek pointed me to this 2013 paper by Theodora Zarkadi and Simone Schnall, “‘Black and White’ thinking: Visual contrast polarizes moral judgment,” which begins:

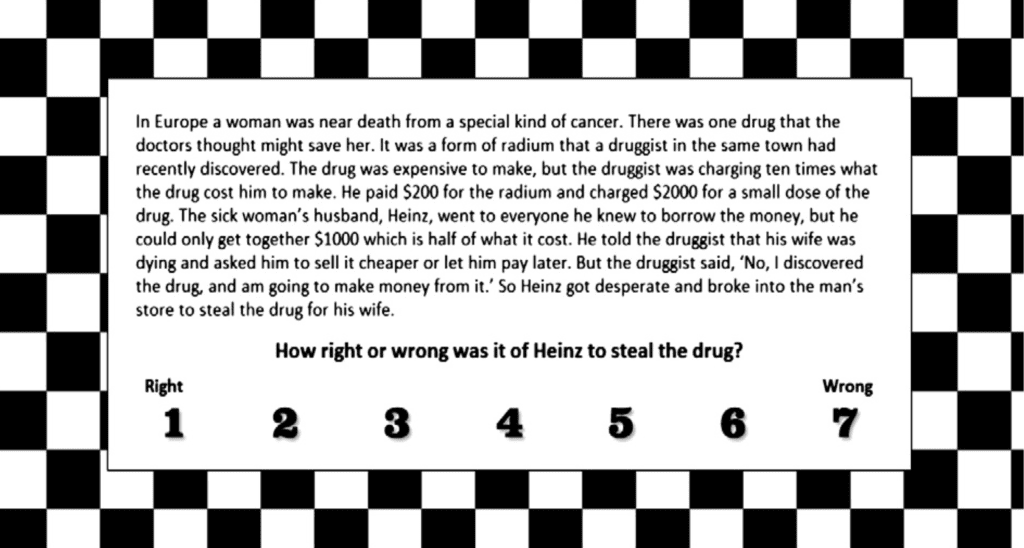

Recent research has emphasized the role of intuitive processes in morality by documenting the link between affect and moral judgment. The present research tested whether incidental visual cues without any affective connotation can similarly shape moral judgment by priming a certain mindset. In two experiments we showed that exposure to an incidental black and white visual contrast leads people to think in a “black and white” manner, as indicated by more extreme moral judgments. Participants who were primed with a black and white checkered background while considering a moral dilemma (Experiment 1) or a series of social issues (Experiment 2) gave ratings that were significantly further from the response scale’s mid-point, relative to participants in control conditions without such priming.

I don’t know whether to trust this claim, in light of the equally well-documented finding, “Blue and Seeing Blue: Sadness May Impair Color Perception.” Couldn’t the Zarkadi and Schnall result be explained by an interaction between sadness and moral attitudes? It could go like this: Sadder people have difficulty with color perception so they are less sensitive to the different backgrounds in the images in question. Or maybe it goes the other way: sadder people have difficulty with color perception so they are more sensitive to black-and-white patterns.

I’m also worried about possible interactions with day of the month for female participants, given the equally well-documented findings correlating cycle time with political attitudes and—uh oh!—color preferences. Again, these factors could easily interact with perceptions of colors and also moral judgment.

What a fun game! Anyone can play.

Hey—here’s another one. I have difficulty interpreting this published finding in light of the equally well-documented finding that college students have ESP. Given Zarkadi and Schnall’s expectations as stated in their paper, isn’t it possible that the participants in their study simply read their minds? That would seem to be the most parsimonious explanation of the observed effect.

Another possibility is the equally well-documented himmicanes and hurricanes effect—I could well imagine something similar with black-and-white or color patterns.

But I’ve saved the best explanation for last.

We can most easily understand the effect discovered by Zarkadi and Schnall in the context of the well-known smiley-face effect. If a cartoon smiley face flashed for a fraction of a second can create huge changes in attitudes, it stands to reason that a chessboard pattern can have large effects too. The game of chess, after all, was invented in Persia, and so it makes sense that being primed by a chessboard will make participants think of Iran, which in turn will polarize their thinking, with liberals and conservatives scurrying to their opposite corners. In contrast, a blank pattern or a colored grid will not trigger these chess associations.

Aha, you might say: chess may well have originated in Persia but now it’s associated with Russia. But that just bolsters my point! An association with Russia will again remind younger voters of scary Putin and bring up Cold War memories for the oldsters in the house: either way, polarization here we come.

In a world in which merely being primed with elderly-related words such as “Florida” and “bingo” causes college students to walk more slowly (remember, Daniel Kahneman told us “You have no choice but to accept that the major conclusions of these studies are true”), it is no surprise that being primed with a chessboard can polarize us.

I can already anticipate the response to the preregistered replication that fails: There is an interaction with the weather. Or with relationship status. Or with parents’ socioeconomic status. Or, there was a crucial aspect of the treatment that was buried in the 17th paragraph of the published paper but turns out to be absolutely necessary for this phenomenon to appear.

Or . . . hey, I have a good one: The recent nuclear accord with Iran and rapprochement with Russia over ISIS has reduced tension with those two chess-related countries, so this would explain a lack of replication in a future experiment.

P.S. The title of this post refers to the famous “50 shades of gray” paper by Nosek, Spies, and Motyl, who discovered an exciting and statistically significant finding connecting political moderation with perception of shades of gray. As Nosek et al. put it at the time:

The results were stunning. Moderates perceived the shades of gray more accurately than extremists on the left and right (p = .01). Our conclusion: political extremists perceive the world in black-and-white, figuratively and literally. . . . Enthused about the result, we identified Psychological Science as our fall back journal after we toured the Science, Nature, and PNAS rejection mills. . . .

Just to be safe, though, they decided to do their own preregistered replication:

We ran 1,300 participants, giving us .995 power to detect an effect of the original effect size at alpha = .05.

And, then, the punch line:

The effect vanished (p = .59).

P.P.S. I’m sometimes told it’s not politically savvy to mock. Maybe so. I strongly believe in the division of labor. We each contribute according to our abilities. I can fit statistical models, do statistical theory, and mock. Others can run experiments, come up with psychological theories, and form coalitions. It’s all good. I think mockery is what a lot of this himmicane stuff deserves, but I have a lot of respect for the Uri Simonsohns of the world who keep a straight face and engage personally with the authors of work that they criticize. Indeed, that careful and polite strategy can really work, and I’m glad they are going that route, even if I don’t have the patience to do it that way myself.

Most awesome post of the year!

“The effect vanished.”

The saddest words in academia?

Good summary of why I hate statistics: people are too easily fooled by randomness.

In spots this post reads like a wonderfully funny riff that A.J. Liebling, or S.J. Perelman, might have penned had they lived long enough to see this kind of research or read Andrew’s blog posts for a while.

Andrew, if you tire of statistics, you might find a new line of work at The New Yorker, assuming you were willing to slightly alter your name to, say, A.S.J. Gelman.

Some studies just make a mockery of science.

Except it is no laughing matter. How many more serious yet less “surprising” studies went unfunded, unpublished, undone? How many careers delayed, or cut short?

Fernando:

Yes, and one of the authors of this study is Simone Schnall, who we discussed here, where I wrote:

Andrew, you refer to a chessboard here, when the authors clearly specify a checkered background. The effect obviously wouldn’t replicate if an entirely different board is used.

Which suggests an extension experiment to test an old hypothesis: does viewing a red and black checkerboard affect a poor young man’s decision to join the clergy?

Andrew, do you feel that Larry Summers’ claim that ‘There are idiots – look around’ is invalidated by his failure to preregister?

Sean:

Preregistration would not have saved this study. It merely would’ve made it much harder for the authors to have obtained publishable results.

The Theodora Zarkadi and Simone Schnall paper contains this statement:

“One hundred-thirty individuals (mean age=28.42, SD=11.36, 73 female) participated in a survey on social issues in exchange for 15 cents as payment. Participants were recruited through Amazon’s Mechanical Turk, an online marketplace where people complete cognitive tasks. Recent research (Buhrmester, Kwang, & Gosling, 2011) has shown that this method of conducting online experiments provides high quality data that are at least as reliable as those obtained through more traditional data-collection methods.”

Fifteen cents, Mechanical Turk and online experiments lead to “reliable high quality data”? Is this meant as a parody on faith-based data collection?

Paul:

I love that “at least as reliable” bit.

As far as I am aware, respondents to this blog receive no payment. Would a fifteen cents stimulant improve the level of comments? On the other hand, would ponying up fifteen cents in order to make a comment lead to high(er) quality data?

Paul:

I donate to each commenter more than 15 cents worth of my time in reading what they wrote. My attention is valuable! By commenting here, secure in the knowledge that I will read your comment, you have the opportunity of influencing the future direction of statistics! Also you get the thrill of distracting me away from my real work.

Actually, in psycholinguistics it has been proven beyond a shadow of doubt that MTurk experiments are even more robust than lab experiments, and I believe people even do reading studies (measuring reading/reaction times) over the internet. Also, they are super-fast to run. It’s a win-win situation, there’s no downside.

speaking from experience, i can say that this is quite a brilliant parody of what the peer review process in psychology actually looks like! no joke. mock out with yer c**k out, i say.

Comic sendup of this entire class of moral conundrum problem:

http://existentialcomics.com/comic/106

Glad to see you’re including some polysci in your anti-social psych harangues, even if it’s just a link (cartoon smiley face).

Numeric:

Actually, the original cartoon smiley-face work wasn’t so bad. I don’t really think it told us much, but it was an experiment: it was what it was. My real problem was when Bartels hyped it to advance big claims which were never made in the original study.