There’s been some discussion recently about an experiment done in Montana, New Hampshire, and California, conducted by three young political science professors, in which letters were sent to 300,000 people, in order to (possibly) affect their voting behavior. It appears that the plan was to follow up after the elections and track voter turnout. (Some details are in this news report from Dylan Scott.)

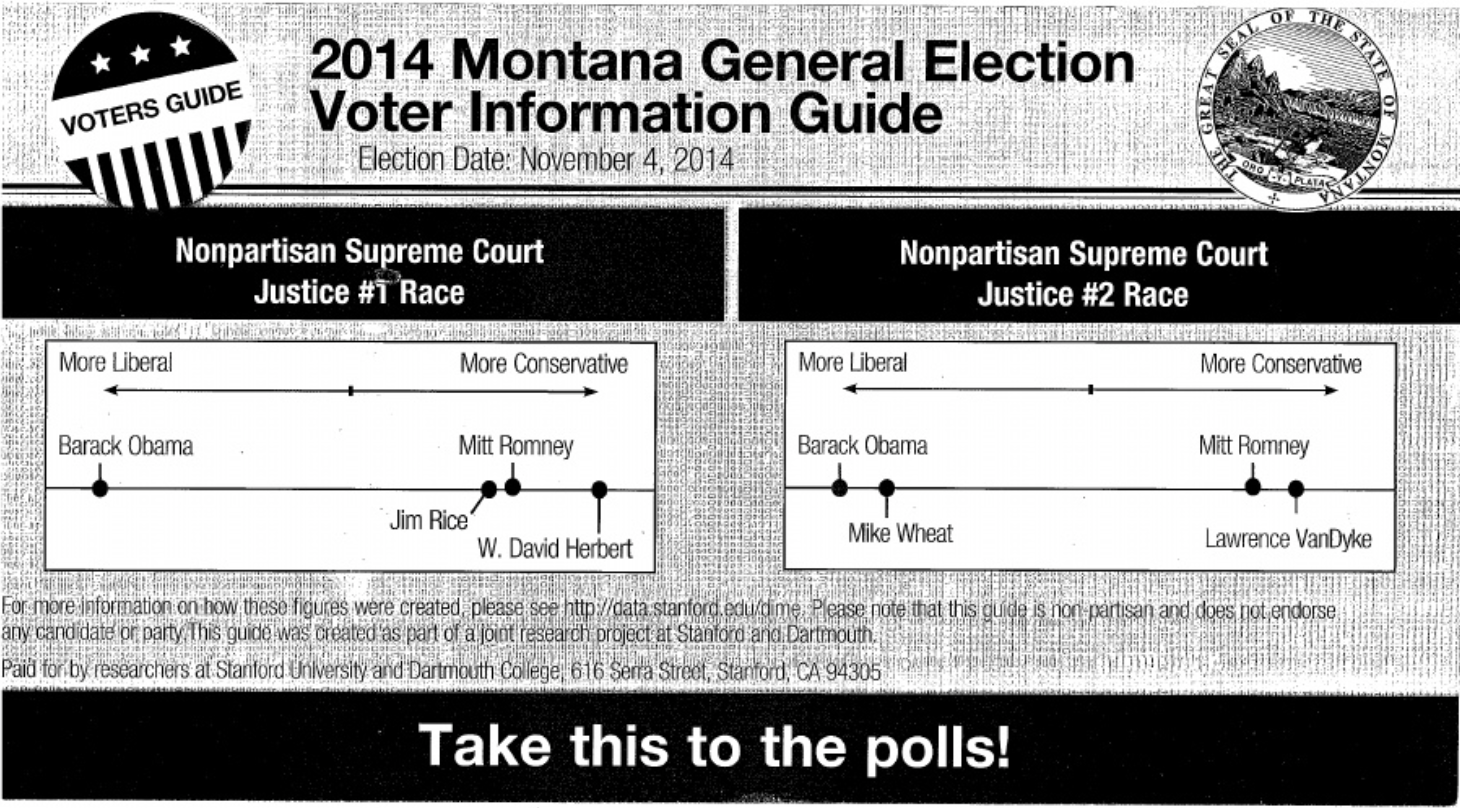

The Montana experiment was particularly controversial, with disputes about the legality and ethicality of sending people an official-looking document with the Montana state seal, the official-looking title of “2014 Montana General Election Voter Information Guide,” and the instructions, “Take this to the polls!”

The researchers did everything short of sending people the message, “I am writing you because I am a prospective doctoral student with considerable interest in your research,” or, my all-time favorite, “Our special romantic evening became reduced to my wife watching me curl up in a fetal position on the tiled floor of our bathroom between rounds of throwing up.”

There’s been a bunch of discussion of this on the internet. From a statistical standpoint, the most interesting reaction was this, from Burt Monroe commenting on Chris Blattman’s blog. Burt writes:

In my “big data guy” role, I have to ask why 100,000? As far as I can tell there’s exactly one binary treatment, or is there some complicated factorial interaction that leads to a number that big? For that to be the right power number, the treatment effect has to be microscopic and the instrument has to be incredibly weak — that is, it has to be a poorly designed experiment of a substantively unimportant question. Conversely, if the team believes the treatment will work, 100000 is surely big enough to actually change outcomes. . . .

Related to this is the following quote from the Dylan Scott news article, from a spokesman for one of the universities involved, that the now-controversial study “was not intended to favor any particular candidate, party, or agenda, nor is it designed to influence the outcome of any race.”

But a treatment that affects voter turnout will, in general, influence the outcome of the race (the intervention in Montana involved giving voters an estimate of the ideological leanings of the candidates in a nonpartisan (or, according to Chris Blattman, a “technically nonpartisan”) judicial election, along with the prominently-displayed recommendation to “Take this to the polls!”).

So I’m not sure how they could say the study was not designed to influence the outcome—unless what was done was to purposely pick non-close elections where a swing of a few hundred votes wouldn’t make a difference in who won. Even then, though, the election could be affected if people are motivated by the flyer to come out to vote in the first place, assuming there are any close elections in the states or districts in question.

I will set aside any ethical questions about the extent to which academic researchers should be influencing elections or policy, via experiments or other activities. Chris Blattman makes a good point that ultimately in our research we typically have some larger political goal, whether it’s Robert Putnam wanting to increase Americans’ sense of local community, or Steven Levitt wanting more rational public policy, or various economists wanting lower tariff barriers, and research is one way to attain a political end. The election example is a bit different in that the spokesman for one of the universities involved is flat-out denying the intention of any agenda, but Chris might say that, whether or not that spokesman knows what he’s talking about, having an agenda is basically ok.

To put it another way: Suppose the researchers in question had done this project, not using foundation funding, but under the auspices of an explicitly political organization such as the Democratic or Republican party, and with an avowed goal of increasing voter turnout for one candidate in particular. (One of the researchers involved in this study apparently has political consulting company, so perhaps he is already doing such a study.) Lots of social scientists work with political or advocacy organizations in this way, and people don’t generally express any problems with that. To the extent that the study in question is, ummm, questionable, it’s the idea that it violates an implicit wall separating research with a particular political or commercial purpose, and research with no such purpose. I have mixed feelings here. On one hand, Chris is right that no such sharp boundary exists. On the other hand, I understand how voters in Montana might feel a bit like “lab rats” after hearing about this experiment—even while a comparable intervention done for one party or another would be considered to be just part of modern political advertising.

But I don’t want to get into that here. What I do want to discuss is the statistical question raised by Burt Monroe in the above quote: why a sample size of 100,000 for that Montana experiment? As Monroe points out, 100,000 mailers really could be enough to swing an election. But if you need 100,000, you must really be studying small effects, or maybe localized effects?

When we study public opinion it can be convenient to have a national sample size of 50,000 or more, in order to get enough information on small states interacted with demographic slices (see, for example, this paper with Yair). And in our Xbox survey we had hundreds of thousands of respondents, which was helpful in allowing us to poststratify and also to get good estimates day by day during the pre-election period.

In this election-mailers study it’s not clear why such a large sample was needed, but I’m guessing there was some good reason. The study was expensive and I assume the researchers had to justify the expense and the sample size. A bad reason would be that they expected a very small and noisy effect—as Burt said, in that case it’s not clear why it would be worth studying in the first place. A good reason might be that they’re interested in studying effects that vary by locality. Another possibility is that they are studying spillover effects, the idea that sending a mailer to household X might have some effect on neighboring households. Such a spillover effect is probably pretty small so you’d need a huge sample size to estimate it, but then again if it’s that small it’s not clear it’s worth studying, at least not in that way.

P.S. Full disclosure: I do commercial research (Stan is partly funded by Novartis) and my collaborator Yair Ghitza works at Catalist, a political analysis firm that does work for Democrats. And my office is down the hall from that of Don Green, who I think has done work with Democrats and Republicans. So not only do I accept partisan or commercial work in principle, I support it in practice as well. I’m not close enough to the details of such interventions to know if it’s common practice to send out fake-official mailers, but I guess it’s probably done all the time.

P.P.S. No worry about the prof who was last seen lying curled up in the fetal position on his bathroom floor. He landed on his feet and is a professor at the Stanford Business School. Amusingly enough, he is offering “breakfast briefings” (no throwing up involved, I assume) and studies “how employees can develop healthy patterns of cooperation.”

Never knew that the Government has a guy called “Commissioner of Political Practices”

Well, at least they didn’t call him “Commissar of Political Practices”.

Needing to account for local effects is a good, and plausible, answer. I often argue that the best reason for using massive N data is not for ludicrously precise estimates over the whole population, but for the just big-enough N it often gives for inference about fine-grained subpopulations (or similar ideas like really precise matching). Same idea could be in play here. I haven’t seen any reference to an interest in local effects, but turnout is presumably conditioned by competitiveness and other features of other races that will vary across the state. I’ll buy that.

(I don’t see how spillover could have been an intended positive. In hindsight, it got so much spillover — everyone reading this page just got some of the treatment — that it unambiguously broke the whole experiment.)

Burt:

One reason I was thinking about spillover as a goal was there was that silly Facebook experiment on voter turnout a couple years ago that was featured in one of the tabloids . . . I don’t remember all the details, I think they had n=60 million or something like that, and they had a small main effect and a really really tiny indirect effect, but the indirect effect was statistically significant and it had a sexy story because it was a network effect. So I could imagine that this new study had this sort of thing in mind.

Not my area in either case, but I personally didn’t find the Facebook experiment silly. (It may have been “featured in one of the tabloids” but an alternative set of facts would be “published in Nature” and “cited over 250 times in 2 years.”) But de gustibus and all that … agree to disagree on that one.

Sticking just to comparative research design … the Facebook experiment was studying network effects through an intervention over a known network structure. I don’t see anything like that here. The authors’ discussion of it discusses only the main effect (of introducing ideological information about candidates in nonpartisan races).

Burt:

Yes, Nature was the tabloid I was referring to. I hadn’t remembered which of the tabloids this article had appeared in.

I thought the Nature experiment resulted in a very large, increase in turnout?

“Just how much can activity on Facebook influence the real world? About 340,000 extra people turned out to vote in the 2010 US congressional elections because of a single election-day Facebook message, estimate researchers who ran an experiment involving 61 million users of the social network”

Maybe we are not referring to the same study? http://www.nature.com/news/facebook-experiment-boosts-us-voter-turnout-1.11401

Justsomeone:

340,000/61,000,000 = 0.006. This is not zero but I would not call it large.

Ah, of course. My UK years trained my neurons to fire differently on the word “tabloids.” Apologies.

Hey—I just noticed another thing about that mailer. It’s asymmetric: it puts Barack Obama farther to the left than Mitt Romney is to the right. This characterization may be accurate, or maybe not—I guess it depends on what issues we’re talking about here—but it’s a way in which the intervention could potentially help the more conservative candidates in these races (by placing the candidates closer to the middle of the scale).

No big deal, it’s just an interesting way in which a seemingly-neutral way of presenting information, might not really be so neutral at all.

How to convert a set of factual parameters into a single left-right score is a process ripe with subjectivity. Its a stretch for the authors to even claim they did this in any “neutral” or objective manner.

The Romney point must be some sort of average over Romney(2004), Romney(2008) and Romney(2012). Those three points taken individually have enough range to define the scale, so it seems they are throwing away a lot of information by averaging them (and they make the scale impossible to interpret by not disclosing how they weighted the average).

The Obama point is just weird. I generally think of Obama as centrist, perhaps even slightly right of center; with their scale, where do actual socialists go?

They move to France (Sorry, the temptation was irresistible)

Unless I’m reading the DIME database incorrectly, the 2012 ideal point for democratic socialist Bernie Sanders would be on top of the ideal point for Barack Obama at -1.6. For reference, lifetime scores for Ted Kennedy, Lincoln Chafee, and Jesse Helms are -0.9, 0.0, and 1.0, respectively. Senator Barack Obama in 2006 landed at -1.35. Maybe presidential candidates get pushed toward the extreme in the estimates. There’s two 2012 scores for Romney / Ryan: 0.9 and 1.2; I think that the second score is based on donations after Romney won the nomination.

The ideal point estimates in the mailer aren’t based on issues directly: the estimates are based on patterns in campaign donations, so maybe Obama has a relatively liberal set of donors or donors who are less likely to spread their donations across the political spectrum.

In my introductory Statistics course, they taught me that 32 was the minimum sample size needed. But in practice it seems like most surveys have 1-2k and sometimes as big as 10k. Could someone please explain why 32 isn’t enough? Is it simply that the margin of error is too big for practical purposes? Or is there more to it than that?

Tim:

Since nobody else has responded to your question, I’ll offer a brief reply:

Lots of people have suggested various unqualified “minimum sample sizes.” Don’t trust anything like that — in statistics, one size (metaphorically as well as literally) definitely does not fit all. A lot depends on context. Your question, “Is it simply that the margin of error is too big for practical purposes?” shows good intuition. Put in more general terms: The sample size needed to detect a desired difference depends on the situation, including just what it is you are trying to measure, how good your measurement is, how many things you are trying to study with the sample, how much the sampled population varies on the item(s) under study, and what type of analysis is needed.

Tim, there’s no magic number. The number of samples you need depends on the size of the effect you’re looking for, the precision you desire in measuring it, and the amount of variability in your measurements (both due to intrinsic variation in the population and and due to measurement error).

If the effect size is very large, the precision you desire is low, and the variability is low, then the sample size can be very low. (Suppose I want to know “how does the weight of an adult elephant compare to an adult human”, and I will tolerate very low precision. Maybe I only need to measure one or two of each).

If the effect size is very small, the precision you desire is very high, and the variability is very high, then the sample size needed is enormous. This is often the situation in elections: if the election is very close (i.e. the average difference between the candidates is very small as far as voter preference), then you need very high precision, and the intrinsic variability is very high (ask 100 voters, you get lots who favor one candidate and lots who favor the other). If you were trying to determine who would win the 2000 Florida presidential election, data from a random selection of 32 voters was not going to tell you. Nor would 320 or 3200 or 32000 or even 320,000.

That said, it’s kind of interesting that a sample size of around 30 to 200 does seem to be roughly right for many real-world problems. I think what’s going on there is that if the effect is really big then everybody knows it already so you wouldn’t bother doing any formal statistics, and if the effect is rather small or the variability is very high then you often don’t care about it anyway, so a large fraction of interesting problems require a sample size of a few dozen or so. But, as the presidential election example indicates, that is not always true. Another example is whether taking statins or aspirin reduces the risk of heart attacks: we’d be interested in even a small effect (because if low doses of aspirin reduce risk even a little bit, hey, why not?) but you need a really really big sample size to tell how big the effect is (or even what direction). A sample of 32 people is not going to tell you anything.

As I was taught at university, sampling (independent) 30/35 obs from a distribution that looks very non-normal has a distribution for it’s sample mean that looks pretty close to normal thanks to the CLT. It was just a rule of thumb for a special case.

So its interesting that despite the growing norm to preregister experiments (at places like EGAP: http://e-gap.org/) in the discipline it appears this wasn’t pre-registered? If it was, you can go look up exactly why they wanted this sample size and settle it, because they’d have laid it out in that plan.

Which raises maybe a more interesting question — that pre-registration of experimental designs could play a role in instituting some kind of “ethics” check, where soon-to-be experiments that seem to cross the acceptable threshold of impact/deception (itself highly debatable I know, but still) could be flagged in advance and discussed before it all blows up like this.

Ideally, this is the job of IRB’s. But IRB’s at most universities seem incapable of reasonably assessing social science work — they’re set up to address hard science issues and often don’t really know how to engage with proposals for experiments like these. But what I’m thinking about is an effort by the experiments community within political science to “self-police,” which ultimately could become a public goods that helps experimentalists overall if we can prevent blowups like this in the future while still doing most of this work

This is an interesting suggestion. I think IRBs know something about how to handle human subjects lab experiments, even those run by social scientists, but are largely unprepared for dealing with these sorts of field experiments. In particular, while they tend to spend a lot of time worrying about informed consent and deception (although both of these were also issues here and the Dartmouth IRB signed off) I don’t think they’re designed to deal with potential externalities, like the population of an entire state getting angry at you for messing with a “nonpartisan” judicial election, or the potential to affect election outcomes, or to make legislators suspicious that every point of contact they get from a constituent is just some academic experimenting on them.

I worry though, that many political scientists wouldn’t think that anything these people did (aside from affixing the state seal) was wrong, or even potentially harmful. I’m not sure, in other words, that asking people who have very different norms from the mainstream will achieve the desired goal, which is really protecting the viability of research like this (and funding, etc) for the long term.

Dan:

I’m just wondering: Is there a reason why you (and, earlier, Chris) put “nonpartisan” in quotes? Are you saying that it’s not really nonpartisan? I don’t know anything about Montana elections so I was just taking the nonpartisan label as being accurate, indeed one thing that bothers me about this study is that the intervention turns a nonpartisan election into something more partisan.

Andrew:

I can’t speak to whether or not the judges behave in a partisan way on the bench, but this particular race has been quite partisan (eg http://tinyurl.com/nn43mgv). This is one reason that people reacted so strongly to the study mailer and it also presents a potentially big confound, not to mention likely generates a treatment effect that seems likely to dwarf that of the mailer. So maybe they really did need 100,000 participants to tease out the effect of their contribution to partisan information here…

Having spent over two decades on a couple of IRBs, I can tell you two things about them.

The first is that their ability to deal with a particular kind of research is mainly a function of the disciplines of the people who sit on them. There are some institutions that have multiple IRBs to cover different areas of specialization for precisely this reason. Briefly put, there is a lot of variation at the IRB level.

The second is that IRBs are generally not prone to engage in formal ethical inquiry. They perceive their role primarily as the enforcement of the various laws and regulations governing human subjects research. Those laws tend to focus very much on individual participant rights, harms, and benefits and rarely extend to consideration of population-level effects. Had this study come before either of the IRB’s I have served on, some of us would have shaken our heads and said it raises serious ethical questions, but in the end, I think we would have approved this study as it doesn’t seem to transgress any laws or governmental regulations.

Clyde:

I do not think the study should’ve been done, but I don’t think it’s the role of the IRB to stop it. Certain things are unethical but still follow the rules, and I think that setting up the IRB to be some sort of ethical arbiter will just create more problems.

N in this study is not necessarily 100000. It’s possible N is the number of those 100K whom they contact for the survey AND who agree to participate. I have no idea how many they actually planned to survey or what they anticipated participation levels would be.

More likely, this may was a cluster design, and they were not actually planning on doing a follow up survey at all. They would just look at turnout levels by district or municipality (whatever the cluster level), and compare those to the non-selected ‘controls’ and each other. In order to execute that design, I could see how you would need to reach a large % of voters in a particular cluster in order to pick up effects from the administrative turnout data.

Fata’s comment about cluster design makes sense.

BUT isn’t whether you voted a matter of public record (not for whom, but whether)?

Zbicyclist:

Yes, it was my impression that these voter turnout studies are typically analyzed by checking to see who voted.

Keep in mind that Bonica has a start up political consulting firm that intends to do exactly this sort of work. I.e., use the DIME database/models to be able to place candidates and potential candidates (i.e., if you are a PAC or party looking to recruit candidates) on an ideological scale and make that information available to voters.

So maybe it was marketing, not science that drove the sample size.

A full-scale demonstration where providing that sort of ideology-scale information changed enough votes to matter in a real world election would certainly be a selling point to investors and potential clients. And nice to get said beta test funded by Hewitt and Stanford rather than your firm’s own pocket.

https://www.crowdpac.com/about

Aprof:

It could be marketing, or maybe even more likely it could be a convergence of interests, that he would like his business to succeed and also thinks the study has research value (just as I both want more funding for Stan and think that Stan is a public good). In any case, though, he’d have to convince his funders that sending out 300,000 letters was a good idea. Somewhere along the way, I imagine someone would’ve thought that 300,000 is a big number. But I guess it’s possible that the sheer size of the experiment could have been appealing from a publicity standpoint: big data and all that.

Instead of speculating about the research purpose, should we not be asking Stanford or Hewlett foundation to share the research proposal that justified the foundation award? And should we not be asking Dartmouth to share the IRB documentation? Maybe the latter has too much sensitive information (but that can be redacted), but relevant parts of the proposal on the grant application should certainly be made public, which I suppose might happen in the course of a lawsuit. Absent that kind of documentation, we are merely left to speculate on the motives and intentions of the researchers.

+1

YK:

We could just ask the researchers directly. I know Rodden and was thinking of asking him but I didn’t want to bug him, as I’m guessing he’s already busy dealing with questions about this right now.

My understanding is that they’re comparing treatment districts (or precincts, or counties, etc.) with control districts, so the N is actually something much smaller. But even if they were looking at individuals’ turnout with an N of 100,000, keep in mind that this isn’t a lab experiment where you can fairly certain that each person has been exposed to the treatment. If I’m any indication as a mail-receiving voter, most of those people are going to throw the mailer away with at most a cursory look. I don’t know what would be reasonable to expect as a rate of reading, but maybe they’re only expecting 1,000 people to read the thing.

Disclosure: While I know the co-PI’s of the study, I have no special knowledge of what they’re doing. This post is conjecture based on public reports of the study.

This is probably a roll-off study, not a turnout study. If you wanted to affect voter turnout, this would be a really bad way to do it. Very few people go to the polls for a judicial race, and giving them more information wouldn’t change much.

However, once someone shows up at the polls, they don’t have to vote in every race. People cast fewer votes in judicial races, and non-partisan judicial races in particular, than in top-of-the-ballot elections. So the researchers were trying to assess if one of the reasons for this high rate of roll-off was that people didn’t have partisan informational cues about the candidates. By giving people some information about the ideological position of the candidates, they’re trying to see if people will be more apt to vote.

The trouble with roll-off studies (as with vote choice studies) is that you can’t get individual-level information about roll-off. Instead, you have to assign treatment at the level of aggregation (most likely the precinct) and then compare what proportion of voters voted in the judicial election. You need a lot of treated people to detect reasonably sized roll-off effects.

100k doesn’t seem ridiculous in this context. I’d also like to add that someone could’ve done such a study by sending a mailer that said candidate X is closer to the Dems and canidate Y is closer to the GOP, but using Bonica’s CFscores make such a statement more empirical and (in my opinion) fair.

An even better answer. That makes it completely reasonable (from a statistical power point of view). Thanks.

You are probably correct about this being a roll-off study (research summary here: PDF).

One of the hornet nests that they kicked was the Montana “non-partisan” judicial election between Mike Wheat and Lawrence Van Dyke that has attracted lots of outside money (close to $1 million I believe). Van Dyke has very little experience practicing law in Montana (there was a controversial state Supreme Court case that ruled that he was eligible to run nonetheless). On the other hand, Wheat is supported by the in-state legal establishment, which leans relatively left. The result is that the experimental mailing puts Wheat next to Obama and Van Dyke next to Romney, in a conservative state where Obama has a 35% approval rating. This is the sort of thing that presumably “cancels out” over multiple elections, but 100,000 mailers in Montana is a fairly big deal.

More generally, I have three problems with the mailing:

1. There’s really one way to use the information: if you prefer Obama to Romney or vice versa, vote for the candidate closer to him.

2. Obama/Romney are “familiar” names with familiar political positions, but as political leaders they also serve as attack magnets for the opposing party. This is especially true for the sitting President.

3. While the CF score is “empirical,” it’s also not obvious what it means, and the flyer makes no attempt to explain. Scoring candidates on a single dimension is a task necessarily full of assumptions. This might be OK in a political science paper read by sophisticated analysts, but it’s highly questionable in a “Bring this to the polls!” mailing that assumes the general public is too dumb for anything more than a line with the points “Obama” and “Romney” marked out.

1) Recent work from Ahler and Broockman not withstanding, voting on the basis of which presidential candidate you prefer seems pretty okay. It kind of underlines the ridiculousness of holding elections for a judge: there’s basically no way for non-experts to evaluate them on judicial decision-making.

2) Point taken, but are campaign donations a bad measure in this case? I haven’t seen any evidence that the scalings were way off the mark in this race. Would a bunch of lefty lawyers go out-of-state to recruit a conservative? Seems unlikely.

I agree that elections for judges are absurd. But while “in general” it may be fine to slap a “D” or an “R” on everyone’s name, this is a particular election between two candidates with fairly large differentials in experience. And while highlighting the partisan affiliations of the donors is something that can be done objectively by a computer, that doesn’t mean that it is the most objective or most important bit of information that should be provided to voters. Republican campaigns around the country are trying to put their opponents next to Obama, while very few Democratic campaigns are campaigning using photos of Romney and their opponents side-by-side. When you combine these facts with an official-looking seal and an imperative to “bring the guide to the polls”, these professors were basically walking into a minefield.

Pingback: Shared Stories from This Week: Oct 31, 2014

Pingback: A Montana resident sent me this - Statistical Modeling, Causal Inference, and Social Science Statistical Modeling, Causal Inference, and Social Science

I heard a paper today at a marketing conference that may provide an answer. Most of the comments here reflect expertise in survey research / political research.

But advertising research typically has low power, and in many ways this is an advertising test. The paper is by Garrett A. Johnson (Rochester), Randall A Lewis (Google), David Reiley Jr.(Google) and lined to here: http://research.chicagobooth.edu/~/media/DB9DC2E6376A474F9155DD367B4C781C.pdf I heard Garrett talk from slides and I haven’t read he complete paper; the discussion of power is on page 3 and I’ll briefly excerpt it:

“our experiment includes over three million

joint customers of Yahoo! and the retailer, of which 55% are exposed to an experimental ad….Our overall results suggest that the retailer ads increased sales and that the campaign was

profitable. Our experimental estimates suggest an average sales lift of $0.477 on the Full treatment

group and $0.221 on the Half treatment group, which represent a 3.6% and a 1.7% increase over the

Control group’s sales. Each ad impression cost about 0.5¢, and the average ad per-person exposure

was 34 and 17 in the Full and Half groups. Assuming a 50% retail markup, the ad campaign yielded

a 51% profit, though profitability is not statistically significant (p-value: 0.25, one-sided).”

Their experimental treatment consisted of showing the ad fifty (50) times to the experimental group versus zero for a matched control.

So here we have an ad with excellent return on investment (51% profit) with a sample of 3 million but not enough power to make the result statistically significant.

As Phil noted above “If the effect size is very small, the precision you desire is very high, and the variability is very high, then the sample size needed is enormous.” But the effect can be ‘very small’ but still pay out for the advertiser. We don’t usually think of elections in these terms — there’s no concept of paying out in terms of the number of votes, just whether you win or lose, but there’s no reason why the logic of advertising testing shouldn’t be applicable to a close election. Maybe the authors, who seem to also be political consultants, wanted some nice results in their pocket.

Re “Our special romantic evening became reduced to my wife watching me curl up in a fetal position on the tiled floor of our bathroom between rounds of throwing up.” How is that Frank Flynn manage to avoid getting sued for libel? Or did he get sued?

Chris:

The poor guy, his romantic evening was ruined. Don’t you think he’s suffered enough??

Probably so :-)

A related story in yesterday’s NY Times –

http://www.nytimes.com/2014/11/03/us/montana-judicial-race-joins-big-money-fray.html