Someone sent me the following email:

I am an environmental journalist writing an Environmental Science 101 textbook and I’m currently working on the section on hypothesis testing and statistical significance. I am searching for a story to make the importance of thinking statistically come alive for the students, ideally one from the environmental sciences. I’m looking for a time when an effect seemed huge to the naked eye, but wasn’t or a time when an error made an insignificant result look significant. Or maybe a story about how the media took an insignificant relationship and blew it out of proportion. Or maybe a story, like the one you told so well recently in Slate, about how you can find “significance” if you just keep throwing enough mud at the wall.

It could be old or new, obscure or well known. The key thing, to make it work for the textbook, is that it have consequences—either implications outside of science, or high drama inside science.

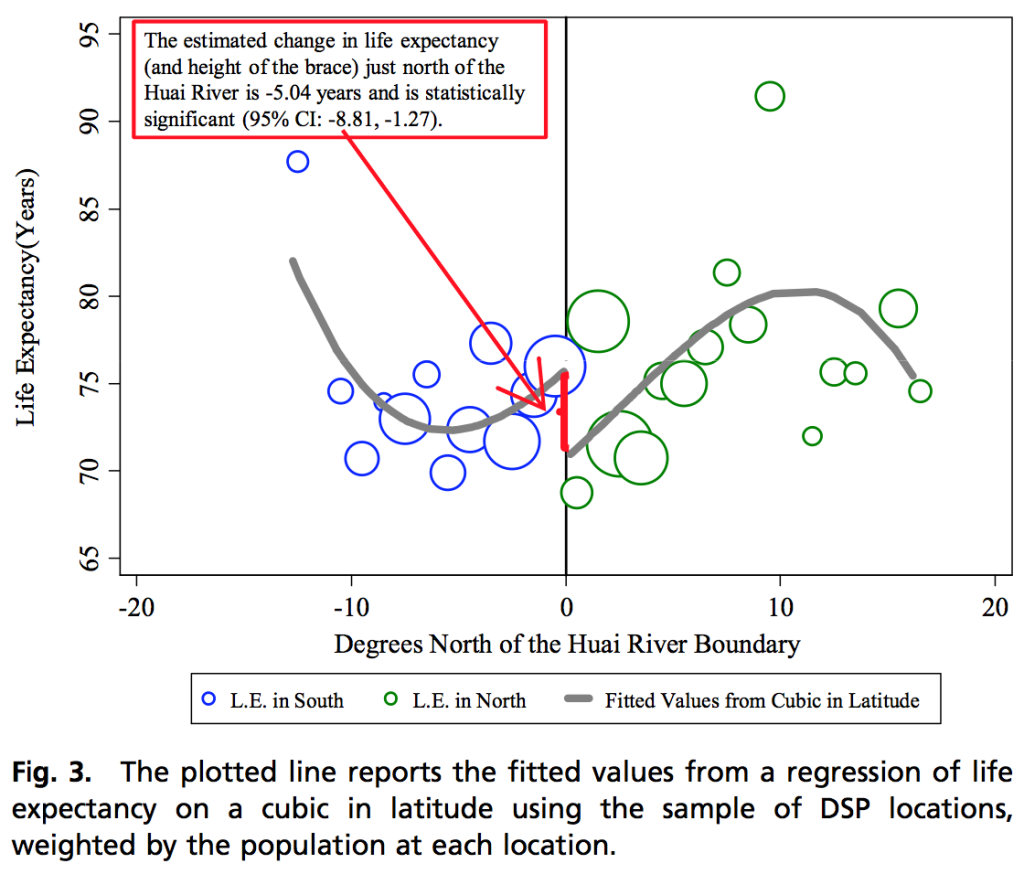

I pointed the textbook writer to this example, which seemed ideal:

Although, as my correspondent pointed out to me, this example might be too complicated for students in an intro class.

Andrew, this is off-topic, but I wonder whether you would be willing to read my blog post that my name links to. Thanks.

I like this xkcd: http://xkcd.com/882/ although it’s clearly fictional.
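The arithmetic behind that comic is worth showing students. Here is a minimal sketch. It assumes the tests are independent and that each uses the conventional 0.05 cutoff. When nothing is going on, each test stays non-significant with probability 0.95. The chance that at least one of k tests comes up "significant" is therefore 1 - 0.95^k. The function name is a hypothetical helper.

```python
def prob_at_least_one_false_positive(k: int, alpha: float = 0.05) -> float:
    """Chance that at least one of k independent tests of true null hypotheses has p < alpha."""
    # Each null test stays non-significant with probability 1 - alpha,
    # so all k stay non-significant with probability (1 - alpha) ** k.
    return 1.0 - (1.0 - alpha) ** k


if __name__ == "__main__":
    for k in (1, 5, 20, 100):
        print(k, round(prob_at_least_one_false_positive(k), 3))
```

With k = 20, the number of jelly-bean colours tested in the comic, this comes to about 0.64. A spurious "discovery" is more likely than not.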

When in doubt, give students stories involving beer. Particularly Student’s beer!

http://sites.roosevelt.edu/sziliak/files/2012/02/William-S-Gosset-and-Experimental-Statistics-Ziliak-JWE-2011.pdf

An excellent example is the global temperature hockey stick graph.

For the full history & details, see the gripping detective story by A. Montford:

http://www.amazon.com/The-Hockey-Stick-Illusion-Climategate/dp/1906768358/

Searching posts at Steve McIntyre’s website could also help: http://climateaudit.org/

Apparently there are still some people defending it.

“An excellent example” of what exactly? Whatever the manufactured ‘controversy’ regarding the Hockey Stick Graph is about (alleged data-adjustments, alleged scientific misconduct, alleged deliberate deception), it is not about statistical significance.

If you want a climate-related statistics example, try looking at the “no warming since 1995” claims of a few years ago. Climate change deniers stated that there was no statistically significant warming from 1995 to 2009, even though to the eye there clearly was a warming trend (every year in the 2000s was warmer than the 1990-1999 average). When the data for 2010 came in, the “no warming since 1995” claim collapsed: with the new year added to the series, the p value crossed the traditional .05 threshold.

(Some background info here: http://theness.com/neurologicablog/index.php/global-warming-and-statistical-artifacts/ )
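The mechanism can be sketched with a plain least-squares trend test. The helper names below are hypothetical. The sketch treats the yearly values as independent, which real temperature series are not quite, so take it as an illustration rather than a reanalysis. The statistic is t = slope / SE(slope). SE(slope) shrinks as Sxx, the spread of the years around their mean, grows.

```python
import math
from collections.abc import Sequence


def trend_t_statistic(x: Sequence[float], y: Sequence[float]) -> tuple[float, float, float]:
    """Ordinary least-squares slope, its standard error, and t = slope / SE."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least 3 paired observations")
    mx = sum(x) / n
    my = sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    # Residual variance uses n - 2 degrees of freedom (slope and intercept are estimated).
    sse = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    se = math.sqrt(sse / (n - 2) / sxx)
    return slope, se, slope / se


def sxx_equally_spaced(n: int) -> float:
    """Sxx for n consecutive, equally spaced years (unit gap): n(n^2 - 1)/12."""
    return n * (n * n - 1) / 12


if __name__ == "__main__":
    # Made-up toy numbers, not temperature data.
    print(trend_t_statistic([0, 1, 2, 3, 4], [0, 2, 1, 3, 4]))
```

On the toy series the slope is 0.9 and t is about 3.58. For annual data, Sxx is 280 for 1995 to 2009 (n = 15) and 340 once 2010 is added (n = 16). Suppose the slope estimate and residual scatter stayed the same. Then t would grow by a factor of sqrt(340/280) ≈ 1.10. Meanwhile the two-sided 5% critical value only edges down, from about 2.160 (13 degrees of freedom) to 2.145 (14). A borderline trend can therefore tip over the line with one extra year, without anything dramatic happening in the data.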

Of course this example is exactly the opposite of what the email asked for; it’s an obvious case made to look insignificant by statistics.

What about this?

http://www.washingtonpost.com/national/health-science/the-press-release-crime-of-a-biotech-ceo-and-its-impact-on-scientific-research/2013/09/23/9b4a1a32-007a-11e3-9a3e-916de805f65d_story.html

It has quite a bit of drama (a ruined CEO career, home detention) and illustrates a common abuse of statistical significance testing: “data dredging” (cherry-picking from multiple tests).
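One common form of data dredging is slicing a trial into subgroups and reporting whichever one "works." Below is a minimal simulation in which every setting is hypothetical; it is not a model of the case in the article. The treatment has no effect at all, and outcomes are standard normal in both arms. Each of several disjoint subgroups gets its own two-sided z-test at 0.05, with the outcome SD assumed known.

```python
import math
import random
from statistics import fmean


def z_test_p_value(a: list[float], b: list[float], sigma: float = 1.0) -> float:
    """Two-sided p-value for a difference in means when the outcome SD (sigma) is known."""
    z = (fmean(a) - fmean(b)) / (sigma * math.sqrt(1 / len(a) + 1 / len(b)))
    return math.erfc(abs(z) / math.sqrt(2))


def null_trial_has_a_hit(rng: random.Random, n_subgroups: int, n_per_arm: int,
                         alpha: float = 0.05) -> bool:
    """Simulate one no-effect trial; True if any subgroup reaches p < alpha."""
    for _ in range(n_subgroups):
        # Both arms come from the same distribution, so any "effect" is chance.
        treated = [rng.gauss(0.0, 1.0) for _ in range(n_per_arm)]
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_arm)]
        if z_test_p_value(treated, control) < alpha:
            return True
    return False


def dredging_hit_rate(n_trials: int = 1000, n_subgroups: int = 10,
                      n_per_arm: int = 30, seed: int = 2013) -> float:
    """Fraction of simulated null trials in which at least one subgroup looks significant."""
    rng = random.Random(seed)
    hits = sum(null_trial_has_a_hit(rng, n_subgroups, n_per_arm) for _ in range(n_trials))
    return hits / n_trials


if __name__ == "__main__":
    print("1 subgroup:  ", dredging_hit_rate(n_subgroups=1))
    print("10 subgroups:", dredging_hit_rate(n_subgroups=10))
```

With ten subgroups, the hit rate should land near 1 - 0.95^10 ≈ 0.40, even though every trial is pure noise. With a single pre-specified comparison it should be near 0.05.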

Perhaps the one Don Rubin gave at JSM this year, as an example of how a poor statistical analysis of an RCT can be more misleading than an observational study.

http://www.ohri.ca/newsroom/newsstory.asp?ID=136 or http://www.nejm.org/doi/full/10.1056/NEJMoa0802395

Perhaps ask people what they would conclude when they read these links.

Then have them read this: http://www.hc-sc.gc.ca/dhp-mps/medeff/advise-consult/eap-gce_trasylol/final_rep-rap-eng.php

Excerpt:

Following the provision of this additional data by BART investigators, the EAP-Trasylol® identified the following key findings:

• Accounting for the excluded patients in the analysis reduced the statistical significance of the mortality signal for Trasylol®. It was therefore possible that the risk of death noted in the BART study was due to chance.

• There were issues identified in other aspects of the study involving how the primary outcome data was examined (primary outcome was risk of massive bleeding). In particular, there was an unusually large number of reclassifications of outcomes from the originally reported data, with a large (approximately 75%) change rate in primary outcome. Reclassifications were in opposite directions for Trasylol® versus Tranexamic Acid and Epsilon Aminocaproic Acid, favouring the latter, and these changes increase through the duration of the study. This observation was never satisfactorily explained.

Then do an impact study – what were the monetary and patient costs?
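The first finding in the excerpt is that adding back excluded patients weakened the mortality signal. One way this can happen is simple dilution. The sketch below uses entirely hypothetical counts (not the BART data) and a pooled two-proportion z-test. If the excluded patients died at the same rate in both arms, including them shrinks the between-arm difference relative to its standard error.

```python
import math


def two_proportion_z_test(deaths_a: int, n_a: int, deaths_b: int, n_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test; returns (z, two-sided p) via the normal approximation."""
    pooled = (deaths_a + deaths_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (deaths_a / n_a - deaths_b / n_b) / se
    return z, math.erfc(abs(z) / math.sqrt(2))


# Hypothetical counts for illustration only (NOT the BART data).
analysed = two_proportion_z_test(42, 400, 25, 400)
# Add back 100 hypothetically excluded patients per arm, with the same 8% death rate in each arm.
with_excluded = two_proportion_z_test(42 + 8, 400 + 100, 25 + 8, 400 + 100)

if __name__ == "__main__":
    print("analysed only:      z = %.2f, p = %.3f" % analysed)
    print("with excluded added: z = %.2f, p = %.3f" % with_excluded)
```

With these made-up numbers, the analysed comparison gives z ≈ 2.17 (p ≈ 0.03). Adding the equal-risk patients back gives z ≈ 1.95 (p ≈ 0.05), just on the other side of the conventional cutoff.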

I think that the controversy over using geoengineering to address climate change could be used to teach undergraduates to compare biological significance with statistical significance. Iron fertilization to increase ocean carbon sequestration is a great example; money, climate change, and p-values all intersect. Significance tests of phytoplankton responses to iron additions have been used as a justification for this type of geoengineering.

Some background here:

http://www.gfdl.noaa.gov/bibliography/related_files/wgg0302.pdf

http://www.reuters.com/article/2013/08/30/us-climate-geoengineering-special-report-idUSBRE97T0BZ20130830
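The gap between statistical and biological significance can be made concrete with a toy calculation. The numbers are hypothetical, not from any iron-fertilization study. Hold a tiny true effect fixed at 0.05 standard deviations and let the sample size grow. The sketch assumes a known SD and an observed difference that lands exactly on the true effect.

```python
import math


def z_for_true_effect(d: float, n_per_group: int) -> float:
    """z statistic if the observed difference equals a true standardized effect d.

    Hypothetical helper: with known SD and n per group, the standard error of a
    difference in means is sqrt(2 / n) SD units, so z = d / sqrt(2 / n).
    """
    return d / math.sqrt(2 / n_per_group)


def two_sided_p(z: float) -> float:
    """Two-sided p-value from the standard normal distribution."""
    return math.erfc(abs(z) / math.sqrt(2))


if __name__ == "__main__":
    d = 0.05  # a tiny, hypothetical effect: one-twentieth of a standard deviation
    for n in (100, 1_000, 10_000):
        z = z_for_true_effect(d, n)
        print(f"n per group = {n:>6}: z = {z:.2f}, p = {two_sided_p(z):.4f}")
```

The effect is identical in every row; only the p-value changes. It falls from about 0.72 at 100 per group to about 0.0004 at 10,000 per group. Whether a change that small matters for carbon sequestration is a scientific question that the p-value cannot answer.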

A really good story can be put together from attempts in Germany to determine whether some people have the ability to detect subsurface water by “dowsing.” I do not have all the references at hand, but the story, along with references, is in Wikipedia. In the 1988 test, 6 out of 500 people performed at a level supposedly not at all explainable by chance. Later it was shown that the statistical analysis was seriously flawed and that, in any case, the six dowsers were not able to replicate their performances upon retesting.
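Screening hundreds of people invites false positives, and a small binomial calculation shows why. The per-person chance q that a non-dowser clears the bar by luck is a hypothetical input here. The comment does not say what criterion the 1988 test used, so the point is how much the answer depends on q.

```python
from math import comb


def prob_at_least(k: int, n: int, q: float) -> float:
    """P(X >= k) for X ~ Binomial(n, q): chance that k or more of n pure guessers pass by luck."""
    return 1.0 - sum(comb(n, i) * q**i * (1 - q) ** (n - i) for i in range(k))


if __name__ == "__main__":
    # Hypothetical per-person pass rates for someone with no ability at all.
    for q in (0.01, 0.001):
        print(f"q = {q}: P(6 or more of 500) = {prob_at_least(6, 500, q):.5f}")
```

With q = 0.01, about five of 500 guessers are expected to pass, and six or more occur with probability about 0.38, which is unremarkable. With q = 0.001 the same count becomes very unlikely. The count alone says little without the per-person criterion, and even a genuinely surprising count still needs to replicate.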

The Hawthorne Effect makes for an interesting story: it was hugely influential but is apparently not supported by the data. It was discussed recently (27 September) on the BBC radio program More or Less:

https://en.wikipedia.org/wiki/Hawthorne_effect

http://downloads.bbc.co.uk/podcasts/radio4/moreorless/moreorless_20130927-1700a.mp3

http://www.aeaweb.org/articles.php?doi=10.1257/app.3.1.224

Serendipitously, yesterday Peter Bruce of statistics.com presented these slides:

http://www.flickr.com/photos/76514656@N04/sets/72157636099330615/

as part of a larger event about “Data Mining for Patterns That Aren’t There”:

http://www.meetup.com/Data-Science-DC/events/137933372/

Some good examples!

While doing research for my dissertation at Virginia Tech, I was trying to identify the interpersonal characteristics that make for the best teachers. I had noticed a colleague at another university who always had a small group of undergrads following him around and hanging on his every word, so I wanted to know what “type” of leadership Prof XYZ practiced. Prof XYZ never wanted to reply to my inquiries until one day he said, “OK, you want to know what type of leader I am? Well, I’m a spaghetti leader!” Meaning: I let it boil until it’s done, throw it against the wall, and if it sticks, I use it. This was a very crude response, especially when I was looking for a specific leadership theory. Obviously he knew little of official leadership terminology, only how to do what came naturally. I do believe that a better understanding of the leadership style Prof XYZ exhibited would have served him well. After all, what’s wrong with knowing what one is doing?