At the bottom of this entry I wrote that the so-called Fisher exact test for categorical data does not make sense. Ken Rice writes:

It turns out the standard conditional likelihood argument (which to me always looked prima facie contrived and artifical) is in fact exactly what you get from a carefully considered random-effects approach.

There are some nice symmetries in the random-effects prior, effectively it forces the same prior beliefs for cases and controls. It also has a nice non-parametric property – effectively one only specifies the first few moments of the prior, it’s most attractive in e.g. matched pair studies.

Naturally, where one had good backing for a ‘bespoke’ prior, the conditional approach isn’t going to beat it, but as a default I believe it’s acceptable, and does actually make some sense.

Ken’s paper is here, and here’s an entertaining powerpoint [link fixed] on the topic.

Larger models that reduce to particular smaller models

I’ll have to digest all this before I have any comments. Except that it reminds me of something similar with models of censored and truncated data. Truncation can be considered as a generalization of censoring where the number of censored cases is unknown. Thus, to do a full Bayesian inference for truncated data you need a prior distribution on the number of censored cases. It turns out that, for a particular choice of prior distribution, the truncation model reduces to the censoring model. We discuss this in chapter 7 of Bayesian Data Analysis (second edition) and section 2 of this paper from 2004. As I wrote then:

once we consider the model expansion, it reveals the original truncated-data likelihoodas just one possibility in a class of models. Depending on the information available in any particular problem, it could make sense to use different prior distributions for N and thus different truncated-datamodels. It is hard to return to the original “state of innocence” in which

N did not need to be modeled.

The same issue arises when considering additional input variables in a regression models.

Also

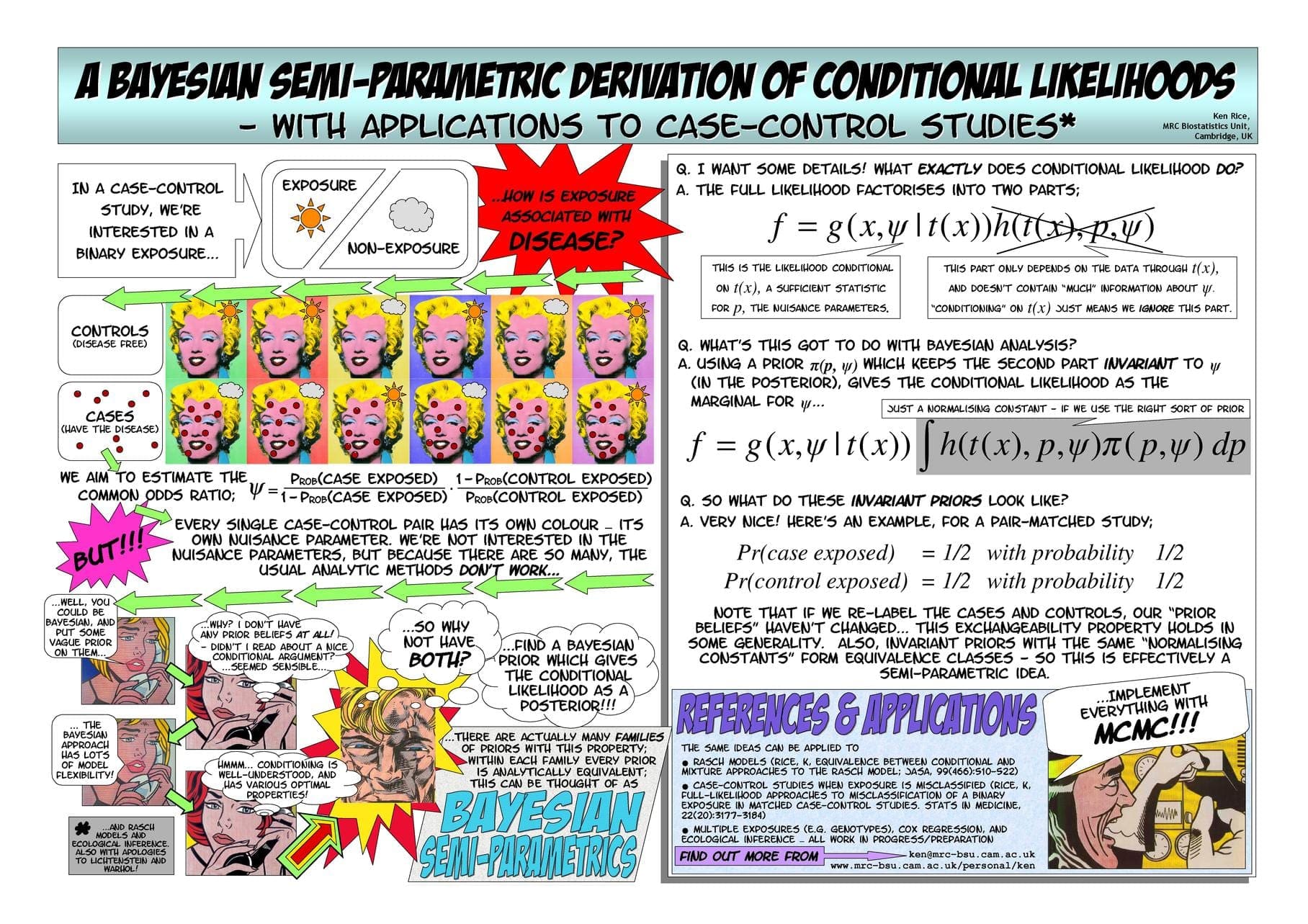

Here is Ken’s conference poster:

The link to the presentation should be http://www.stat.columbia.edu/~gelman/stuff_for_bl… I guess.

I'm truly inspired by that conference poster. It sets a high bar for communicating effectively in that medium while drawing one's attention.

The random effect argument leading to Fisher's exact test is the point made in the paper I alluded to previously:

Streitberg B, Röhmel J (1991) Alternatives to Fisher's exact test? Biometrie und Informatik in Medizin und Biologie 22:139-146

It is a pity that this paper is not better known, which is no doubt due to its appearance in a relatively obscure journal.

I'm not sure about the effectiveness of the poster… there was a generation gap between those who liked it (and read it all) and those who seemed to think it insufficiently reverent (and didn't). I do show it off to students sometimes, but take care to point out that poster sessions would be awful if everyone tried similar tricks.

The other key reference to mention is Pat Altham's classic 1969 Biometrika paper (Exact Bayesian Analysis of a 2×2 table, and Fisher's Exact Test). Before she retired, Pat told me she'd always thought the results could be extended to generalized linear models. So far as I'm aware, doing that remains an interesting open problem.